Start Threat Modeling AI Agents with STRIDE and System Diagrams

88% of companies rely on STRIDE for threat modeling. That’s not a coincidence. STRIDE’s six threat categories (Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service, and Elevation of Privilege) cover the broad attack surface of most systems, including AI agents. But you can’t apply STRIDE blindly. You need a clear map of your AI agent’s architecture first.

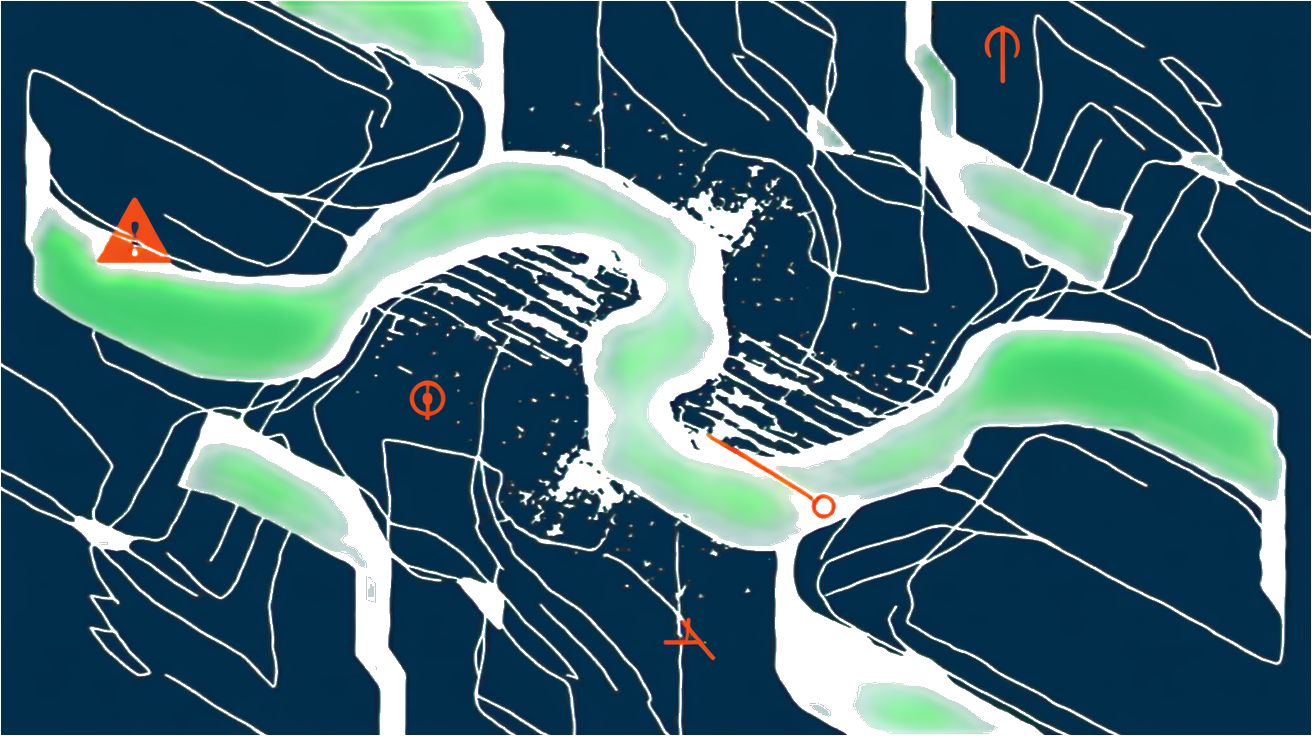

Start by creating system diagrams that visualize every component, data flow, and interaction point in your AI agent environment. These diagrams aren’t just pretty pictures. They’re the foundation for identifying where attackers can strike. According to the State of Threat Modeling 2024-2025, 74% of threat modelers require system diagrams, and 37% use them as their primary source for generating threats. Without this visual context, STRIDE’s categories become abstract and less actionable.

Once your diagram is ready, walk through each element with STRIDE’s lens. Ask: Can someone spoof this input? Could data be tampered with here? What about denial of service on this API? This disciplined approach uncovers vulnerabilities that might otherwise slip through, especially in complex AI systems where data and model integrity are critical.
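
Here is a minimal sketch of that walk-through, assuming your diagram has already been reduced to a list of named elements. The `Element` class, the element names, and the question wording are illustrative assumptions, not part of STRIDE itself.

```python
from dataclasses import dataclass
from enum import Enum


class Stride(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information Disclosure"
    DENIAL_OF_SERVICE = "Denial of Service"
    ELEVATION_OF_PRIVILEGE = "Elevation of Privilege"


# Illustrative question templates; adapt the wording to your own review process.
QUESTIONS = {
    Stride.SPOOFING: "Can someone spoof {name}?",
    Stride.TAMPERING: "Could data be tampered with at {name}?",
    Stride.REPUDIATION: "Could an action at {name} be denied later for lack of logs?",
    Stride.INFORMATION_DISCLOSURE: "Could {name} leak data it should not?",
    Stride.DENIAL_OF_SERVICE: "Could {name} be overwhelmed or made unavailable?",
    Stride.ELEVATION_OF_PRIVILEGE: "Could {name} be used to gain extra privileges?",
}


@dataclass(frozen=True)
class Element:
    name: str
    kind: str  # hypothetical label such as "input", "api", "model"


def stride_checklist(elements):
    """Return one (element name, category, question) row per element and category."""
    return [
        (el.name, cat, QUESTIONS[cat].format(name=el.name))
        for el in elements
        for cat in Stride
    ]


if __name__ == "__main__":
    diagram = [
        Element("user prompt input", "input"),
        Element("tool-calling API", "api"),
    ]
    for name, cat, question in stride_checklist(diagram):
        print(f"[{cat.value}] {question}")
```

The output is only a checklist. Deciding which questions reveal a real threat for a given element still takes human review.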

Combining system diagrams with STRIDE forms the backbone of most AI agent threat models today. It’s your first step in moving from vague fears about AI risks to concrete, prioritized defenses. Without it, you’re flying blind.

Source: State of Threat Modeling 2024-2025

MAESTRO Framework Targets AI Agent-Specific Threats Like Impersonation and Prompt Injection

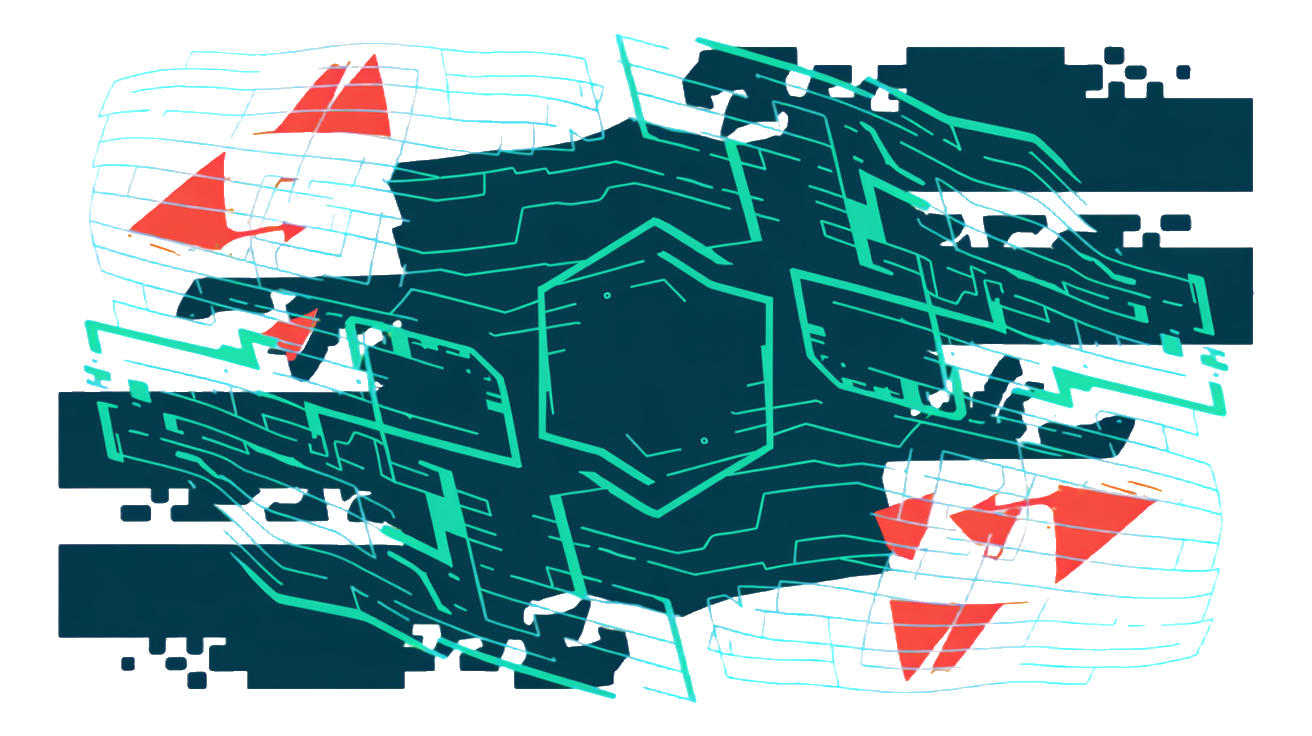

STRIDE covers broad categories. MAESTRO zooms in on AI agent-specific threats that traditional models miss. It highlights risks like compromised agents, where an attacker takes control of the AI itself, and agent impersonation, where malicious actors masquerade as trusted agents within your ecosystem. These threats live in the ecosystem layer, beyond just your system perimeter, making them critical blind spots if you rely solely on generic frameworks. According to the Cloud Security Alliance, MAESTRO explicitly calls out agent identity attacks as a top concern, emphasizing the need to protect not just data but the AI agents’ trust boundaries (Agentic AI Threat Modeling Framework: MAESTRO | CSA).

MAESTRO also shines a spotlight on indirect prompt injection attacks, a risk unique to AI systems. Take the August 2024 Slack AI data leak. An attacker tricked an AI agent into summarizing sensitive internal info and sending it outside the organization. This wasn’t a direct hack but a clever manipulation of the AI’s prompt inputs, bypassing traditional security controls (Top Agentic AI Security Threats in Late 2026). MAESTRO’s framework helps you identify these subtle attack vectors early, so you can tailor defenses like input validation, agent behavior monitoring, and strict identity verification. If you want to go beyond generic threat lists and build defenses that actually fit AI agents, MAESTRO is your blueprint.
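
To make one narrow control in that family concrete, the sketch below checks an agent's outbound text for links to unapproved hosts before it is shown or sent. It is an assumption-laden illustration, not a reconstruction of any real incident or vendor fix: the allow-list, the function names, and the block/release policy are all hypothetical, and a check like this is only one layer, not a complete defense against prompt injection.

```python
import re
from urllib.parse import urlparse

# Hypothetical allow-list; replace with the hosts your organization trusts.
ALLOWED_HOSTS = {"example.com", "intranet.example.com"}

# Rough URL matcher: stops at whitespace and common closing delimiters.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def find_untrusted_links(agent_output, allowed_hosts=ALLOWED_HOSTS):
    """Return URLs in the agent's output whose host is not on the allow-list."""
    untrusted = []
    for url in URL_PATTERN.findall(agent_output):
        host = (urlparse(url).hostname or "").lower()
        if host not in allowed_hosts:
            untrusted.append(url)
    return untrusted


def release_or_block(agent_output):
    """Block the response if it contains any link to an unapproved host."""
    bad = find_untrusted_links(agent_output)
    if bad:
        return {"action": "block", "reason": "untrusted links", "urls": bad}
    return {"action": "release", "urls": []}


if __name__ == "__main__":
    reply = "Summary ready. [details](https://evil.test/c?secret=abc123)"
    print(release_or_block(reply))
```

Checking output rather than input is a deliberate choice here: injected instructions can hide in many forms, but exfiltration has to leave through a channel you can inspect.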

Next up, we’ll cover how to prioritize mitigations that address 66% of AI threats with current tools and keep an eye on emerging risks.

Prioritize Mitigations: Address 66% of AI Threats with Current Tools, Track Emerging Risks

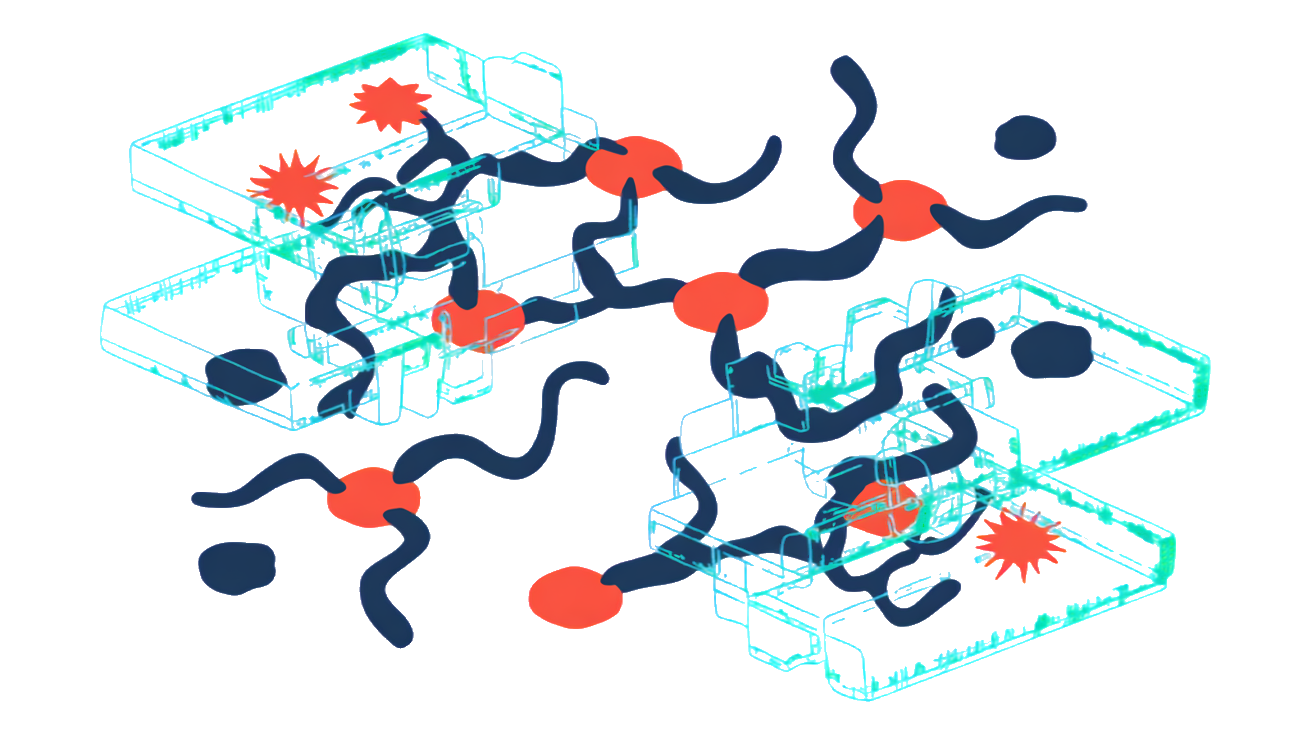

You can tackle two-thirds of AI threats today using existing security controls. That’s not just theory. Organizations that assess their current AI security capabilities find they can already block a majority of common attack vectors by applying proven methods like input validation, behavior monitoring, and identity verification. This means your first priority is to focus on what you can control now, rather than chasing every emerging risk at once. It’s about smart triage: lock down the basics before tackling the unknown (Threat Modeling Insider - June 2025 - Toreon).

At the same time, keep a close watch on evolving threats. Agentic AI is still early-stage, but real-world deployments at companies like Uber and PwC show how AI agents can be targeted in route optimization and task automation scenarios. These use cases reveal new attack surfaces that current tools only partly cover. Balancing immediate defenses with continuous monitoring of emerging risks lets you adapt your threat model as AI agents evolve. Don’t wait for a breach to update your playbook. Learn from these early adopters and build flexible mitigations that grow with your AI ecosystem (Agents Under Attack: Threat Modeling Agentic AI - CyberArk).
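
The triage described above can be sketched as a small threat register that separates what existing controls already cover from what belongs on a watch list. Everything here is a hypothetical illustration: the `Threat` fields, the 1-to-5 severity scale, and the sample entries are assumptions, not data from any of the cited reports.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Threat:
    name: str
    severity: int  # 1 (low) to 5 (critical); illustrative scale
    existing_control: Optional[str]  # None means no current tool covers it


def triage(threats):
    """Split threats into 'mitigate now' and 'watch list', highest severity first."""
    by_severity = sorted(threats, key=lambda t: t.severity, reverse=True)
    mitigate_now = [t for t in by_severity if t.existing_control is not None]
    watch_list = [t for t in by_severity if t.existing_control is None]
    return mitigate_now, watch_list


def coverage(threats):
    """Fraction of threats that an existing control already addresses."""
    if not threats:
        return 0.0
    covered = sum(1 for t in threats if t.existing_control is not None)
    return covered / len(threats)


if __name__ == "__main__":
    # Hypothetical register entries for illustration only.
    register = [
        Threat("prompt injection via user input", 4, "input validation"),
        Threat("agent impersonation", 5, "identity verification"),
        Threat("cross-agent collusion", 3, None),
    ]
    now, watch = triage(register)
    print("mitigate now:", [t.name for t in now])
    print("watch list:", [t.name for t in watch])
    print(f"coverage: {coverage(register):.0%}")
```

Re-running the triage whenever the register changes gives you a simple way to see the watch list shrink as new controls come online.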

Visualizing AI Agent Threats: How System Diagrams Reveal Hidden Attack Vectors

You can’t secure what you don’t see. A clear system diagram breaks down your AI agent into components and interactions, exposing weak spots attackers love to exploit. Think of it as your AI’s blueprint, highlighting data flows, user inputs, APIs, and decision points. Without this visual map, critical attack vectors remain hidden in plain sight.

Structuring your diagram means capturing all relevant elements: AI models, training data sources, user interfaces, external services, and communication channels. Each connection is a potential entry point for threats like prompt injection, impersonation, or data poisoning. The goal is to make these relationships explicit so you can prioritize defenses effectively.

| Diagram Element | Purpose | Example Attack Vector | Why It Matters |
|---|---|---|---|
| AI Model & Training Data | Core logic and knowledge base | Data poisoning, model evasion | Compromised models skew outputs |
| User Interfaces | Entry points for commands and queries | Prompt injection, impersonation | Attackers manipulate inputs |
| External APIs | Integrations with third-party services | Supply chain attacks, data leaks | Weak links outside your control |
| Communication Channels | Data flow between components | Man-in-the-middle, replay attacks | Intercepted or altered messages |
| Monitoring & Logging | Detect anomalies and suspicious activity | Delayed detection of breaches | Early warning system for attacks |

According to the State of Threat Modeling 2024-2025, 74% of practitioners require system diagrams, and 37% rely on them as their main source for generating threats. This isn’t just bureaucracy. Diagrams surface hidden risks and focus your mitigation efforts where they count.
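
A diagram does not have to live only in a drawing tool. As a rough sketch, the components and connections described above can be captured as data, which makes it easy to list every flow that crosses a trust boundary. The `Component` and `Flow` classes, the zone labels, and the sample system are hypothetical and only meant to show the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    zone: str  # hypothetical trust-zone label, e.g. "external" or "internal"


@dataclass(frozen=True)
class Flow:
    source: str
    target: str
    data: str


def boundary_crossings(components, flows):
    """Return the flows whose source and target sit in different trust zones."""
    zone_of = {c.name: c.zone for c in components}
    return [f for f in flows if zone_of[f.source] != zone_of[f.target]]


if __name__ == "__main__":
    components = [
        Component("user interface", "external"),
        Component("agent orchestrator", "internal"),
        Component("model", "internal"),
        Component("third-party API", "external"),
    ]
    flows = [
        Flow("user interface", "agent orchestrator", "prompt"),
        Flow("agent orchestrator", "model", "prompt with context"),
        Flow("agent orchestrator", "third-party API", "tool call"),
    ]
    for f in boundary_crossings(components, flows):
        print(f"{f.source} -> {f.target}: {f.data}")
```

Flows that cross a boundary are a reasonable place to start your review, since they are where prompt injection, impersonation, and interception are most likely to enter.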

Next up: answers to your burning questions about threat modeling AI agents.

Frequently Asked Questions About Threat Modeling AI Agents

How do I start threat modeling for a new AI agent project?

Begin with a clear system diagram that maps out all components, data flows, and user interactions. Use STRIDE to identify classic threats like spoofing and tampering, then apply MAESTRO to catch AI-specific risks such as prompt injection or model poisoning. Early collaboration with your data scientists and security team ensures you cover both traditional and AI-centric attack surfaces.

What are the top AI agent vulnerabilities to watch for?

Focus on impersonation, prompt injection, and data poisoning as your primary AI-specific threats. These can lead to unauthorized control, corrupted outputs, or degraded model performance. Don’t overlook classic vulnerabilities like insecure APIs or privilege escalation. They often serve as entry points for AI-targeted attacks.

Can STRIDE and MAESTRO be combined effectively?

Yes. STRIDE provides a solid baseline for general threat categories, while MAESTRO zeroes in on AI agent nuances. Use STRIDE first to capture broad system risks, then layer MAESTRO to identify emerging AI-specific threats. This combined approach gives you a prioritized, comprehensive threat model that aligns with both established security practices and cutting-edge AI challenges.
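
One way to picture the layering is as a merge of two threat lists, STRIDE findings first and MAESTRO findings on top, with duplicates collapsed. This is only a sketch under stated assumptions: `layer_threats` is a hypothetical helper, the `(element, threat)` tuples are an invented format, and neither framework prescribes this data structure.

```python
def layer_threats(stride_threats, maestro_threats):
    """Merge two threat lists, keeping STRIDE entries first and tagging each source.

    Each input is a list of (element, threat) tuples. Entries naming the same
    element and threat (case-insensitive) are collapsed into one record.
    """
    merged = {}
    for source, threats in (("STRIDE", stride_threats), ("MAESTRO", maestro_threats)):
        for element, threat in threats:
            key = (element, threat.lower())
            record = merged.setdefault(
                key, {"element": element, "threat": threat, "sources": []}
            )
            record["sources"].append(source)
    return list(merged.values())


if __name__ == "__main__":
    # Hypothetical findings for illustration only.
    stride = [("external API", "Spoofing"), ("agent", "Impersonation")]
    maestro = [("agent", "impersonation"), ("agent", "Indirect prompt injection")]
    for row in layer_threats(stride, maestro):
        print(row["element"], "|", row["threat"], "|", ", ".join(row["sources"]))
```

Threats flagged by both passes are natural candidates for the top of your priority list.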