MLflow vs. Weights & Biases: Core Features That Define AI Experiment Tracking

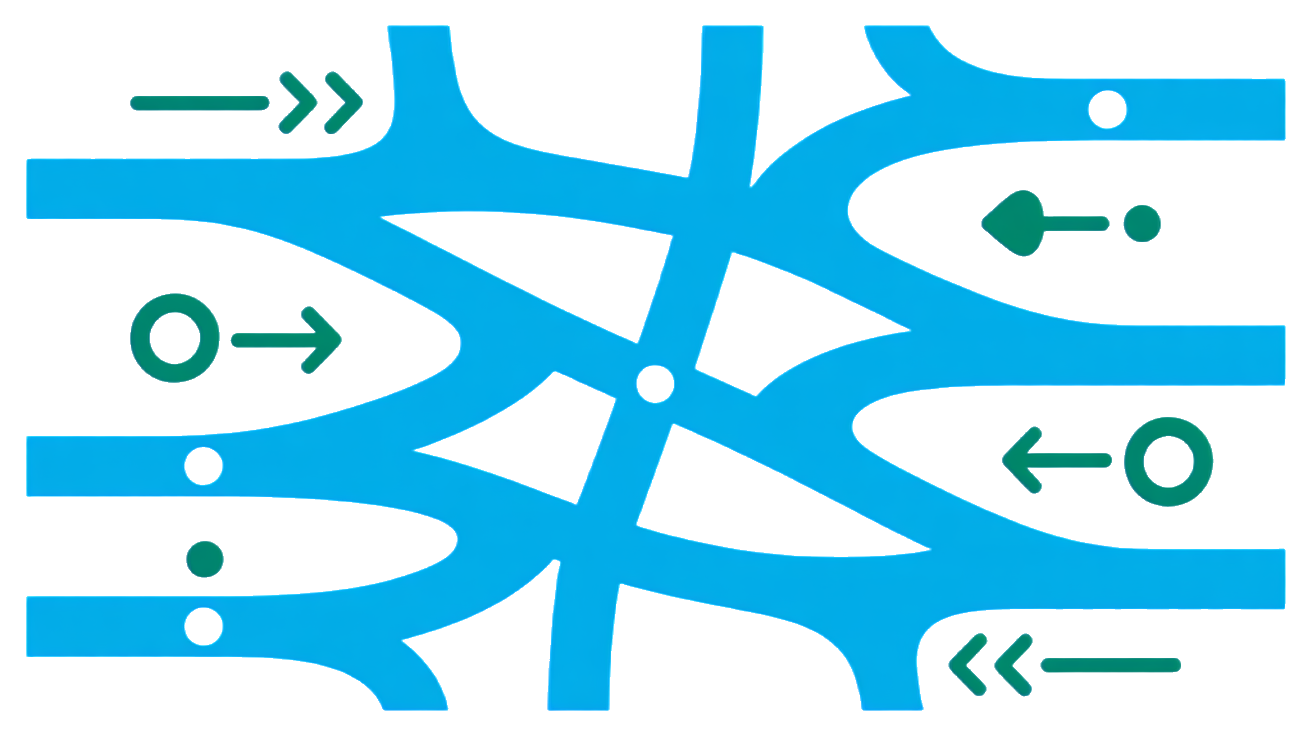

Imagine tracking hundreds of AI experiments without losing control over your data or drowning in setup complexity. That’s the daily reality for AI teams choosing between MLflow’s open-source robustness and Weights & Biases’ slick user experience.

MLflow’s Open-Source Power and Scalability

MLflow is the most widely used open-source platform for AI experiment tracking, with over 30 million monthly downloads and adoption by thousands of organizations worldwide. It handles large-scale projects with complex datasets well, and its logging API can record detailed metrics such as the mean, standard deviation, and number of unique values of a model’s predictions on a batch dataset. Its modular architecture covers experiment tracking, model packaging, and deployment, making it a one-stop shop for production-quality AI workflows. Because it is open source, you can customize and extend MLflow to fit your infrastructure and avoid vendor lock-in.
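As a minimal sketch of the batch-prediction metrics described above, the snippet below computes a mean, a population standard deviation, and a count of unique values for one batch of predictions, then logs them with mlflow.log_metrics. The function names and metric keys (prediction_mean, prediction_std, unique_predictions) are hypothetical choices for illustration, not an MLflow convention, and MLflow is imported inside the logging function so the summary logic can run without it installed.

```python
import statistics


def summarize_predictions(predictions):
    """Return summary statistics for one batch of numeric predictions.

    The metric names are illustrative, not an MLflow convention.
    """
    return {
        "prediction_mean": float(statistics.mean(predictions)),
        "prediction_std": float(statistics.pstdev(predictions)),  # population std dev
        "unique_predictions": float(len(set(predictions))),
    }


def log_batch_summary(predictions, step=None):
    """Log the batch summary to the active MLflow run (MLflow starts one if none is active)."""
    import mlflow  # imported here so summarize_predictions() works without MLflow

    mlflow.log_metrics(summarize_predictions(predictions), step=step)
```

Called inside a `with mlflow.start_run():` block, log_batch_summary attaches all three metrics to that run; passing step lets you log one summary per batch and chart how the prediction distribution shifts over time.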

W&B’s Rapid Setup and Visualization Strengths

Weights & Biases (W&B) shines in ease of use and visualization. You can start tracking experiments with just 5 lines of code, instantly logging hyperparameters, inputs, and outputs. Its cloud-based platform offers rich, interactive dashboards that make comparing model runs intuitive, even for non-experts. W&B’s focus on user experience accelerates onboarding and collaboration across teams, especially in fast-paced environments where quick insights matter more than deep customization.
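One way to log hyperparameters, inputs, and outputs together is sketched below: hyperparameters go into the run config passed to wandb.init, and input/output pairs are logged as a wandb.Table so they can be browsed in the dashboard. The helper names, column names, and default project name are hypothetical, and wandb is imported inside the logging function so the row-building logic can be checked without W&B installed.

```python
def build_prediction_rows(inputs, outputs):
    """Pair each model input with its output as [input, output] string rows."""
    if len(inputs) != len(outputs):
        raise ValueError("inputs and outputs must have the same length")
    return [[str(x), str(y)] for x, y in zip(inputs, outputs)]


def log_run_to_wandb(config, inputs, outputs, project="my-ai-project"):
    """Log hyperparameters plus an input/output table to a new W&B run."""
    import wandb  # imported here so build_prediction_rows() works without W&B

    run = wandb.init(project=project, config=config)  # hyperparameters
    table = wandb.Table(
        columns=["input", "output"],
        data=build_prediction_rows(inputs, outputs),
    )
    run.log({"predictions": table})  # inputs and outputs, viewable in the dashboard
    run.finish()  # mark the run complete and upload any remaining data
```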

Beyond Basics: What Each Tool Excels At

| Feature | MLflow | Weights & Biases (W&B) |
|---|---|---|
| Adoption (2025) | 57% of AI teams (Saucedo) | 8% of AI teams (Saucedo) |
| Setup Complexity | Moderate; requires infrastructure setup | Minimal; plug-and-play with 5 lines of code (Contrary Research) |
| Customization | High; open-source extensibility | Limited; focused on out-of-the-box features |
| Visualization | Basic; relies on integrations | Advanced; interactive dashboards |
| Scalability | Enterprise-ready; handles large datasets | Cloud-based; optimized for team collaboration |
| Integration | … | … |

57% of AI Teams Use MLflow in 2025, But W&B Leads in Innovation and Ease of Use

MLflow’s Explosive Growth and Open-Source Dominance

MLflow’s adoption surged to 57% of AI teams in 2025, up from 42% just a year earlier, cementing its position as the dominant open-source experiment tracking platform (Alejandro Saucedo). Its modular design and extensibility make it a natural fit for teams that want full control over their AI workflows without vendor lock-in. An active open-source community keeps extending MLflow’s capabilities, helping it scale to enterprise needs and integrate with diverse ML pipelines.

This widespread adoption isn’t just about numbers. It reflects a broader shift toward robust, customizable tooling that can handle complex experiments at scale. MLflow’s ability to log detailed metrics and package models for deployment makes it a cornerstone for production-ready AI systems. For teams prioritizing flexibility and infrastructure control, MLflow remains the clear choice.

W&B’s Market Leadership and User-Friendly Design

Weights & Biases is used by a smaller but significant 8% of AI teams in 2025, up slightly from 7% in 2024 (Alejandro Saucedo). Despite its smaller footprint, W&B was voted Market and Innovation Leader in the 2026 IT Brand Pulse AI Brand Leader survey (Weights & Biases Brand Leader). Its strength lies in ease of use and rapid onboarding: teams can start tracking experiments with minimal setup and get rich, interactive dashboards that make insights accessible to all stakeholders.

W&B’s cloud-native platform accelerates collaboration and iteration, especially in fast-moving environments. Its focus on user experience and visualization innovation keeps it competitive, even as MLflow dominates adoption. For teams valuing speed and simplicity, W&B is often the preferred tool.

The Decline of Spreadsheets in Experiment Tracking

Spreadsheets are dying out as a serious experiment tracking tool. Their usage dropped sharply, from 10% of AI teams in 2024 to just 3% in 2025 (Alejandro Saucedo). The reason is simple: manual tracking can’t keep up with the complexity and scale of modern AI projects. Spreadsheets lack automation, version control, and integration with your training pipelines, which leads to lost context, errors, and time wasted digging through rows of data.

If your team still relies on spreadsheets, you’re missing out on reliable, scalable experiment tracking that supports collaboration, reproducibility, and compliance. Moving to a platform like MLflow or Weights & Biases means experiments are logged automatically from your training code, with rich metadata, metrics, and artifacts. This shift is no longer optional; it is critical for keeping pace with growing AI maturity and governance demands.
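Moving off spreadsheets does not have to mean abandoning past results. The sketch below assumes a hypothetical CSV export with one experiment per row, where the columns named in METRIC_COLUMNS hold metrics and every other column is treated as a parameter. It parses the rows with Python’s csv module and replays each one as an MLflow run using mlflow.set_experiment, mlflow.log_params, and mlflow.log_metrics. The column names, experiment name, and source tag are illustrative assumptions.

```python
import csv
import io

# Hypothetical layout: these columns hold metrics; all others are parameters.
METRIC_COLUMNS = {"accuracy", "loss"}


def parse_spreadsheet(csv_text, metric_columns=METRIC_COLUMNS):
    """Split each CSV row into a (params, metrics) pair, skipping empty cells."""
    runs = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        params = {k: v for k, v in row.items() if k not in metric_columns and v}
        metrics = {k: float(v) for k, v in row.items() if k in metric_columns and v}
        runs.append((params, metrics))
    return runs


def backfill_to_mlflow(csv_text, experiment_name="spreadsheet-backfill"):
    """Replay every spreadsheet row as its own MLflow run."""
    import mlflow  # imported here so parse_spreadsheet() works without MLflow

    mlflow.set_experiment(experiment_name)
    for params, metrics in parse_spreadsheet(csv_text):
        with mlflow.start_run(tags={"source": "spreadsheet"}):
            mlflow.log_params(params)
            mlflow.log_metrics(metrics)
```

Keeping parsing separate from logging makes it easy to check the column mapping on a few rows before writing anything to the tracking server.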

Step-by-Step Migration Guide

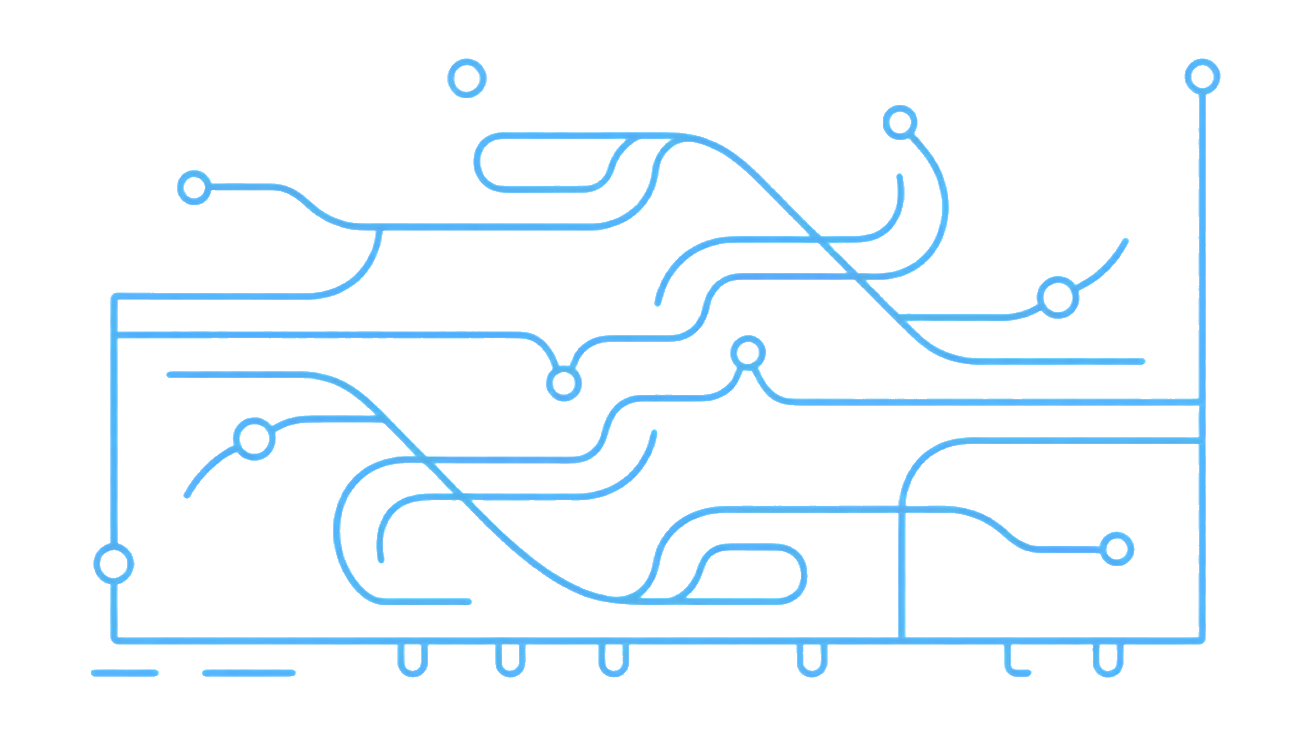

Start small. Pick a pilot project and integrate experiment tracking into your existing pipeline. First, install your chosen tool’s SDK: pip install mlflow for MLflow, or pip install wandb for W&B (followed by wandb login to authenticate). Next, modify your training script to log key parameters, metrics, and artifacts. Then run a few experiments and verify that the logs appear in the tracking UI; for a local MLflow setup, mlflow ui serves it at http://localhost:5000 by default.
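Here is a minimal sketch of that pilot step, assuming MLflow as the chosen tool. The training function takes its logging calls as arguments, so the same loop can report to MLflow (mlflow.log_param, mlflow.log_metric) or to simple fakes in a unit test, and run_pilot reads the finished run back with mlflow.get_run as a programmatic version of checking the tracking UI. The toy loss update and the function names are hypothetical.

```python
def train(learning_rate, epochs, log_param, log_metric):
    """Toy training loop that reports through injected logging callables."""
    log_param("learning_rate", learning_rate)
    loss = 1.0
    for epoch in range(epochs):
        loss *= 1 - learning_rate  # placeholder for one epoch of real training
        log_metric("loss", loss, step=epoch)
    return loss


def run_pilot():
    """Run the toy loop under MLflow, then read the run back to verify logging."""
    import mlflow  # imported here so train() can be tested with plain Python fakes

    with mlflow.start_run() as run:
        final_loss = train(0.1, 5, mlflow.log_param, mlflow.log_metric)
    # run.data.metrics holds the latest value logged for each metric key.
    logged = mlflow.get_run(run.info.run_id).data.metrics["loss"]
    print(f"final loss {final_loss:.4f}, logged loss {logged:.4f}")
```

Injecting the logging functions keeps the training code independent of any single tracker, which also makes it easier to trial MLflow and W&B side by side during the pilot.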

Gradually expand tracking to more projects and teams. Define standard metrics and tags to ensure consistency. Automate logging in CI/CD pipelines to capture every run. Finally, train your team on interpreting dashboards and using tracking data to optimize models. This phased approach minimizes disruption and builds confidence.
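Standard tags are easiest to enforce in code. The sketch below assumes a hypothetical team convention (REQUIRED_TAGS) and refuses to start an MLflow run unless every required tag has a non-empty value; mlflow.start_run accepts the tags dictionary directly. The tag names and helper functions are illustrative, not part of MLflow.

```python
# Hypothetical team convention: every tracked run must carry these tags.
REQUIRED_TAGS = ("team", "project", "dataset_version")


def check_tags(tags, required=REQUIRED_TAGS):
    """Return the required tag keys that are missing or empty, sorted by name."""
    return sorted(k for k in required if not tags.get(k))


def start_standard_run(tags, run_name=None):
    """Start an MLflow run only if all required tags are present."""
    import mlflow  # imported here so check_tags() works without MLflow

    missing = check_tags(tags)
    if missing:
        raise ValueError(f"missing required tags: {', '.join(missing)}")
    return mlflow.start_run(run_name=run_name, tags=tags)
```

Used as `with start_standard_run({"team": "vision", "project": "detector", "dataset_version": "v3"}):`, it behaves like a normal run. In a CI/CD job the helper works unchanged; setting the MLFLOW_TRACKING_URI environment variable on the runner points every run at a shared tracking server instead of the local default store.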

Code Snippets to Kickstart MLflow and W&B Tracking

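Here are minimal examples to get you started quickly.

MLflow example:

```python
import mlflow

# Everything logged inside this block is attached to one run.
with mlflow.start_run():
    mlflow.log_param("learning_rate", 0.01)  # hyperparameter, logged once per run

    # Your training code here

    mlflow.log_metric("accuracy", 0.92)  # placeholder value from your evaluation step
```

Weights & Biases example:

```python
import wandb

# Start a run in the given project; config stores the run's hyperparameters.
wandb.init(project="my-ai-project", config={"learning_rate": 0.01})

# Your training code here

wandb.log({"accuracy": 0.92})  # placeholder value from your evaluation step
wandb.finish()  # mark the run as finished and upload any remaining data
```

W&B’s setup takes just 5 lines of code to enable comprehensive tracking, comparison, and visualization (Report: Weights & Biases Business Breakdown & Founding Story).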

Frequently Asked Questions

What are the main benefits of switching from spreadsheets to MLflow or W&B?

Spreadsheets can quickly become a mess when tracking AI experiments. MLflow and W&B offer structured, automated tracking that eliminates manual errors and saves time. They provide rich visualizations, version control, and easy comparison across runs, which spreadsheets simply cannot match. Plus, these tools integrate directly with your code and infrastructure, making experiment management scalable and repeatable.

How much coding effort is required to start tracking experiments with W&B?

Getting started with W&B is surprisingly lightweight. You typically need to add just a few lines of code to your existing training scripts. This minimal setup unlocks powerful features like real-time logging, dashboarding, and collaboration. The learning curve is gentle, especially if you already use Python, so you can focus on your models instead of building tracking from scratch.

Can MLflow handle large-scale production AI model monitoring?

MLflow is designed with scalability in mind and can support production-grade model lifecycle management. It tracks experiments, manages model versions, and integrates with deployment pipelines. While it excels in experiment tracking and model registry, for continuous monitoring in production environments, you might need to combine it with specialized monitoring tools tailored to your infrastructure and performance needs.