Why 87% of AI Errors Are Hallucinations, and Why Observability Is Your Best Defense

Imagine your AI system confidently delivering completely wrong answers. This isn’t a rare glitch. It’s the dominant source of critical AI failures today: hallucinations. These are outputs where the AI invents facts, fabricates data, or confidently asserts falsehoods. The problem? They slip past manual checks because they look plausible and often require domain expertise to spot.

Manual detection simply cannot keep up with the speed and scale of modern AI deployments. Teams drown in false positives or miss subtle hallucinations lurking in complex outputs. That’s where observability steps in. By integrating real-time monitoring, tracing, and alerting directly into AI pipelines, observability tools offer a scalable, automated defense. They don’t just flag errors after the fact. They provide continuous insight into the AI’s decision-making process, enabling early detection of hallucinations before they cause damage. Observability transforms AI reliability from reactive firefighting into proactive risk management, making your AI outputs trustworthy and auditable at scale.

What Exactly Are AI Hallucinations? Defining the Problem Before Detection

AI hallucinations are not just simple mistakes. They are confidently wrong outputs where the AI generates information that is fabricated, misleading, or outright false. Unlike typical bugs or errors, hallucinations appear plausible and often mimic human-like reasoning or factual knowledge. This makes them especially dangerous because they can easily slip past casual review, fooling users and even domain experts. The AI isn’t uncertain or hesitant; it asserts falsehoods with conviction.

Traditional error detection methods struggle here. Rule-based checks or keyword filters catch obvious mistakes but fail against subtle fabrications embedded in complex responses. Manual review is slow and inconsistent, unable to scale with the volume and velocity of AI-generated content today. Without deeper insight into the AI’s internal reasoning or data provenance, these hallucinations remain invisible until they cause real harm. That’s why defining hallucinations clearly is crucial: they are not just errors but deceptive outputs that evade conventional detection, demanding a new approach rooted in continuous observability and automated analysis. This sets the stage for why observability is becoming essential in AI pipelines. For a deeper dive into how teams are overcoming these barriers, see AI Observability: How 1,340 Teams Overcame Barriers.

Automated Observability Techniques That Catch AI Hallucinations in Real Time

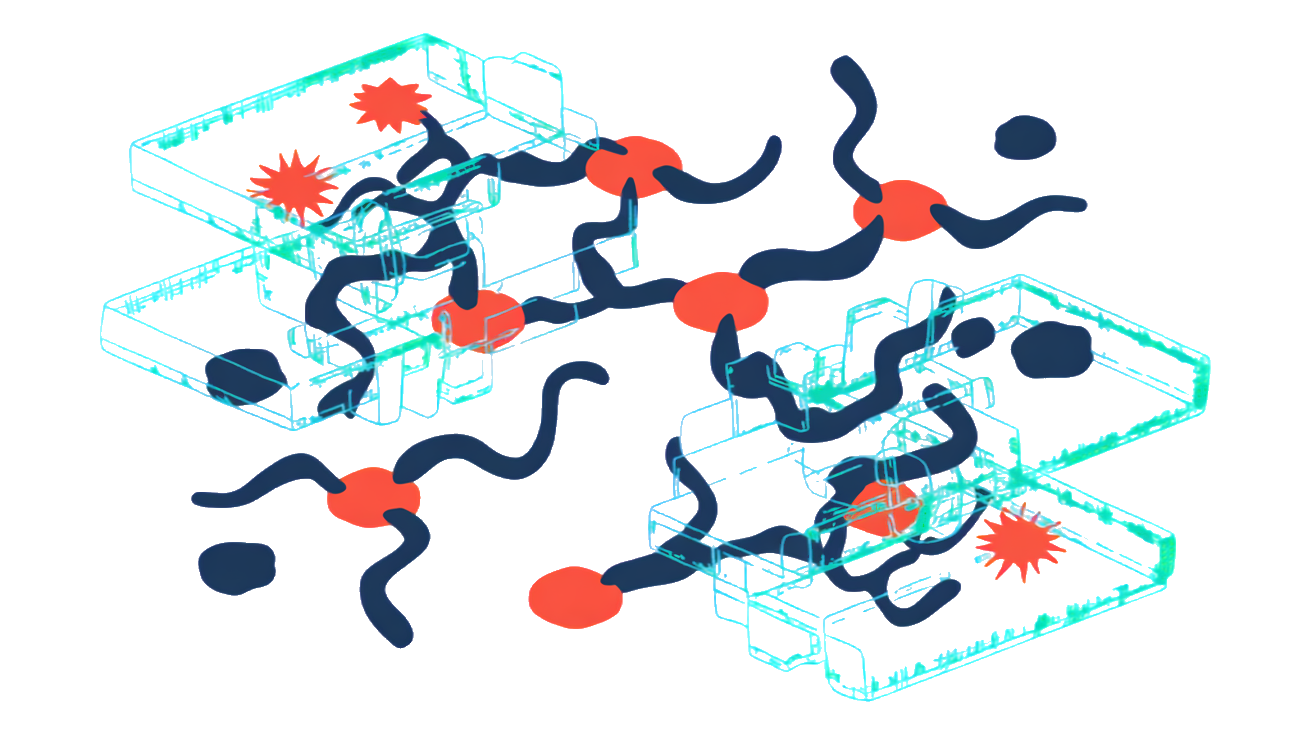

Detecting AI hallucinations as they happen requires a mix of observability techniques, each with unique strengths and trade-offs. Some methods focus on the AI’s outputs directly, while others analyze internal signals or statistical patterns. The right combination depends on your model, use case, and risk tolerance.

Here’s a quick comparison of four common automated observability approaches for spotting hallucinations in real time:

| Technique | What It Monitors | Strengths | Trade-Offs |
|---|---|---|---|
| Output Monitoring | Generated text or responses | Simple to implement, directly tied to output quality | May miss subtle or context-dependent errors |
| Embedding Similarity | Semantic closeness of outputs | Captures meaning shifts, good for detecting off-topic content | Requires reference embeddings, sensitive to embedding quality |
| Uncertainty Quantification | Model confidence scores | Provides probabilistic signals of doubt, useful for risk-aware decisions | Calibration can be tricky, not always reliable alone |
| Anomaly Detection | Statistical deviations in features or outputs | Detects unusual patterns beyond predefined rules | Needs large baseline data, can generate false positives |

No single method catches every hallucination. Combining these observability techniques creates a more robust, automated defense. You get real-time alerts when outputs deviate from expected norms, enabling faster intervention and higher trust in AI systems.
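As a rough illustration of what combining techniques can look like, the sketch below folds three per-response signals into one weighted risk score. The `Signals` fields, the weights, and the 0.6 alert threshold are all assumptions made for this example, not values taken from any particular tool; in practice they would be tuned against labeled examples from your own system.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Per-response signals, each already scaled to the range [0, 1]."""
    confidence: float      # model-reported confidence (higher is better)
    ref_similarity: float  # similarity to a trusted reference (higher is better)
    anomaly: float         # anomaly score (higher is worse)


# Illustrative weights only; they sum to 1 so the score stays in [0, 1].
WEIGHTS = {"confidence": 0.4, "ref_similarity": 0.4, "anomaly": 0.2}


def risk_score(s: Signals) -> float:
    """Weighted hallucination risk in [0, 1]; higher means more suspicious."""
    return (
        WEIGHTS["confidence"] * (1.0 - s.confidence)
        + WEIGHTS["ref_similarity"] * (1.0 - s.ref_similarity)
        + WEIGHTS["anomaly"] * s.anomaly
    )


def should_alert(s: Signals, threshold: float = 0.6) -> bool:
    """Return True when the combined risk reaches the alert threshold."""
    return risk_score(s) >= threshold
```

A weighted sum is only one simple way to combine signals. Its appeal is that a single weak signal, such as a slightly low confidence score, does not trigger an alert unless other signals agree.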

5 Concrete Metrics and Signals to Spot AI Hallucinations Automatically

- Confidence Scores: Many models expose a confidence or probability signal with each prediction; for language models this is typically token-level log-probabilities. Sudden drops or abnormally low confidence can signal hallucinations. Confidence alone isn’t foolproof, though, so combine it with other signals to avoid false alarms.
- Semantic Drift: Track how the meaning of generated text shifts over time or across related queries. If the AI veers off-topic or contradicts earlier statements, that’s a red flag. Embedding-based similarity measures help quantify this drift automatically.
- Response Consistency: Run the same prompt, or slightly rephrased versions of it, through the model several times. Inconsistent answers often indicate hallucinations. This metric uses the model’s own variability as a diagnostic tool.
- External Knowledge Cross-Checks: Automatically verify AI outputs against trusted databases or APIs. Mismatches or unverifiable claims suggest hallucinations. This requires integrating external data sources into your observability pipeline.
- Latency Spikes: Unexpected increases in response time can correlate with complex or uncertain reasoning steps where hallucinations are more likely. Monitoring latency alongside output quality adds another layer of detection.
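The semantic drift signal can be sketched with plain cosine similarity between two embedding vectors. This is a minimal example under stated assumptions: the vectors are presumed to come from whatever embedding model you already use, and both the `has_drifted` helper and its 0.8 similarity floor are illustrative choices rather than recommended values.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an all-zero vector as dissimilar to everything
    return dot / (norm_a * norm_b)


def has_drifted(reference: Sequence[float], candidate: Sequence[float],
                min_similarity: float = 0.8) -> bool:
    """Flag the candidate when its similarity to the reference is below the floor."""
    return cosine_similarity(reference, candidate) < min_similarity
```

Here `reference` might be the embedding of an earlier answer in the same conversation or of a trusted source passage, and `candidate` the embedding of the newest output.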

Together, these signals form a practical toolkit. They enable automated, real-time detection of hallucinations, reducing risk and improving trust without manual review bottlenecks.
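Response consistency is one of the easier signals to prototype. The sketch below samples the same prompt several times and averages the pairwise similarity of the answers. It assumes a `generate` callable that you supply, and it uses token-set (Jaccard) overlap as a crude, dependency-free stand-in for the embedding-based similarity a production system would more likely use.

```python
from itertools import combinations
from typing import Callable, List


def jaccard(a: str, b: str) -> float:
    """Token-set overlap between two strings, in [0, 1]."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta and not tb:
        return 1.0  # two empty answers are trivially identical
    return len(ta & tb) / len(ta | tb)


def consistency_score(generate: Callable[[str], str], prompt: str, n: int = 3) -> float:
    """Mean pairwise similarity across n samples of the same prompt."""
    if n < 2:
        raise ValueError("need at least two samples to compare")
    answers: List[str] = [generate(prompt) for _ in range(n)]
    pairs = list(combinations(answers, 2))
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)
```

A score near 1 means the samples largely agree; a low score suggests the model is improvising and the answer deserves a closer look. Note that each check costs `n` model calls, so it is usually reserved for high-risk prompts or run on a sample of traffic.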

Embedding Observability in AI Pipelines: Code Snippets and Workflow Examples

Integrating observability hooks directly into your AI inference pipeline is the key to catching hallucinations as they happen. The idea is simple: instrument every step where the model generates or processes output, then feed those signals into monitoring tools that trigger alerts when anomalies arise. This creates a continuous feedback loop that flags potential hallucinations without slowing down your pipeline.

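Here’s a minimal example in Python using a hypothetical observability SDK. It wraps the inference call, records input size, latency, and the output’s confidence score, then sends an alert if confidence falls below a threshold or latency rises above one. It assumes the model’s output object exposes `confidence` and `text` attributes: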

    import time

    # Hypothetical SDK: monitor_metric and send_alert stand in for your monitoring client.
    from observability_sdk import monitor_metric, send_alert

    CONFIDENCE_FLOOR = 0.5   # alert when confidence falls below this value
    LATENCY_CEILING_S = 2.0  # alert when latency exceeds this many seconds

    def infer_with_observability(model, input_data):
        monitor_metric("input_size", len(input_data))

        # perf_counter is monotonic, so the measurement ignores system clock changes.
        start_time = time.perf_counter()
        output = model.generate(input_data)
        latency = time.perf_counter() - start_time

        monitor_metric("latency", latency)
        monitor_metric("output_confidence", output.confidence)

        if output.confidence < CONFIDENCE_FLOOR or latency > LATENCY_CEILING_S:
            send_alert("Potential hallucination detected", details=output.text)

        return output

In real-world workflows, these hooks integrate with orchestration platforms or CI/CD pipelines. Observability data can feed dashboards showing trends over time or trigger automated rollback of suspect model versions. The key is embedding these checks so tightly that hallucination detection becomes a native part of your AI lifecycle, not an afterthought.
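To make the rollback idea concrete, here is a minimal sketch of a gate that watches the recent alert rate for one model version. The `RollbackGate` class, its window size, and its rate threshold are hypothetical; the actual rollback step depends entirely on your deployment tooling and is left out.

```python
from collections import deque


class RollbackGate:
    """Tracks recent alert flags and signals when the alert rate is too high."""

    def __init__(self, window: int = 100, max_alert_rate: float = 0.2):
        self.flags = deque(maxlen=window)  # oldest entries fall off automatically
        self.max_alert_rate = max_alert_rate

    def record(self, alerted: bool) -> bool:
        """Record one inference; return True when a rollback looks warranted."""
        self.flags.append(alerted)
        if len(self.flags) < self.flags.maxlen:
            return False  # not enough data yet to judge this model version
        return sum(self.flags) / len(self.flags) > self.max_alert_rate
```

Waiting until the window is full avoids rolling back on the first unlucky response. The trade-off is a slower reaction right after a deploy, which a smaller window can offset at the cost of noisier decisions.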

Frequently Asked Questions

How does observability reduce hallucination risks in AI?

Observability provides continuous insight into your AI system’s internal states and outputs. By monitoring key signals and metrics in real time, it helps detect unusual patterns that often precede or indicate hallucinations. This early warning lets you intervene faster, reducing the risk of faulty or misleading AI responses slipping into production.

What tools support automated hallucination detection?

There isn’t a one-size-fits-all tool yet, but many observability platforms can integrate with AI pipelines to collect and analyze relevant data. These include monitoring frameworks that track model confidence, output consistency, and input anomalies. Custom hooks and alerting systems built on top of orchestration or CI/CD tools are common ways to automate detection workflows.

Can observability catch all types of AI hallucinations?

No. While observability improves detection, hallucinations vary widely in form and subtlety. Some may evade automated checks, especially if they look plausible or stem from complex reasoning errors. Observability is a powerful layer of defense but works best combined with human review, robust testing, and ongoing model refinement.