Why Real-Time AI Monitoring Dashboards Are Non-Negotiable in 2026

Imagine missing a critical AI failure that costs millions in lost revenue before anyone notices. In 2026, that risk is unacceptable. Real-time AI monitoring dashboards surface failures as they happen, giving you the chance to act before damage spreads. They don’t just alert you; they also support continuous learning by feeding fresh data back into models, keeping them sharp and aligned with evolving conditions.

These dashboards are the backbone of automated decision-making at scale. By combining telemetry data with generative AI-powered visualizations, they turn raw metrics into actionable insights. Workflows can then optimize themselves, and compliance with regulations can be maintained without manual intervention. According to IBM, AI-driven observability tools in 2026 will automate decisions and optimize workflows using machine learning insights, making AI systems smarter and more reliable over time.

Meanwhile, Stanford experts predict that high-frequency AI economic dashboards will track AI’s real-world impact on productivity and workforce dynamics in real time, drawing on diverse data sources such as payroll and platform usage. That level of granularity demands dashboards that operate live, not in batches. Without them, you’re flying blind in a fast-moving AI landscape. Real-time monitoring isn’t a luxury anymore; it’s the foundation for maximizing AI’s business impact and staying competitive.

- Stanford AI Experts Predict What Will Happen in 2026

- Why Real-Time Data Will Define 2025 - Striim

- Observability Trends 2026 - IBM

Top AI Observability Tools for Real-Time Model Performance Tracking in 2026

Picking the right platform to monitor your AI models in real time is critical. Two leaders stand out: Arize AI and Domo. Both offer live dashboards, but their strengths differ in focus and integration style.

| Feature | Arize AI | Domo |
|---|---|---|
| Real-Time Dashboards | Yes, with detailed model prediction tracking | Yes, cloud-based with multi-source KPI monitoring |
| Drift Detection | Built-in, tracks data and concept drift | Available, integrated with AI-powered alerts |
| AI-Powered Alerts | Yes, tailored to model performance anomalies | Yes, monitors KPIs and triggers alerts in near real time |
| Data Source Integration | Focused on ML pipelines and model telemetry | Broad, supports diverse business data sources |
| User Interface | Designed for ML engineers and data scientists | Business-friendly, customizable for executives |
| Use Case Focus | Model observability and troubleshooting | Enterprise-wide analytics and decision support |

Arize AI excels at model-centric observability, giving engineers granular insights into prediction quality and drift patterns. It’s built specifically for AI teams who need to diagnose and fix issues fast. Domo, on the other hand, shines in business-wide monitoring, blending AI alerts with operational KPIs across departments. Its cloud platform suits organizations wanting a unified view of AI impact alongside other metrics.

Both tools use AI to surface anomalies and reduce alert fatigue, but your choice depends on whether you prioritize deep model diagnostics or broad enterprise visibility. For a closer look at how teams overcome AI observability challenges, see “AI Observability: How 1,340 Teams Overcame Barriers.”

- The 17 Best AI Observability Tools In December 2025 - Monte Carlo

- 13 Best AI Analytics Tools For Real-Time Data I Tested in 2025

5 Essential Features Your Real-Time AI Monitoring Dashboard Must Have

-

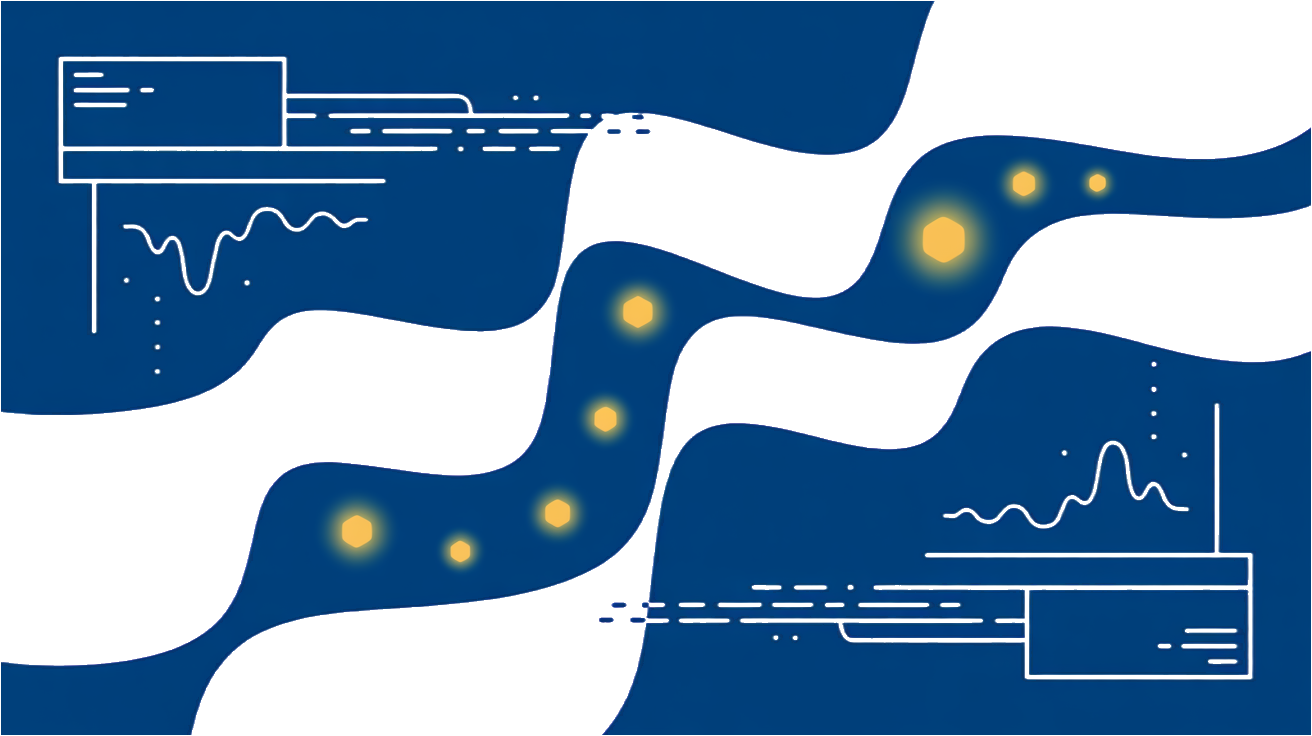

Drift Detection with Instant Alerts

Model drift is the silent killer of AI reliability. Your dashboard must track shifts in input data and prediction distributions in real time. When drift crosses critical thresholds, instant alerts should trigger so your team can act before performance tanks. Tools like Arize AI excel here, offering live dashboards that visualize drift alongside prediction accuracy and data flow metrics Monte Carlo. -

Performance Metrics Tailored to Business KPIs

Raw accuracy numbers don’t cut it. Your dashboard needs customizable metrics that align with your business goals, think conversion rates, false positive costs, or customer churn impact. This ensures your AI’s health is measured in terms that matter to stakeholders, not just data scientists. -

Generative AI-Powered Data Visualization

Static charts are yesterday’s news. The next-gen dashboards integrate generative AI to create dynamic, context-aware visualizations. This means your dashboard can automatically surface anomalies, suggest root causes, or generate narrative summaries that make insights actionable without manual digging IBM. -

Telemetry-Driven Automated Decision Support

Real-time telemetry data should feed automated workflows that recommend or even trigger remediation steps. Whether it’s retraining a model or adjusting thresholds, your dashboard should reduce manual firefighting by embedding AI-driven observability that optimizes itself continuously IBM. -

Seamless Integration with Data Pipelines and Model Repositories

Your dashboard must connect effortlessly to your existing data infrastructure and model registries. This ensures end-to-end visibility from raw data ingestion through model deployment and feedback loops. Without this, you risk blind spots that undermine your monitoring efforts.
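To make the drift-detection feature more concrete, here is a minimal sketch of one possible drift score, the Population Stability Index (PSI). PSI is a choice made for this illustration, not something the tools above are confirmed to use, and the function name `psi`, the bin count, and the sample data are all assumptions. The idea is to bucket a baseline sample, bucket the live sample with the same edges, and compare the two sets of bucket shares.

```python
import math
from typing import Sequence


def psi(baseline: Sequence[float], live: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a live sample."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range live values into the first or last bucket.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = bucket_shares(baseline)
    actual = bucket_shares(live)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Hypothetical feature values: a baseline and the same data shifted right by 3.
baseline = [i / 100 for i in range(1000)]
shifted = [v + 3 for v in baseline]

print(psi(baseline, baseline))  # 0.0 for identical samples
print(psi(baseline, shifted))   # much larger for the shifted sample
```

A commonly quoted rule of thumb treats PSI values above roughly 0.25 as a significant shift, but the right alert threshold depends on the feature and should be tuned against your own data.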

These features turn your dashboard from a passive display into a strategic tool that keeps your AI models reliable, performant, and aligned with business priorities.

Implementing Real-Time AI Dashboards: Code Snippet and Best Practices

Getting your real-time AI dashboard off the ground means stitching together streaming data, model metrics, and alerting mechanisms into a seamless workflow. Start by ingesting live data streams using a lightweight event processing framework or message broker. This feeds directly into your model inference pipeline, which outputs performance metrics like latency, accuracy, or drift scores in real time. These metrics then flow into a time-series database or monitoring backend that your dashboard queries continuously.

Here’s a minimal Python example using a streaming framework and a simple alert trigger:

```python
# Placeholder modules: swap in your actual streaming client and metrics backend.
from streaming_framework import StreamConsumer
from monitoring_backend import MetricsStore, AlertManager

metrics_store = MetricsStore()
alert_manager = AlertManager(threshold=0.1)  # alert if drift > 0.1


def process_event(event):
    # `model` and `calculate_drift` are assumed to be defined elsewhere in your service.
    prediction = model.predict(event.data)
    drift_score = calculate_drift(event.data, prediction)
    # Record every score so the dashboard can plot the trend, not just the alerts.
    metrics_store.record('drift_score', drift_score)
    if drift_score > alert_manager.threshold:
        alert_manager.send_alert(f'Drift alert: {drift_score}')


consumer = StreamConsumer(topic='model_predictions', callback=process_event)
consumer.start()
```

This snippet highlights continuous metric recording and threshold-based alerting. In production, you’ll want to add batching and error handling, and integrate with your existing logging and incident management tools.

A few best practices to keep your dashboard effective:

- Avoid data lag by optimizing your streaming pipeline and database writes.

- Normalize metrics to compare across models or versions easily.

- Set realistic alert thresholds to prevent alert fatigue.

- Visualize trends, not just snapshots, so you catch gradual drifts early.

- Test your alerting logic with synthetic data to ensure reliability before going live.
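Two of the practices above, realistic thresholds and testing with synthetic data, can be sketched together. The example below is an illustration under assumptions: the class name `DebouncedAlert`, the `patience` parameter, and the sample series are hypothetical and not part of any particular monitoring product. It fires only after several consecutive readings exceed the threshold, so a single noisy spike does not page anyone.

```python
from dataclasses import dataclass


@dataclass
class DebouncedAlert:
    """Fire only after `patience` consecutive readings exceed `threshold`."""
    threshold: float
    patience: int = 3
    _streak: int = 0

    def observe(self, value: float) -> bool:
        # Reset the streak as soon as a reading falls back to or under the threshold.
        self._streak = self._streak + 1 if value > self.threshold else 0
        # Fire exactly once, at the moment the streak reaches `patience`.
        return self._streak == self.patience


def alert_indices(series, threshold=0.1, patience=3):
    """Return the positions in `series` at which an alert would fire."""
    alert = DebouncedAlert(threshold, patience)
    return [i for i, v in enumerate(series) if alert.observe(v)]


# Synthetic drift scores: one isolated spike, then a sustained climb.
spiky = [0.02, 0.50, 0.03, 0.02, 0.04]
sustained = [0.02, 0.03, 0.12, 0.15, 0.18, 0.20, 0.22]

print(alert_indices(spiky))      # [] (the lone spike is ignored)
print(alert_indices(sustained))  # [4] (third consecutive breach)
```

Running synthetic series like these through the alert logic before going live is a cheap way to check that it stays quiet on noise and still fires on a real shift. The trade-off is a delay of `patience` readings before the first alert.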

Building this pipeline is iterative. Start simple, then layer in complexity as your monitoring needs evolve.

Frequently Asked Questions

How do real-time dashboards detect AI model drift effectively?

Real-time dashboards catch model drift by continuously tracking key performance metrics and input data distributions. They highlight deviations from historical baselines or expected ranges, signaling when the model’s behavior changes. By visualizing trends over time rather than isolated snapshots, these dashboards help you spot gradual shifts before they impact outcomes. Alerts based on these patterns ensure you act fast, keeping your AI aligned with reality.
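As a rough illustration of comparing trends against a historical baseline, the sketch below tracks the rolling mean of a metric and expresses its distance from the baseline as a z-score. The class name `BaselineMonitor`, the window size, and the sample readings are assumptions made for this example. It also assumes readings are roughly independent and the baseline is not constant.

```python
from collections import deque
from statistics import mean, stdev


class BaselineMonitor:
    """Track how far the rolling mean of a metric sits from a historical baseline."""

    def __init__(self, baseline, window=5):
        self.mu = mean(baseline)
        self.sigma = stdev(baseline)  # assumes the baseline is not constant
        self.recent = deque(maxlen=window)

    def update(self, value):
        """Return the z-score of the rolling mean, or None until the window fills."""
        self.recent.append(value)
        if len(self.recent) < self.recent.maxlen:
            return None
        # Standard error of a mean over n independent readings: sigma / sqrt(n).
        stderr = self.sigma / len(self.recent) ** 0.5
        return (mean(self.recent) - self.mu) / stderr


# Hypothetical metric readings: a stable history, then a slow upward creep.
history = [0.50 + 0.01 * ((i % 5) - 2) for i in range(50)]
monitor = BaselineMonitor(history, window=5)
stable_z = [monitor.update(v) for v in [0.50] * 5][-1]
creeping_z = [monitor.update(v) for v in [0.51, 0.52, 0.53, 0.54, 0.55]][-1]
```

Each reading in the creeping series is only slightly above the last, yet the rolling mean ends up several standard errors from the baseline. A conventional cutoff such as an absolute z-score of 3 would flag it, though the right limit depends on how noisy the metric is.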

What metrics should I prioritize for AI model monitoring?

Focus on metrics that reflect both model accuracy and input data quality. Common choices include prediction confidence, error rates, and feature distribution statistics. Also track latency and resource usage to ensure performance stays smooth. Prioritizing metrics depends on your business goals, whether it’s minimizing false positives, maintaining fairness, or optimizing response times. The right mix gives you a clear picture of model health and operational impact.
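A small sketch of how these metrics might be computed from logged predictions follows. The `Record` fields, the `summarize` function, and the per-false-positive cost of 25.0 are hypothetical and chosen only for illustration. Real systems would normally pull these values from a metrics store once ground-truth labels arrive.

```python
import math
from dataclasses import dataclass


@dataclass
class Record:
    predicted: bool    # model output (True = positive class)
    actual: bool       # ground-truth label, once it is known
    confidence: float  # model's confidence in its prediction
    latency_ms: float  # time taken to serve the prediction


def summarize(records, false_positive_cost=1.0):
    n = len(records)
    errors = sum(r.predicted != r.actual for r in records)
    false_pos = sum(r.predicted and not r.actual for r in records)
    latencies = sorted(r.latency_ms for r in records)
    # Nearest-rank 95th percentile: smallest reading with at least 95% at or below it.
    p95 = latencies[math.ceil(0.95 * n) - 1]
    return {
        "error_rate": errors / n,
        "false_positive_cost": false_pos * false_positive_cost,
        "mean_confidence": sum(r.confidence for r in records) / n,
        "p95_latency_ms": p95,
    }


records = [
    Record(True, True, 0.90, 40),
    Record(True, False, 0.60, 55),
    Record(False, False, 0.80, 35),
    Record(False, True, 0.55, 120),
]
summary = summarize(records, false_positive_cost=25.0)
```

Weighting false positives by a cost is one way to express model health in business terms. The same pattern extends to other outcomes, such as missed detections or fairness gaps.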

Can generative AI improve dashboard visualization and alerts?

Generative AI can enhance dashboards by automatically summarizing complex data patterns and generating natural language explanations for anomalies. It helps create dynamic visualizations tailored to user needs and can suggest alert thresholds based on learned patterns. While promising, it’s best used as a complement to human expertise, not a replacement. Generative AI can reduce noise and surface insights faster, but you still need solid monitoring foundations to trust its outputs.