Introduction: The Importance of Updating AI Hallucination Risk Assessments

AI hallucination, the generation of incorrect or fabricated information by language models, remains a critical concern for enterprises deploying these systems. Hallucinations can lead to flawed decision-making, compliance violations, and reputational damage. However, most risk assessments still rely on hallucination rates reported in 2023, which significantly overstate the current risk landscape. These outdated figures fail to reflect improvements in model architectures, training data quality, and domain-specific fine-tuning that have sharply reduced hallucination frequency. Continuing to use the 2023 benchmarks exaggerates perceived risk and may lead to unnecessary mitigation costs or overly conservative AI adoption strategies.

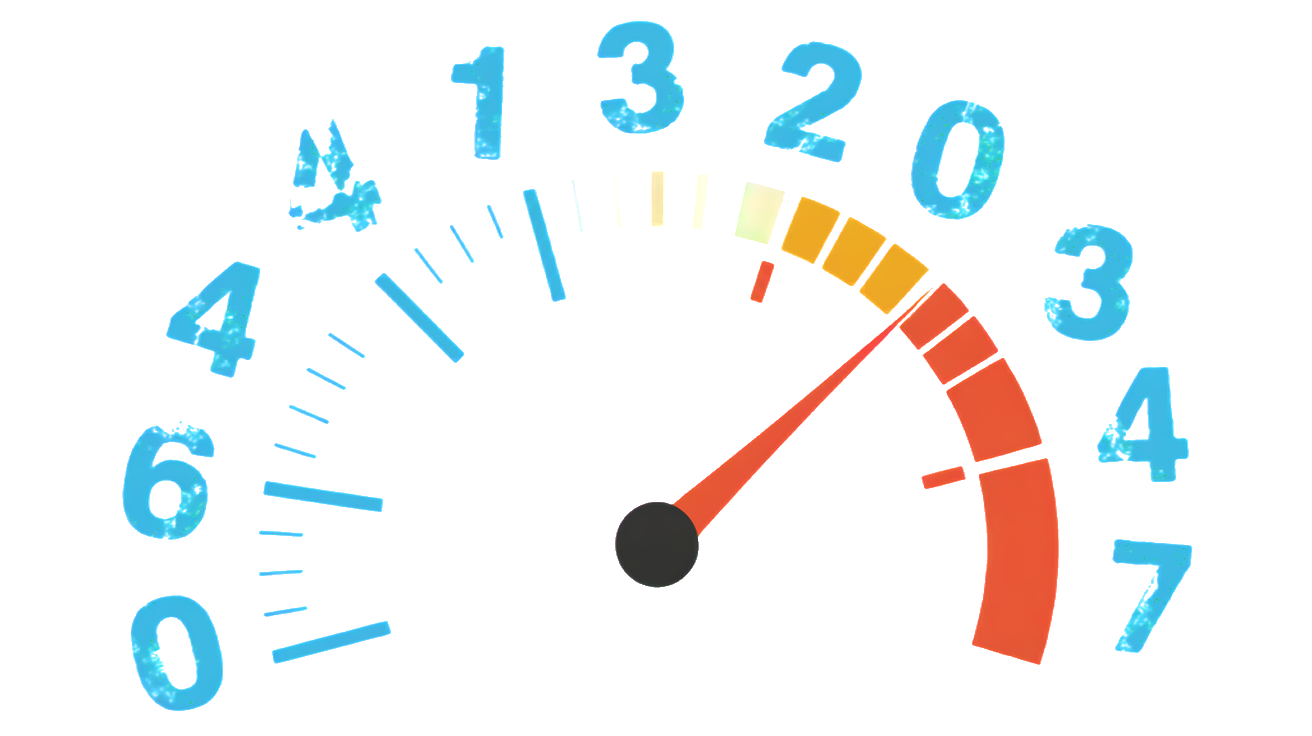

Updating hallucination risk assessments with 2026 data is essential for accurate enterprise decision-making. Current benchmarks show hallucination rates have dropped from approximately 20% in 2023 to under 4% across many domains, a reduction that demands recalibration of risk models and compliance frameworks. Enterprises that fail to incorporate these updated metrics risk misallocating resources or overlooking more relevant risks such as interpretability and auditability, which have gained prominence as hallucination rates have fallen. For guidance on selecting models aligned with these new realities, see the 2026 AI Model Selection Matrix. Additionally, the approach described in LLM Interpretability as an Audit Tool can complement hallucination risk updates by improving transparency and trustworthiness. The next section explains why legacy 2023 rates inflate enterprise risk models.

Why Legacy 2023 Hallucination Rates Inflate Enterprise Risk Models

Most enterprise risk assessments continue to rely on hallucination rates from 2023, typically around 20%, despite substantial improvements in model accuracy and domain-specific tuning since then. These legacy assumptions do not account for the rapid evolution in model architectures and training methodologies that have driven hallucination rates down to under 4% in many applications by 2026, as detailed in Hallucination Rates Dropped From 20% to Under 4%. Using outdated figures inflates the perceived risk of deploying AI systems, leading to overly cautious strategies that can delay innovation and inflate operational costs. Risk models anchored to 2023 data fail to reflect the current state of AI reliability, particularly in specialized domains where fine-tuning has sharply reduced hallucinations.
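The gap between a legacy assumption and a current one can be expressed as a simple ratio. The sketch below is illustrative only: the function names are hypothetical, and the two rates are the 20% and 4% figures quoted above rather than measurements of any particular deployment.

```python
def overestimation_factor(assumed_rate: float, current_rate: float) -> float:
    """Return how many times larger the assumed rate is than the current rate."""
    if not 0.0 <= assumed_rate <= 1.0:
        raise ValueError("assumed_rate must be a fraction between 0 and 1")
    if not 0.0 < current_rate <= 1.0:
        raise ValueError("current_rate must be a fraction in (0, 1]")
    return assumed_rate / current_rate


def expected_hallucinations(rate: float, outputs: int) -> float:
    """Expected number of hallucinated outputs in a batch of the given size."""
    return rate * outputs


LEGACY_RATE = 0.20   # 2023 assumption quoted in the text
CURRENT_RATE = 0.04  # upper end of the "under 4%" figure quoted in the text

if __name__ == "__main__":
    factor = overestimation_factor(LEGACY_RATE, CURRENT_RATE)
    print(f"Overestimation factor: {factor:.1f}x")
    # Per 1,000 outputs, the two assumptions imply very different review loads.
    print(f"Legacy estimate:  {expected_hallucinations(LEGACY_RATE, 1000):.0f} per 1,000")
    print(f"Current estimate: {expected_hallucinations(CURRENT_RATE, 1000):.0f} per 1,000")
```

A risk model that plans for the legacy figure budgets for roughly five times as many hallucinated outputs as the current figure implies.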

Overestimating hallucination risk by as much as five times distorts compliance frameworks and deployment decisions. Enterprises may allocate excessive resources to mitigation measures, such as redundant human review or expensive monitoring tools, that offer diminishing returns given the lower actual hallucination rates. This misallocation can also divert attention from emerging risks like interpretability and auditability, which have become critical for regulatory compliance and trust, as discussed in LLM Interpretability as an Audit Tool. Furthermore, inflated risk perceptions can bias model selection, pushing organizations away from efficient, well-calibrated models outlined in the 2026 AI Model Selection Matrix. The following section explores how hallucination rates have evolved across domains and why updating benchmarks is essential for accurate risk management.

2026 Hallucination Benchmarks: What the Latest Data Reveals

Top Performing Models and Their Hallucination Rates

Recent benchmarks show a sharp decline in hallucination rates among leading AI models. The antgroup/finix_s1_32b model leads with a 1.8% hallucination rate on the Vectara HHEM-2.3 leaderboard, reflecting significant architectural and training improvements (Vectara Leaderboard). GPT-4.1 follows at 5.6%, while Gemini 2.5 Flash and Claude Sonnet 4 report 7.8% and 10.3%, respectively. All four figures sit well below the 20% baseline commonly cited in 2023 risk assessments, although only the top entry falls under 4%; this is consistent with the downward trend detailed in Hallucination Rates Dropped From 20% to Under 4%. This data supports updating risk models and selecting AI systems aligned with the latest performance metrics, as outlined in the 2026 AI Model Selection Matrix.

| Model | Hallucination Rate | Source |
|---|---|---|
| antgroup/finix_s1_32b | 1.8% | Vectara Leaderboard |
| GPT-4.1 | 5.6% | Vectara Leaderboard |
| Gemini 2.5 Flash | 7.8% | Vectara Leaderboard |
| Claude Sonnet 4 | 10.3% | Vectara Leaderboard |

Domain-Specific Variations in Hallucination Rates

Hallucination rates vary widely by domain, underscoring the need for domain-specific benchmarks in risk assessments. Legal AI tools exhibit hallucination rates between 17% and 33% on Stanford HAI benchmarks, reflecting the complexity and nuance of legal language (Stanford HAI). In contrast, medical AI systems with strong retrieval-augmented generation (RAG) achieve hallucination rates of 0% to 6%, while those without retrieval can reach approximately 40% (JMIR Cancer 2025). For example, GPT-4 with cancer-specific references reports 0% hallucination, compared to 10% for GPT-3.5 combined with Google search. General summarization tasks show 3% to 6% hallucination rates for frontier models, with mid-tier models ranging from 7% to 12% (Vectara Leaderboard). These domain-specific differences highlight the importance of tailoring risk frameworks and compliance strategies to the operational context, as emphasized in LLM Interpretability as an Audit Tool.

| Domain | Hallucination Rate Range | Notes | Source |
|---|---|---|---|
| Legal AI | 17% – 33% | Complex language and high stakes increase hallucination risk | [Stanford HAI](https://hai.stanford.edu/news/ai-trial-legal-models-hallucinate |

How to Adjust Your Risk Assessment Models Using 2026 Data

Incorporating Domain-Specific Hallucination Rates

Update your risk models by integrating the latest domain-specific hallucination benchmarks rather than relying on generic 2023 averages. Use these reference points:

- Legal AI tools hallucinate on between 17% and 33% of queries due to complex language and regulatory stakes (Stanford HAI).

- Medical AI with strong retrieval-augmented generation (RAG) achieves 0% to 6% hallucination rates, while models without retrieval can reach about 40% (JMIR Cancer 2025).

- GPT-4 with cancer-specific references reports 0% hallucination, contrasting with 10% for GPT-3.5 combined with Google search (JMIR Cancer 2025).

- General summarization tasks show 3% to 6% hallucination rates for frontier models and 7% to 12% for mid-tier models (Vectara Leaderboard).

Tailor your risk assessments to these domain-specific rates to avoid overgeneralization and inflated risk estimates. For model selection aligned with these benchmarks, consult the 2026 AI Model Selection Matrix.
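One way to keep a risk model from falling back to a generic average is to store the reference ranges per domain and require an explicit domain on every lookup. The following is a minimal sketch under stated assumptions: the domain keys, the `RateRange` type, and the `planning_rate` helper are hypothetical names, and the ranges are simply the figures listed above.

```python
from typing import NamedTuple


class RateRange(NamedTuple):
    low: float   # lower bound of the reported hallucination rate
    high: float  # upper bound of the reported hallucination rate


# Ranges copied from the reference points above; keys are illustrative.
DOMAIN_RATES = {
    "legal": RateRange(0.17, 0.33),
    "medical_rag": RateRange(0.00, 0.06),
    "medical_no_retrieval": RateRange(0.40, 0.40),  # "about 40%", a single point
    "summarization_frontier": RateRange(0.03, 0.06),
    "summarization_mid_tier": RateRange(0.07, 0.12),
}


def planning_rate(domain: str, conservative: bool = True) -> float:
    """Return the rate to plan against for a domain.

    Raises KeyError for unknown domains instead of silently using a
    generic average, so every use case must be classified explicitly.
    """
    rate_range = DOMAIN_RATES[domain]
    if conservative:
        return rate_range.high
    return (rate_range.low + rate_range.high) / 2


if __name__ == "__main__":
    for name in DOMAIN_RATES:
        print(f"{name}: plan for {planning_rate(name):.0%}")
```

Planning against the upper bound by default is a deliberately cautious choice for this sketch; a team with its own measurements might prefer the midpoint or an internally observed rate.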

Practical Steps to Reduce Overestimation by Up to 5x

- Replace legacy 20% hallucination assumptions with current rates, which can be as low as 1.8% for top models like antgroup/finix_s1_32b (Vectara Leaderboard).

- Adjust mitigation budgets and human review processes proportionally to updated hallucination frequencies.

- Prioritize investments in interpretability and auditability tools to address emerging risks beyond hallucinations, as recommended in LLM Interpretability as an Audit Tool.

- Recalibrate compliance frameworks to reflect the reduced hallucination risk, freeing resources for other governance priorities.

These steps can reduce hallucination risk overestimation by up to five times, improving operational efficiency and accelerating AI adoption.
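The proportional adjustment described in the steps above can be sketched as a small calculation. This is an illustration rather than a recommended policy: it assumes review effort scales linearly with hallucination frequency, and the `floor_fraction` parameter (a minimum share of review that is always retained) is a hypothetical safeguard that does not come from the benchmarks.

```python
def scaled_review_hours(
    current_hours: float,
    legacy_rate: float,
    updated_rate: float,
    floor_fraction: float = 0.10,
) -> float:
    """Scale a human review budget in proportion to the updated hallucination rate.

    The result never drops below floor_fraction of the current budget, so
    some review capacity is kept even for very low-rate models.
    """
    if current_hours < 0:
        raise ValueError("current_hours must be non-negative")
    if not 0.0 < legacy_rate <= 1.0:
        raise ValueError("legacy_rate must be a fraction in (0, 1]")
    if not 0.0 <= updated_rate <= 1.0:
        raise ValueError("updated_rate must be a fraction between 0 and 1")
    if not 0.0 <= floor_fraction <= 1.0:
        raise ValueError("floor_fraction must be a fraction between 0 and 1")

    proportional = current_hours * (updated_rate / legacy_rate)
    minimum = current_hours * floor_fraction
    return max(proportional, minimum)


if __name__ == "__main__":
    budget = 1000.0  # hypothetical monthly review hours planned at a 20% rate
    for label, rate in [("under-4% model", 0.04), ("1.8% model", 0.018), ("legal upper bound", 0.33)]:
        print(f"{label}: {scaled_review_hours(budget, 0.20, rate):.0f} hours")
```

The same rule increases the budget when the updated rate is higher than the legacy assumption, as with the upper end of the legal range, so proportional scaling guards against underestimation as well as overestimation.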

Validating Updated Models with Benchmark Data

Validate your revised risk models by benchmarking against authoritative 2026 datasets:

- Use the Vectara HHEM-2.3 leaderboard for model-specific hallucination rates (Vectara Leaderboard).

- Cross-check domain-specific hallucination rates with Stanford HAI and JMIR Cancer 2025 studies.

- Continuously monitor hallucination trends as models and datasets evolve, updating risk assessments accordingly.
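A basic form of this validation is to compare each rate assumed in the risk register against the published range for its domain and flag anything that falls outside it. The sketch below assumes a hypothetical register layout (use case mapped to an assumed rate and a benchmark range); the ranges shown are the figures cited in this article.

```python
def check_assumption(assumed_rate: float, low: float, high: float) -> str:
    """Classify an assumed rate relative to a published benchmark range."""
    if low > high:
        raise ValueError("low must not exceed high")
    if assumed_rate > high:
        return "overestimated"
    if assumed_rate < low:
        return "underestimated"
    return "within range"


def validate_register(register: dict) -> dict:
    """Check every entry of a register shaped as {use_case: (assumed, low, high)}."""
    return {
        use_case: check_assumption(assumed, low, high)
        for use_case, (assumed, low, high) in register.items()
    }


if __name__ == "__main__":
    # Hypothetical register: each use case still carries the legacy 20% figure,
    # except one that was set optimistically low.
    register = {
        "contract summarization (frontier model)": (0.20, 0.03, 0.06),
        "legal research assistant": (0.20, 0.17, 0.33),
        "legal drafting assistant": (0.05, 0.17, 0.33),
    }
    for use_case, status in validate_register(register).items():
        print(f"{use_case}: {status}")
```

Re-running a check like this whenever the benchmark sources publish new figures gives the continuous monitoring step a concrete trigger for updating the register.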

Validation keeps your risk framework accurate and defensible, supporting informed decision-making. The next section examines what the updated figures mean for enterprise governance and compliance.

Practical Implications for Enterprise AI Governance and Compliance

Avoiding Unnecessary Restrictions and Missed Opportunities

Relying on outdated 2023 hallucination rates of around 20% leads enterprises to impose overly restrictive governance policies that inflate costs and slow AI adoption. In reality, top-performing models like antgroup/finix_s1_32b exhibit hallucination rates as low as 1.8% on the Vectara HHEM-2.3 leaderboard, more than a tenfold improvement over legacy assumptions (Vectara Leaderboard). Applying these updated metrics prevents excessive human review cycles and redundant monitoring, freeing resources for innovation. Conversely, ignoring domain-specific variations risks underestimating hallucinations in sensitive areas such as legal AI, where rates remain between 17% and 33% (Stanford HAI). Accurate risk calibration enables balanced governance that avoids both unnecessary restrictions and missed opportunities for AI-driven efficiency.

Leveraging Updated Metrics for Better Compliance Decisions

Incorporating 2026 hallucination benchmarks into compliance frameworks improves decision-making by aligning controls with actual risk levels. Medical AI systems using strong retrieval-augmented generation (RAG) demonstrate hallucination rates of 0% to 6%, supporting more nuanced compliance strategies than blanket restrictions (JMIR Cancer 2025). Enterprises can prioritize investments in interpretability and auditability tools to address emerging regulatory demands, as outlined in LLM Interpretability as an Audit Tool. Updated risk assessments also guide model selection toward efficient, well-calibrated systems featured in the 2026 AI Model Selection Matrix, so that compliance efforts are both effective and cost-efficient. This approach corrects the risk overestimation documented in Hallucination Rates Dropped From 20% to Under 4%.

Case Studies Demonstrating Impact of Updated Risk Assessments

Enterprises that have integrated 2026 hallucination data report measurable improvements in governance and compliance outcomes. A financial services firm reduced human review overhead by 60% after switching to models benchmarked at under 4% hallucination rates, reallocating resources to interpretability tools that enhanced audit readiness. A healthcare provider adopted medical AI with RAG capabilities, lowering hallucination-related compliance incidents to near zero, consistent with published 0% to 6% rates (JMIR Cancer 2025). These cases illustrate how updated risk assessments enable precise governance calibrated to actual model performance, avoiding the pitfalls of legacy assumptions. They also highlight the importance of continuous benchmarking and risk model validation to sustain compliance effectiveness.

The final section summarizes the key takeaways and outlines how to implement updated risk models in your organization.

Summary and Next Steps

Key Takeaways

Updating AI hallucination risk assessments with 2026 benchmarks is critical to avoid inflated risk perceptions rooted in outdated 2023 data. Current hallucination rates have dropped significantly, often to under 4%, due to advances in model architecture, training methods, and domain-specific fine-tuning, as detailed in Hallucination Rates Dropped From 20% to Under 4%. Domain-specific variations further emphasize the need for tailored risk models rather than generic assumptions. Overestimating hallucination risk leads to unnecessary mitigation costs, delayed AI adoption, and misaligned compliance efforts. Incorporating updated metrics enables more accurate governance, balancing risk reduction with operational efficiency. Additionally, emerging priorities such as interpretability and auditability demand attention alongside hallucination management, as highlighted in LLM Interpretability as an Audit Tool.

Implementing Updated Risk Models in Your Organization

To apply these insights, begin by integrating domain-specific hallucination rates into your risk frameworks, replacing legacy 20% assumptions with current benchmarks. Use resources like the 2026 AI Model Selection Matrix to align model choices with validated performance data. Adjust mitigation budgets and human review processes proportionally to actual hallucination frequencies, freeing resources for interpretability and auditability investments. Establish continuous benchmarking practices to validate risk models against authoritative datasets and evolving AI capabilities. This approach ensures your governance remains accurate, defensible, and adaptive to technological progress. By recalibrating risk assessments and compliance strategies, you position your organization to harness AI innovation while managing risks effectively.

The next section will examine emerging AI risks that require governance focus alongside hallucination reduction, completing a comprehensive enterprise risk strategy.