Explainable AI and Privacy by Design: Avoid €35M EU AI Act Fines

Imagine facing a fine of up to €35 million or 7% of your global annual turnover because of how your AI system makes decisions. That is the top penalty tier of the EU AI Act, reserved for prohibited AI practices, and the Act’s requirements for high-risk AI are scheduled to apply from August 2026. GDPR adds its own ceiling of €20 million or 4% of turnover. The stakes are sky-high, and explainability isn’t just a buzzword. It’s your legal shield and a trust builder for users (see “GDPR Enforcement Trends: €7.1 Billion in Fines and Rising”).

Why Explainability Matters for GDPR

GDPR demands transparency. When AI affects people’s rights, as in credit scoring or job screening, the individuals concerned are entitled to meaningful information about how decisions are made. This isn’t optional. Explainable AI means providing clear, understandable reasons behind automated decisions. It also helps you spot and fix bias before it spirals into discrimination. Without this, you risk violating GDPR’s fairness and accountability principles, triggering costly investigations and penalties (source: “GDPR Compliance in 2024: How AI and LLMs impact European user rights”, Workstreet).

Embedding Privacy by Design in AI Pipelines

Privacy by design is your blueprint for GDPR compliance baked into every AI development stage. This means minimizing data collection, anonymizing or pseudonymizing inputs, and continuously monitoring models for privacy risks. It’s not a checkbox but a mindset shift: you integrate privacy controls at every step from data ingestion to model deployment. When privacy is embedded early, you reduce attack surfaces and build systems resilient to regulatory scrutiny. This proactive approach aligns with GDPR’s requirement for data protection by design and by default (Article 25), turning compliance from a reactive headache into a competitive advantage.
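A minimal sketch of what minimization at ingestion could look like, assuming a hypothetical record layout: fields the model does not need are dropped, and the direct identifier is replaced with a keyed hash. The field names, `ALLOWED_FIELDS`, and both functions are illustrative. Note that pseudonymized data is still personal data under GDPR, so this reduces exposure rather than removing obligations.

```python
import hashlib
import hmac

# Hypothetical: the only fields the model actually needs.
ALLOWED_FIELDS = {"age_band", "region", "tenure_months"}


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    # Keyed hash (HMAC-SHA256): the token cannot be recomputed without the key,
    # unlike a plain hash of a guessable identifier.
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> dict:
    # Drop every field that is not explicitly allowed.
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    # Keep a stable token so records for one subject can still be linked.
    reduced["subject_token"] = pseudonymize(record["user_id"], secret_key)
    return reduced


if __name__ == "__main__":
    raw = {
        "user_id": "u-123",
        "email": "a@example.com",
        "age_band": "30-39",
        "region": "DE",
        "tenure_months": 14,
    }
    print(minimize(raw, secret_key=b"load-this-from-a-secret-store"))
```

The key should live in a secret store separate from the training data, so that access to the dataset alone does not allow re-identification.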

Explainability and privacy by design are your frontline defenses against massive GDPR penalties and user rights violations. Ignore them at your peril.

Implementing Privacy Enhancing Technologies: 60% Adoption by 2025

Privacy Enhancing Technologies (PETs) are no longer optional. Over 60% of companies plan to deploy PETs like masking, differential privacy, and federated learning at scale by the end of 2025. These tools are becoming the backbone of privacy engineering in AI, enabling you to build systems that protect user data without sacrificing performance or utility (source: “AI Data Privacy Statistics & Trends 2025”).

PETs help you engineer privacy directly into your AI pipelines. They reduce exposure to sensitive data and limit the risk of breaches or regulatory violations. Here’s a quick rundown of the top PETs transforming AI privacy today:

| Privacy Enhancing Technology | How It Works | GDPR Compliance Benefit |
|---|---|---|
| Data Masking | Obscures sensitive data fields in datasets, replacing them with realistic but fake values. | Minimizes personal data exposure during training and testing, reducing breach risk. |
| Differential Privacy | Adds calibrated statistical noise to datasets or query results so that no single individual’s data noticeably changes the output. | Limits the risk of re-identifying data subjects, supporting GDPR’s data minimization and privacy principles. |
| Federated Learning | Trains AI models locally on user devices or edge nodes, sharing only model updates, not raw data. | Keeps personal data decentralized, limiting data transfers and enhancing user control. |
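To make the differential privacy row concrete, here is a minimal sketch of the Laplace mechanism applied to a counting query. The function name `dp_count` and its parameters are hypothetical, and the standard-library random generator is used only for illustration. Production systems should rely on a vetted differential privacy library, since naive floating-point noise has known weaknesses.

```python
import random
from typing import Callable, Iterable, Optional


def dp_count(
    values: Iterable,
    predicate: Callable[[object], bool],
    epsilon: float,
    rng: Optional[random.Random] = None,
) -> float:
    """Noisy count of items matching predicate, using the Laplace mechanism."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for v in values if predicate(v))
    # A counting query changes by at most 1 when one person is added or
    # removed, so its sensitivity is 1 and the Laplace scale is 1 / epsilon.
    scale = 1.0 / epsilon
    # The difference of two independent exponential draws with mean `scale`
    # follows a Laplace(0, scale) distribution.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


if __name__ == "__main__":
    ages = [23, 35, 41, 29, 52, 38, 47]
    print(dp_count(ages, lambda a: a >= 40, epsilon=0.5))
```

Smaller values of `epsilon` add more noise and give stronger protection; each answered query also spends part of an overall privacy budget, which this sketch does not track.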

Integrating PETs into your AI development lifecycle aligns with GDPR’s privacy by design mandate. They complement explainability and risk assessments by proactively reducing data sensitivity. This layered approach not only lowers your compliance risk but also builds user trust in your AI systems. For a deeper dive into embedding privacy controls early, see “EU AI Act Enforcement Starts in August 2026”.
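As an illustration of how federated learning keeps raw data local, the sketch below runs federated averaging on a toy one-parameter regression. Each client computes an update on its own data and only the updated weights are averaged centrally. All names (`local_update`, `federated_average`, `train`) and the toy model are assumptions for the example. Real deployments typically add secure aggregation or differential privacy, because model updates can still leak information.

```python
def local_update(weights, data, lr=0.1):
    """One gradient step of the model y = w * x on a client's private data."""
    w = weights[0]
    # Gradient of the mean squared error with respect to w.
    grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
    return [w - lr * grad]


def federated_average(updates, sizes):
    """Average client weights, weighted by each client's number of samples."""
    total = sum(sizes)
    dim = len(updates[0])
    return [sum(u[i] * n for u, n in zip(updates, sizes)) / total for i in range(dim)]


def train(clients, rounds=50):
    global_weights = [0.0]
    for _ in range(rounds):
        # Raw (x, y) pairs never leave the client; only weights are returned.
        updates = [local_update(global_weights, data) for data in clients]
        global_weights = federated_average(updates, [len(d) for d in clients])
    return global_weights


if __name__ == "__main__":
    # Two clients whose private data follows y = 2x.
    clients = [[(1.0, 2.0), (2.0, 4.0)], [(3.0, 6.0)]]
    print(train(clients))
```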

PETs are your best bet to stay ahead of evolving regulations and avoid the costly fines that come with data misuse. Don’t wait for enforcement to catch up. Start embedding these technologies now.

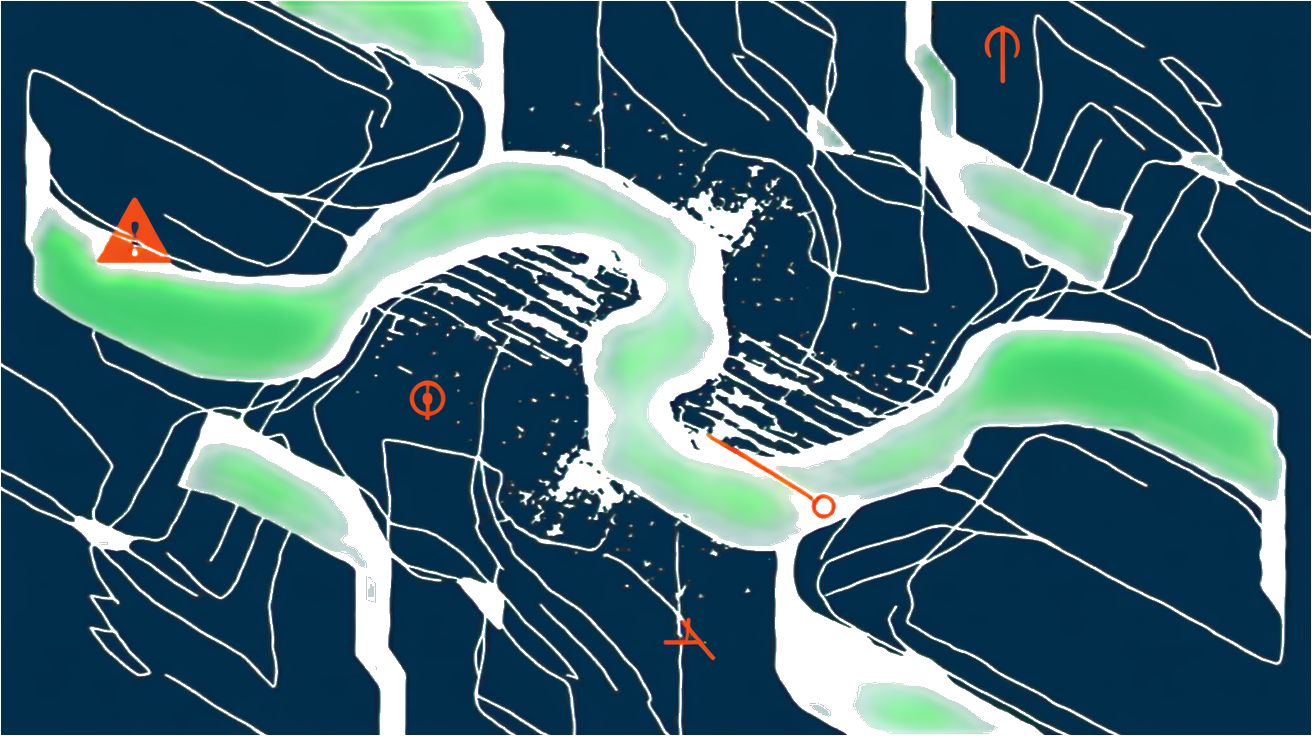

23 GDPR Risks in AI Data Sharing: Network Requests and Cookies

When your AI system shares data, network requests and cookies are the silent GDPR traps waiting to spring. A recent study found that non-compliant websites face a median of 23 GDPR risks, with 18 linked to network requests and 1 tied directly to third-party cookies (source: Privado AI). These numbers aren’t just statistics. They highlight how easily AI data flows can violate GDPR without tight controls.

Common pitfalls include sending user data to unknown or unvetted endpoints, failing to restrict data sharing to necessary parties, and neglecting cookie consent management. AI models often rely on external APIs or cloud services, which can trigger uncontrolled network requests. Each request is a potential leak point for personal data. Meanwhile, third-party cookies can silently track users across platforms, violating GDPR’s strict consent and transparency requirements.

Common Pitfalls in AI Data Flows

- Unrestricted outbound network requests that expose personal data to third parties without explicit user consent.

- Lack of granular control over which data elements are shared and with whom.

- Insufficient logging and auditing of data transmissions, making compliance verification impossible.

- Ignoring cookie consent frameworks, leading to unauthorized tracking and profiling.

- Embedding third-party scripts that initiate hidden data flows outside your control.
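Two of the pitfalls above, missing field-level control and missing audit trails, can be addressed together. The sketch below filters each outgoing payload against a per-recipient policy and logs which field names were sent, assuming a hypothetical `SHARING_POLICY` and recipient names. It logs field names only, never values, so the audit log does not itself become a store of personal data.

```python
import json
import logging
from datetime import datetime, timezone

audit_log = logging.getLogger("data_sharing_audit")

# Hypothetical policy: recipient -> fields that recipient may receive.
SHARING_POLICY = {
    "fraud-scoring-api": {"transaction_amount", "merchant_category"},
    "analytics-vendor": {"region"},
}


def prepare_payload(recipient: str, record: dict) -> dict:
    allowed = SHARING_POLICY.get(recipient)
    if allowed is None:
        # Unknown recipients get nothing by default.
        raise PermissionError(f"no sharing policy for recipient {recipient!r}")
    payload = {k: v for k, v in record.items() if k in allowed}
    # Record what was shared and what was held back, by field name only.
    audit_log.info(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "recipient": recipient,
        "fields_sent": sorted(payload),
        "fields_withheld": sorted(set(record) - allowed),
    }))
    return payload


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    record = {"email": "a@example.com", "region": "DE", "transaction_amount": 42.5}
    print(prepare_payload("analytics-vendor", record))
```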

Mitigating Risks from Third-Party Trackers

- Implement strict allowlists for network endpoints your AI can contact.

- Apply data minimization and Privacy Enhancing Technologies (PETs) such as masking or anonymization before data leaves your system.

- Enforce cookie consent banners that comply with GDPR’s transparency and opt-in requirements.

- Regularly audit and update third-party integrations to ensure they meet GDPR standards.

- Monitor network traffic for unexpected or unauthorized data flows.
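The first item in this list, a strict allowlist for outbound endpoints, can be sketched as a small guard in front of whatever HTTP client you use. The hostnames, `ALLOWED_HOSTS`, and the `send` callable are hypothetical placeholders. An application-level check like this complements network-level egress controls rather than replacing them.

```python
from urllib.parse import urlsplit

# Hypothetical hosts the AI service is permitted to contact.
ALLOWED_HOSTS = {"api.internal.example.com", "inference.example.com"}


def is_allowed(url: str) -> bool:
    parts = urlsplit(url)
    # Require HTTPS and an exact hostname match; no suffix or wildcard matching,
    # so "inference.example.com.evil.io" is rejected.
    return parts.scheme == "https" and parts.hostname in ALLOWED_HOSTS


def guarded_request(url: str, send):
    """Call send(url) only if the destination is on the allowlist."""
    if not is_allowed(url):
        raise PermissionError(f"blocked outbound request to {url!r}")
    return send(url)


if __name__ == "__main__":
    print(is_allowed("https://inference.example.com/v1/predict"))
    print(is_allowed("https://tracker.example.net/collect"))
```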

Ignoring these risks is a fast track to GDPR fines and user distrust. Control your AI’s data sharing like your compliance depends on it, because it does.

Next up: why 90% of data breaches stem from human error and how training plus a strong DPO role can save your skin.

90% of Data Breaches Stem from Human Error: Training and DPO Role

Why Staff Training is Non-Negotiable

Human error causes 90% of data breaches. That’s not a statistic you can ignore when building GDPR-compliant AI systems. Your best algorithms and Privacy Enhancing Technologies won’t save you if your team mishandles data or overlooks critical compliance steps. Regular, targeted staff training is your frontline defense. It keeps everyone sharp on GDPR’s evolving requirements and the specific risks AI projects introduce. Training isn’t a one-off checkbox. It’s an ongoing process that adapts as your AI systems and regulatory landscape change. Without it, you’re leaving the door wide open for costly breaches and fines.

The Data Protection Officer’s Role in AI Projects

A dedicated Data Protection Officer (DPO) is more than a compliance figurehead. They’re your AI project’s guardian against GDPR pitfalls. The DPO advises on embedding privacy by design principles from the start and monitors data processing activities continuously. They act as the bridge between your technical teams, legal advisors, and regulators. This role is crucial for interpreting complex AI behaviors through a GDPR lens and for managing incident response when things go wrong. Appointing a knowledgeable DPO isn’t always optional. GDPR requires one for public authorities and for organizations whose core activities involve large-scale monitoring of individuals or large-scale processing of sensitive data, and it is a strategic move to prevent breaches that stem from human oversight.

Ongoing training combined with a strong DPO presence creates a culture of accountability and vigilance. This duo is your best bet to keep AI projects compliant and avoid the multi-million-euro fines that can follow data breaches (up to €20 million or 4% of global turnover under GDPR).

How AI GDPR Will Shape Privacy Trends in 2025

Frequently Asked Questions

How can AI engineers implement privacy by design for GDPR?

Start by embedding data minimization into your AI workflows. Collect only what’s necessary and anonymize data whenever possible. Use privacy impact assessments early in development to identify risks. Automate consent management and ensure data subjects can exercise their rights easily. Collaborate closely with your Data Protection Officer to align technical controls with legal requirements.
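One way to picture automated consent management is a purpose-based gate in front of the training pipeline, sketched below. The `ConsentRegistry` class and the purpose names are hypothetical, and an in-memory dictionary stands in for a real consent store. Consent is only one of GDPR’s lawful bases, so your DPO should confirm which basis applies to each purpose.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRegistry:
    # subject_id -> set of purposes the subject has opted in to
    _grants: dict = field(default_factory=dict)

    def grant(self, subject_id: str, purpose: str) -> None:
        self._grants.setdefault(subject_id, set()).add(purpose)

    def withdraw(self, subject_id: str, purpose: str) -> None:
        # Withdrawal must be as easy as granting; unknown subjects are a no-op.
        self._grants.get(subject_id, set()).discard(purpose)

    def allows(self, subject_id: str, purpose: str) -> bool:
        # Default is deny: no recorded opt-in means no processing.
        return purpose in self._grants.get(subject_id, set())


def records_for_purpose(records, registry: ConsentRegistry, purpose: str):
    """Keep only records whose subject has opted in to this purpose."""
    return [r for r in records if registry.allows(r["subject_id"], purpose)]


if __name__ == "__main__":
    registry = ConsentRegistry()
    registry.grant("u-1", "model_training")
    rows = [{"subject_id": "u-1", "x": 1}, {"subject_id": "u-2", "x": 2}]
    print(records_for_purpose(rows, registry, "model_training"))
```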

What are the best Privacy Enhancing Technologies for AI pipelines?

Look for tools that support differential privacy, homomorphic encryption, and secure multi-party computation. These help process data without exposing personal details. Data masking and federated learning also reduce risk by keeping sensitive data local. Choose technologies that integrate seamlessly with your existing stack and scale with your AI models.

How does explainability impact GDPR compliance in automated decisions?

Explainability is crucial because GDPR mandates transparency in automated decision-making. Your AI must provide clear, understandable reasons for decisions affecting individuals. This builds trust and enables users to challenge outcomes. Use model-agnostic explanation tools or inherently interpretable models to meet this requirement without sacrificing performance.