Why 44% of Companies Are Racing to Add AI Testing Now

Manual QA is a bottleneck nobody talks about enough. It drags down release velocity, buries bugs in layers of human error, and inflates costs with repetitive, tedious tasks. Even the best testers can’t keep up with the pace modern software demands. That’s why 44 percent of companies have already integrated AI into their QA processes, with another 19 percent planning to jump on board soon (AI in Test Automation Market Size | CAGR of 19%).

The hidden costs of manual QA are more than just time. They include missed defects, inconsistent test coverage, and burnout. AI testing frameworks tackle these head-on by automating test generation, execution, and analysis. This slashes test cycles dramatically. Teams report more than doubling their productivity and cutting false positives, which means less time chasing phantom bugs and more time shipping features. In fact, 78 percent of software testers now use AI to boost their productivity (30 Essential AI in Quality Assurance Statistics [2024] - QA.tech).

Here’s how AI testing frameworks change the game:

- Accelerate test cycles by automating repetitive tasks and running tests in parallel

- Improve accuracy by reducing human error and false positives

- Enhance coverage with AI-driven test case generation that adapts to code changes

- Free up testers to focus on exploratory and high-value testing

- Scale effortlessly as your codebase and release cadence grow

The market for AI-enabled testing tools is booming, expected to hit $2 billion by 2033 (30 Essential AI in Quality Assurance Statistics [2024] - QA.tech). The integration of machine learning in test automation has more than doubled since last year (Top 30+ Test Automation Statistics in 2025 - Testlio). If you’re not moving fast on AI testing, you’re already behind.

Choosing the Right AI Testing Framework: What Matters Most

Picking an AI testing framework isn’t just about flashy features. It’s about how well it fits your existing setup and scales with your team. The wrong choice means costly rewrites, frustrated developers, and stalled pipelines. Focus on three critical factors: integration, language and platform support, and automation capabilities.

Integration with Your Existing Pipeline

Your AI testing tool must slide smoothly into your current CI/CD workflow. Look for frameworks that support popular CI servers like Jenkins, GitLab CI, or GitHub Actions without heavy customization. Integration with version control, issue trackers, and notification systems is a must to keep feedback loops tight. Tools like Katalon Studio shine here, offering AI-powered visual testing that plugs into existing workflows and fosters cross-team collaboration (Top 10 AI Testing Tools Transforming Quality Assurance in 2024).

| Integration Aspect | Why It Matters | Example Features |
|---|---|---|
| CI/CD Compatibility | Automate test runs on every commit | Jenkins, GitLab, GitHub Actions |
| VCS & Issue Tracker Sync | Trace failures to code and tickets | Git, Jira, Azure DevOps |
| Notification Hooks | Fast feedback to developers | Slack, email, MS Teams |
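To make the notification-hook row concrete, here is a minimal sketch of turning a test report into a chat message. It assumes your test runner emits a JUnit-style XML report and that your chat tool accepts a JSON body with a single `text` field, which is a common webhook shape but worth checking against your tool's docs. The function names and the sample report are hypothetical, and the sketch only builds the payload; sending it is left to your pipeline.

```python
import json
import xml.etree.ElementTree as ET


def summarize_junit(xml_text: str) -> dict:
    """Count test cases and collect the names of failing ones from JUnit-style XML."""
    root = ET.fromstring(xml_text.strip())
    # Works whether the root is <testsuites> or a single <testsuite>.
    cases = list(root.iter("testcase"))
    failed = [
        f"{case.get('classname', '')}.{case.get('name', '')}"
        for case in cases
        if case.find("failure") is not None or case.find("error") is not None
    ]
    return {"total": len(cases), "failed": failed}


def build_notification(summary: dict, commit: str) -> str:
    """Build a JSON body with one "text" field (assumed webhook shape)."""
    status = "FAILED" if summary["failed"] else "PASSED"
    lines = [
        f"Tests {status} on {commit}: "
        f"{len(summary['failed'])}/{summary['total']} failing"
    ]
    lines += [f"- {name}" for name in summary["failed"]]
    return json.dumps({"text": "\n".join(lines)})


# Hypothetical report used only for illustration.
SAMPLE_REPORT = """
<testsuite name="checkout" tests="3">
  <testcase classname="cart" name="test_add_item"/>
  <testcase classname="cart" name="test_remove_item">
    <failure message="expected 0 items, got 1"/>
  </testcase>
  <testcase classname="payment" name="test_card_declined"/>
</testsuite>
"""

if __name__ == "__main__":
    summary = summarize_junit(SAMPLE_REPORT)
    print(build_notification(summary, commit="abc1234"))
```

Naming the failing tests in the message itself is the point: developers can see what broke without opening the CI dashboard.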

Language and Platform Support

Your stack is unique. The AI testing framework must cover your programming languages, frontend frameworks, and target platforms. Whether you’re running React, Angular, or backend Java, Python, or Go, broad language support avoids bottlenecks. Also, ensure compatibility with browsers and OSes your users rely on. Modern AI tools offer robust multi-language and cross-platform support, managing test cases and reports seamlessly across environments (Top 18 AI-Powered Software Testing Tools in 2024 - Code Intelligence).

Automation and Analytics Features

Automation is the heart of AI testing. Prioritize frameworks that generate adaptive test cases, execute tests in parallel, and analyze results with AI-driven insights. This reduces false positives and highlights real risks faster. Look for built-in analytics dashboards that track coverage, flakiness, and test health over time. These features free your team from manual drudgery and empower smarter decisions.

- AI-driven test generation adapting to code changes

- Parallel execution to speed up test cycles

- Intelligent failure analysis reducing noise

- Visual and data-driven analytics dashboards
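Parallel execution only pays off when the shards finish at roughly the same time. As a rough illustration of what a framework might do under the hood, the sketch below splits tests across runners using historical durations and a greedy longest-first heuristic. It is a plain scheduling heuristic, not any vendor's algorithm, and the test names and timings are made up.

```python
import heapq


def shard_tests(durations: dict[str, float], shard_count: int) -> list[list[str]]:
    """Assign tests to shards, longest test first, always onto the lightest shard."""
    if shard_count < 1:
        raise ValueError("shard_count must be at least 1")
    # Heap entries are (total_seconds, shard_index); the lightest shard pops first.
    heap = [(0.0, index) for index in range(shard_count)]
    heapq.heapify(heap)
    shards: list[list[str]] = [[] for _ in range(shard_count)]
    # Sort by duration descending, then by name so the result is deterministic.
    for name, seconds in sorted(durations.items(), key=lambda kv: (-kv[1], kv[0])):
        load, index = heapq.heappop(heap)
        shards[index].append(name)
        heapq.heappush(heap, (load + seconds, index))
    return shards


# Hypothetical historical durations in seconds.
DURATIONS = {
    "test_checkout": 120,
    "test_search": 90,
    "test_login": 60,
    "test_profile": 45,
    "test_cart": 30,
}

if __name__ == "__main__":
    for number, shard in enumerate(shard_tests(DURATIONS, shard_count=2), start=1):
        print(f"shard {number}: {shard}")
```

With these sample timings the two shards carry 165 and 180 seconds of work, against 345 seconds when run serially.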

Choosing wisely here sets the stage for faster QA and smoother releases.

3 Clear Steps to Embed AI Testing into Your CI/CD Workflow

1. Audit Your Current Pipeline

   Start by mapping out your existing CI/CD workflow. Identify bottlenecks where manual testing slows releases or causes flaky results. Look for gaps in test coverage and areas with frequent false positives or negatives. This baseline helps you measure AI’s impact later. Don’t skip this step: it’s your reality check before adding AI complexity. Focus on integration points where AI-driven tools can plug in seamlessly, such as test generation or failure analysis stages.

2. Select AI Tools with Predictive Analytics

   Not all AI testing frameworks are created equal. Prioritize tools that offer predictive analytics to forecast test failures and optimize test suites dynamically. This capability reduces wasted cycles and surfaces critical issues earlier. IBM’s Watson AI expansion in 2024 highlights how predictive models can automate test case generation and failure triage, accelerating QA feedback loops (AI in Test Automation Market Size | CAGR of 19%). Also, consider frameworks with strong integration APIs and support for parallel execution to maximize pipeline throughput.

3. Automate, Monitor, and Iterate

   Embed your chosen AI tools into the pipeline with automation scripts and triggers. But don’t treat this as a one-off project. Continuously monitor AI-driven test outcomes and pipeline health metrics. Use dashboards and alerts to catch anomalies early. AI models improve with feedback, so feed them real-world results to reduce noise and false alarms over time. This iterative approach ensures your AI testing framework evolves alongside your codebase and team needs, keeping QA fast and reliable as adoption scales (Top 30+ Test Automation Statistics in 2025 - Testlio).
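The audit baseline and the later monitoring step can share the same measurement. Here is one way that might look: a small sketch that summarizes a batch of pipeline runs and reports the change against a baseline. The `PipelineRun` record, its fields, and the sample numbers are assumptions for illustration; in practice you would fill them from your CI server's build history and your triage notes.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PipelineRun:
    duration_minutes: float
    passed: bool
    # False when triage found no real defect behind a failed run (a false alarm).
    failure_was_real: bool = True


def summarize_runs(runs: list[PipelineRun]) -> dict[str, float]:
    """Reduce a batch of runs to the metrics worth tracking over time."""
    if not runs:
        raise ValueError("need at least one run")
    failures = [run for run in runs if not run.passed]
    false_alarms = [run for run in failures if not run.failure_was_real]
    return {
        "median_duration_minutes": median(run.duration_minutes for run in runs),
        "failure_rate": len(failures) / len(runs),
        "false_alarm_rate": len(false_alarms) / len(failures) if failures else 0.0,
    }


def compare(baseline: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Return current minus baseline for each metric; negative means improvement."""
    return {key: current[key] - baseline[key] for key in baseline}


# Hypothetical runs: before and after adopting AI-assisted testing.
BASELINE_RUNS = [
    PipelineRun(40, True),
    PipelineRun(50, False, failure_was_real=False),
    PipelineRun(60, False),
    PipelineRun(45, True),
]
CURRENT_RUNS = [
    PipelineRun(30, True),
    PipelineRun(35, True),
    PipelineRun(25, False),
    PipelineRun(40, True),
]

if __name__ == "__main__":
    print(compare(summarize_runs(BASELINE_RUNS), summarize_runs(CURRENT_RUNS)))
```

Capturing the baseline before you change anything is what lets you later say whether the AI tooling actually moved these numbers.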

Automate Test Case Generation: Sample Pipeline Snippet with IBM Watson

Integrating Watson AI into CI/CD

IBM Watson’s latest AI enhancements, announced in April 2024, focus on predictive analytics and automated test case generation to streamline QA workflows (AI in Test Automation Market Size | CAGR of 19%). Embedding Watson into your CI/CD pipeline means your tests evolve with every commit. Instead of manually writing or updating test cases, Watson analyzes code changes and historical test data to suggest or generate relevant tests automatically. This reduces bottlenecks and accelerates feedback loops.

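Here’s a simplified example of how you might integrate Watson’s AI-powered test generation into a Jenkins pipeline. The snippet calls a test-generation API with the latest commit, saves the returned test cases, then runs them as part of the build. Treat the endpoint URL and the run-tests command as placeholders and substitute whatever your Watson service and test runner actually expose: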

```groovy
// Requires the Jenkins HTTP Request plugin for the httpRequest step.
pipeline {
    agent any
    stages {
        stage('Checkout') {
            steps {
                checkout scm
            }
        }
        stage('Generate Tests with Watson AI') {
            steps {
                script {
                    // Placeholder endpoint: replace with the URL your service exposes.
                    def response = httpRequest(
                        httpMode: 'POST',
                        url: 'https://watson-ai.ibm.com/api/test-generation',
                        contentType: 'APPLICATION_JSON',
                        requestBody: """{
                            "repo": "${env.GIT_URL}",
                            "commit": "${env.GIT_COMMIT}"
                        }"""
                    )
                    // Save the generated test cases for the next stage.
                    writeFile file: 'generated_tests.json', text: response.content
                }
            }
        }
        stage('Run Generated Tests') {
            steps {
                // run-tests stands in for your own test runner command.
                sh 'run-tests --input generated_tests.json'
            }
        }
    }
}
```

Automating Predictive Test Runs

Watson’s AI doesn’t just generate tests. It predicts which tests are most likely to fail based on code changes and historical failure patterns. This predictive test execution prioritizes high-risk areas, slashing test suite runtime and focusing developer attention where it matters most. You avoid wasting cycles on low-impact tests while catching bugs earlier.

In practice, after Watson generates test cases, it ranks them by predicted failure probability. Your pipeline can then run the top-ranked tests first, optionally gating deployments on their success. This approach blends AI-driven insight with your existing test automation, making your QA pipeline smarter and faster without extra manual effort.
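To show the shape of that ranking step, here is a deliberately simple stand-in. It is not Watson's model: it scores each test from its smoothed historical failure rate plus a fixed bonus when the test covers a file changed in the commit. The `HistoryEntry` record, the `change_weight` value, and the sample data are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HistoryEntry:
    name: str
    runs: int
    failures: int
    covered_files: frozenset


def risk_score(test: HistoryEntry, changed_files: frozenset, change_weight: float = 0.5) -> float:
    """Heuristic risk: smoothed failure rate, plus a bonus if the change touches the test."""
    # Laplace smoothing keeps rarely run tests from scoring exactly 0 or 1.
    history = (test.failures + 1) / (test.runs + 2)
    touched = bool(test.covered_files & changed_files)
    return history + (change_weight if touched else 0.0)


def prioritize(tests: list, changed_files: frozenset, limit: Optional[int] = None) -> list:
    """Return test names ordered from highest to lowest risk, optionally truncated."""
    ranked = sorted(tests, key=lambda t: (-risk_score(t, changed_files), t.name))
    return [t.name for t in ranked[:limit]]


# Hypothetical history and change set.
HISTORY = [
    HistoryEntry("test_checkout", 98, 9, frozenset({"cart.py", "payment.py"})),
    HistoryEntry("test_login", 48, 24, frozenset({"auth.py"})),
    HistoryEntry("test_search", 198, 1, frozenset({"search.py"})),
    HistoryEntry("test_refund", 18, 0, frozenset({"payment.py"})),
]
CHANGED = frozenset({"payment.py"})

if __name__ == "__main__":
    # Run the two riskiest tests first; the rest can follow or run nightly.
    print(prioritize(HISTORY, CHANGED, limit=2))
```

A pipeline could run the top slice as a fast gate and defer the remainder, which is where the runtime savings come from. A real predictive model would learn the weights from data rather than hard-coding them.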

The payoff is clear: faster feedback, fewer missed bugs, and a QA process that scales with your team’s velocity. Integrating AI like Watson into your developer pipeline is no longer optional; it’s a competitive edge.

Frequently Asked Questions

What are the biggest challenges when integrating AI testing frameworks?

The toughest hurdles usually involve data quality and integration complexity. AI models need clean, relevant test data to learn effectively, which can be a bottleneck if your existing test suites are outdated or inconsistent. Plus, weaving AI tools into your current pipeline often requires custom connectors or scripts, especially if your CI/CD setup is bespoke or fragmented. Expect some upfront effort to align your workflows and retrain teams on new processes.

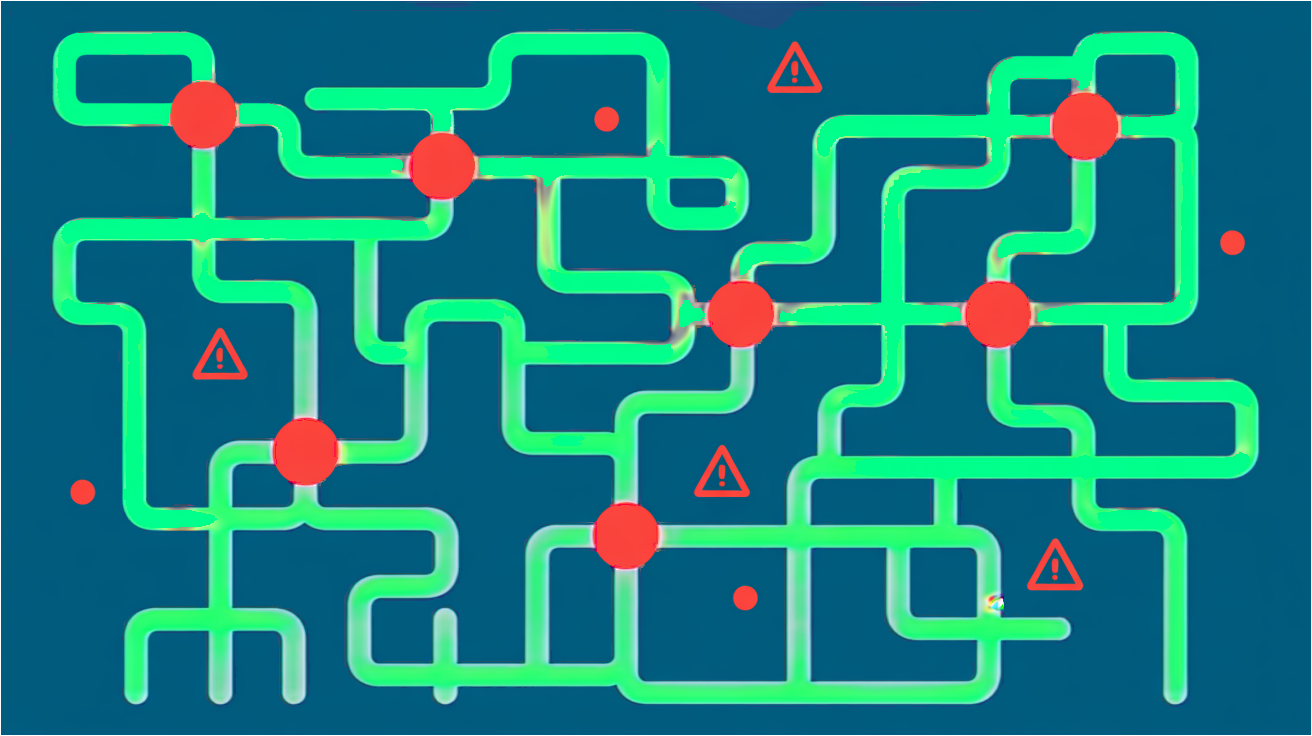

How do AI testing tools handle false positives and flaky tests?

AI testing frameworks reduce false positives by learning from historical test outcomes and adapting to environmental changes. They often use pattern recognition to distinguish real failures from flaky tests caused by timing issues or external dependencies. However, no system is perfect, so continuous tuning and human oversight remain crucial to keep noise low and trust high in your automated results.
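The simplest version of that pattern recognition needs no machine learning at all. As a rough sketch, the rule below flags a test as flaky when it has both passed and failed on the same commit, since the code did not change between those runs. The function name, the result tuple layout, and the sample history are assumptions; commercial tools layer richer signals on top of this idea.

```python
from collections import defaultdict


def classify_tests(results: list) -> dict:
    """Classify tests from (test_name, commit, passed) records.

    flaky:   passed and failed on the same commit
    failing: failed on some commit without ever passing on that commit
    passing: never failed
    """
    outcomes = defaultdict(lambda: defaultdict(set))
    for name, commit, passed in results:
        outcomes[name][commit].add(passed)

    verdicts = {}
    for name, by_commit in outcomes.items():
        if any(seen == {True, False} for seen in by_commit.values()):
            verdicts[name] = "flaky"
        elif any(seen == {False} for seen in by_commit.values()):
            verdicts[name] = "failing"
        else:
            verdicts[name] = "passing"
    return verdicts


# Hypothetical run history: (test name, commit, passed).
RESULTS = [
    ("test_upload", "a1", False),
    ("test_upload", "a1", True),   # same commit, different outcome
    ("test_total", "a1", True),
    ("test_total", "b2", False),
    ("test_total", "b2", False),   # consistently red after the change in b2
    ("test_login", "a1", True),
    ("test_login", "b2", True),
]

if __name__ == "__main__":
    print(classify_tests(RESULTS))
```

Tests labeled flaky are candidates for quarantine or retry, while tests labeled failing deserve a developer's attention first.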

Can AI testing frameworks work with legacy CI/CD pipelines?

Yes, but with caveats. Most AI testing tools offer APIs or plugins that can be retrofitted into older pipelines, though the integration might not be seamless. Legacy systems may lack the hooks or telemetry data modern AI tools rely on, so expect some customization or partial adoption initially. The key is to start small, prove value, then expand AI testing coverage as you modernize your pipeline incrementally.