Why Traditional AI Testing Misses 70% of Real-World Failures

Imagine your AI model scores 98% accuracy in the lab but fails silently once deployed. That’s not a glitch; it’s a systemic blind spot. Traditional AI testing zeroes in on metrics like accuracy, precision, and recall, measured on static datasets. It’s a controlled environment where inputs and outputs are predictable. But real-world systems don’t operate in neat boxes.

These tests miss the tangled web of dependencies, infrastructure hiccups, and unexpected data shifts that AI faces in production. For example, a slight delay in data streaming or a subtle hardware fault can cascade into catastrophic failures. The AI might still output predictions, but those predictions could be dangerously wrong or inconsistent. This is the silent breakdown nobody sees until it’s too late. Traditional testing methods simply don’t simulate these complex failure modes or the dynamic conditions that AI systems encounter daily.

Moreover, offline metrics don’t capture how AI interacts with other components like APIs, databases, or user interfaces under stress. They ignore how latency, concurrency, or partial outages impact model performance. This gap leads to overconfidence in model robustness and blindsides teams when the AI behaves unpredictably in production. To truly understand AI resilience, you need to stress the entire system under realistic failure scenarios. That’s where chaos engineering steps in, forcing failure to expose hidden vulnerabilities before your users do.

5 Chaos Engineering Experiments Tailored for AI Systems

- Model Latency Injection

  Inject artificial delays into the AI model’s inference pipeline. This simulates network lag, overloaded GPUs, or throttled APIs. The goal is to observe how your system handles slow responses without timing out or returning stale predictions. Latency spikes can expose brittle retry logic or cascading failures in downstream services. If your AI degrades gracefully or triggers fallback mechanisms, you’ve built resilience. If not, you’ve found a critical weak spot.

- Data Pipeline Disruption

  Interrupt or corrupt data streams feeding your AI models. This could mean dropping batches, shuffling timestamps, or injecting malformed records. AI systems rely heavily on clean, timely data. When the pipeline breaks, models might receive incomplete or misleading inputs, causing unpredictable outputs. This experiment reveals how well your system detects and recovers from data anomalies or outages. It also tests alerting and rollback procedures before bad data poisons your production environment.

- Adversarial Input Fuzzing

  Feed your AI model deliberately crafted, borderline inputs designed to confuse or mislead it. Unlike standard test sets, adversarial fuzzing probes for blind spots in model decision boundaries. This uncovers vulnerabilities to subtle perturbations or rare edge cases that traditional testing misses. It’s a proactive way to identify potential failure modes that could lead to hallucinations, bias, or security exploits.

- Infrastructure Resource Starvation

  Simulate resource exhaustion scenarios like CPU throttling, memory leaks, or disk I/O bottlenecks on AI-serving nodes. AI workloads are resource-intensive and sensitive to infrastructure health. This test checks if your system can maintain throughput or degrade predictably under constrained conditions. It also surfaces hidden dependencies on cloud services or hardware accelerators that might fail silently.

- API Dependency Failures

  Force failures in external APIs or microservices your AI depends on. This includes timeouts, error responses, or partial data returns. AI systems rarely operate in isolation; they interact with multiple services for data enrichment, feature extraction, or decision support. Testing these failure modes ensures your AI can handle partial outages gracefully without cascading errors or data corruption.
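To make the first experiment concrete, here is a minimal Python sketch of latency injection. It is an illustration under stated assumptions, not a production harness: `predict` is a hypothetical stand-in for a real model call, and the delay, timeout, and fallback values are arbitrary. The wrapper slows down a fraction of calls, and the serving function enforces a deadline and falls back to a default value when the deadline is missed.

```python
import random
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout


def predict(features):
    """Hypothetical stand-in for a real model inference call."""
    return sum(features) / len(features)


def with_injected_latency(fn, delay_s, probability, rng):
    """Wrap fn so that a fraction of calls sleep for delay_s before running."""
    def wrapped(*args, **kwargs):
        if rng.random() < probability:
            time.sleep(delay_s)  # the injected fault
        return fn(*args, **kwargs)
    return wrapped


def serve(model_fn, features, timeout_s, fallback, pool):
    """Call the model with a deadline; return (value, source)."""
    future = pool.submit(model_fn, features)
    try:
        return future.result(timeout=timeout_s), "model"
    except FutureTimeout:
        # The slow call keeps running in its worker thread; we stop waiting.
        return fallback, "fallback"


def run_experiment(n_requests=20, delay_s=0.3, probability=0.5,
                   timeout_s=0.1, seed=0):
    """Send requests one at a time and count how each one was answered."""
    rng = random.Random(seed)
    slow_predict = with_injected_latency(predict, delay_s, probability, rng)
    counts = {"model": 0, "fallback": 0}
    with ThreadPoolExecutor(max_workers=8) as pool:
        for _ in range(n_requests):
            _, source = serve(slow_predict, [0.2, 0.4, 0.6],
                              timeout_s, fallback=0.0, pool=pool)
            counts[source] += 1
    return counts


if __name__ == "__main__":
    print(run_experiment())
```

The ratio of `fallback` to `model` answers is the signal to watch. In a real system you would also want to check what the fallback does to downstream consumers, which this sketch does not model.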

These experiments go beyond traditional testing by stressing the entire AI ecosystem. They reveal hidden vulnerabilities in model logic, data integrity, infrastructure, and integrations. If you want your AI to survive real-world chaos, start here. For more on why AI projects often fail before production, see "Why Most AI Agent Projects Stall Before Production." To understand how teams monitor AI under stress, see "AI Observability: How 1,340 Teams Overcame Barriers."

Comparing Chaos Engineering Tools for AI Resilience Testing

Chaos engineering tools come in many flavors. Some focus on infrastructure faults like server crashes or network latency. Others extend into application-level disruptions. But AI systems demand more: you need tools that can simulate GPU failures, model drift, and data pipeline corruption, failure modes that generic infrastructure tooling rarely covers.

Open-source chaos frameworks excel at basic fault injection and are highly customizable. They let you script failures in compute nodes, storage, or network layers. But they often lack built-in support for AI-specific conditions. Commercial platforms, by contrast, increasingly offer modules tailored for AI resilience testing. These include GPU fault simulation, synthetic data perturbations, and integration hooks for popular ML frameworks. The trade-off: commercial tools tend to be more user-friendly but come with licensing costs and potential vendor lock-in.

| Feature | Open-Source Chaos Tools | Commercial Chaos Platforms |
|---|---|---|
| Infrastructure Fault Injection | Strong support (CPU, network) | Strong support + AI hardware faults |
| AI Model Drift Simulation | Limited or requires custom code | Built-in or extensible modules |
| GPU Fault Injection | Rare, mostly experimental | Available in specialized offerings |
| Data Pipeline Corruption | Possible via scripting | Integrated with ML data workflows |
| Integration with ML Frameworks | Manual setup required | Native or plugin-based integration |
| Usability | High customization, steep learning curve | User-friendly, guided workflows |
| Cost | Free, community-supported | Paid, enterprise-grade support |

Choosing the right tool depends on your team’s expertise, budget, and AI system complexity. If your focus is on deep AI resilience testing, commercial platforms may save time and reduce risk. For teams with strong engineering resources, open-source tools provide flexibility and control.

Next up: how to embed chaos engineering directly into your AI deployment pipelines for continuous robustness validation.

Integrating Chaos Engineering into AI Deployment Pipelines

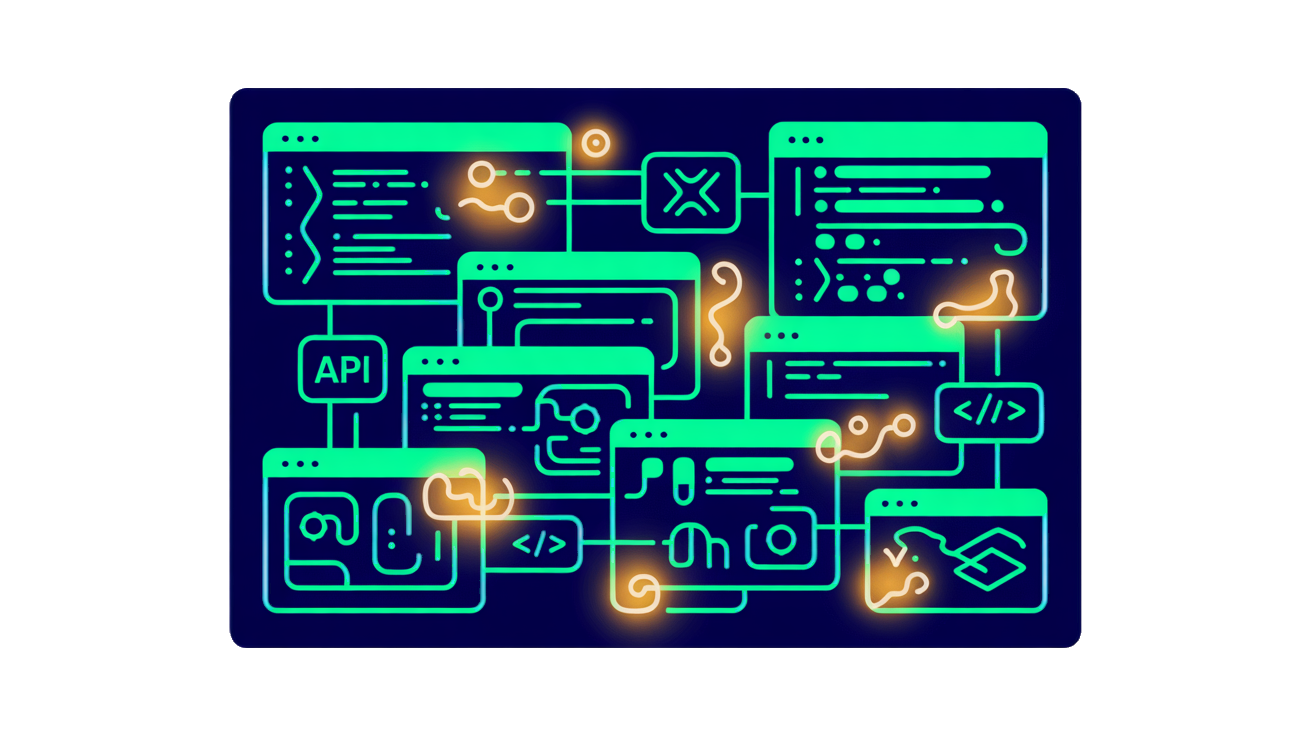

Chaos engineering isn’t a one-off stunt. It becomes truly powerful when embedded directly into your AI deployment pipelines. By integrating chaos experiments into your CI/CD workflows, you shift from reactive firefighting to proactive resilience validation. Every model update, infrastructure change, or configuration tweak triggers controlled fault injections and stress tests. This continuous validation ensures your AI system’s robustness is not just a checkbox before release but a living, evolving guarantee.

Embedding chaos tests into your pipelines also enables early detection of degradation. Instead of waiting for users to report failures or for monitoring alerts to go off, your pipeline surfaces subtle weaknesses during routine builds. This reduces downtime and costly rollbacks. Plus, coupling chaos experiments with your monitoring and alerting systems creates a feedback loop. You get real-time insights on how faults impact model predictions, latency, or data pipelines. This tight integration helps your team pinpoint vulnerabilities faster and prioritize fixes based on actual risk to system performance.
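One way to picture a pipeline-embedded chaos check is as a gate that compares model quality with and without an injected fault and fails the build when the drop exceeds a budget. The Python sketch below is hypothetical throughout: `toy_model`, `DATASET`, the fault names, and the `max_accuracy_drop` threshold are all illustrative assumptions, and a real gate would load your own model, evaluation set, and fault catalogue.

```python
def evaluate(model_fn, dataset):
    """Fraction of examples the model labels correctly."""
    correct = sum(1 for x, y in dataset if model_fn(x) == y)
    return correct / len(dataset)


def drop_feature(model_fn, index, fill=0.0):
    """Fault: one input feature goes missing and is replaced by `fill`."""
    def faulty(x):
        degraded = list(x)
        degraded[index] = fill
        return model_fn(degraded)
    return faulty


def resilience_gate(model_fn, dataset, faults, max_accuracy_drop):
    """Return (passed, report) comparing each fault against the baseline."""
    baseline = evaluate(model_fn, dataset)
    report = {"baseline": baseline}
    passed = True
    for name, inject in faults.items():
        accuracy = evaluate(inject(model_fn), dataset)
        report[name] = accuracy
        if baseline - accuracy > max_accuracy_drop:
            passed = False
    return passed, report


def toy_model(x):
    """Hypothetical classifier that leans heavily on feature 0."""
    return int(0.9 * x[0] + 0.1 * x[1] >= 1.0)


# Tiny illustrative evaluation set of (features, label) pairs.
DATASET = [([2.0, 0.0], 1), ([0.0, 2.0], 0), ([1.5, 1.0], 1), ([0.5, 1.0], 0)]
FAULTS = {
    "feature_0_missing": lambda m: drop_feature(m, 0),
    "feature_1_missing": lambda m: drop_feature(m, 1),
}


def main():
    """Print the report and return a process exit code (0 = gate passed)."""
    ok, report = resilience_gate(toy_model, DATASET, FAULTS,
                                 max_accuracy_drop=0.1)
    print(report)
    return 0 if ok else 1
```

A CI step could call `sys.exit(main())` so that a failed gate blocks the release. With the toy data here, losing feature 0 halves accuracy and the gate fails, while losing feature 1 has no effect, which is the kind of asymmetry such a check is meant to surface.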

In short, chaos engineering becomes part of your AI system’s DNA. It moves beyond manual testing or isolated experiments and becomes a continuous, automated process that keeps your AI resilient in the face of real-world failures. This approach aligns with modern DevOps and MLOps practices, where automation and observability are key to managing complexity and uncertainty in AI deployments.

Frequently Asked Questions

How do I start chaos engineering for AI with limited resources?

Begin small and focus on high-impact failure scenarios relevant to your AI system. Use lightweight tools or scripts to simulate faults in data pipelines, model serving, or infrastructure. Automate these experiments gradually and integrate them into your CI/CD or MLOps workflows. The key is to prioritize experiments that reveal hidden weaknesses without requiring a full chaos platform upfront.
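As an example of how small such a script can be, the following Python sketch simulates a faulty data pipeline using only the standard library. The record shape (`id`, `value`), the fault rates, and the `validate` guard are assumptions made for illustration; substitute your own schema and checks.

```python
import math
import random


def corrupt_stream(records, drop_rate, malform_rate, seed=0):
    """Yield records with some dropped and some given a NaN 'value' field."""
    rng = random.Random(seed)
    for record in records:
        roll = rng.random()
        if roll < drop_rate:
            continue  # simulate a lost record
        if roll < drop_rate + malform_rate:
            yield {**record, "value": float("nan")}  # simulate a malformed field
        else:
            yield record


def validate(record):
    """Minimal validation guard: the value must be a finite number."""
    value = record.get("value")
    return isinstance(value, (int, float)) and math.isfinite(value)


def run_experiment(n=1000, drop_rate=0.1, malform_rate=0.1):
    """Push n clean records through the faulty stream and count the damage."""
    clean = [{"id": i, "value": float(i)} for i in range(n)]
    received = list(corrupt_stream(clean, drop_rate, malform_rate))
    rejected = [r for r in received if not validate(r)]
    return {
        "sent": n,
        "received": len(received),
        "dropped": n - len(received),
        "rejected_by_validation": len(rejected),
    }


if __name__ == "__main__":
    print(run_experiment())
```

The useful question is what happens to the records that validation does not catch. If malformed rows reach the model without being rejected or alerted on, that is the weakness to fix first.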

What metrics best indicate AI robustness during chaos tests?

Look beyond accuracy or loss. Focus on system-level metrics like latency, error rates, and throughput under stress. Also track AI-specific indicators such as prediction confidence, output consistency, and degradation patterns when inputs or infrastructure fail. Observability into both the AI model and its runtime environment is crucial to understand how chaos impacts overall reliability.
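These indicators can be computed from two runs over the same requests, one without faults and one with. The sketch below assumes a hypothetical log format (a list of dicts with `latency_ms`, `label`, and `confidence`, where `label` is `None` for a failed request) and uses the nearest-rank definition of a percentile; both are illustrative choices rather than a standard.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile (pct in 0-100) of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(baseline, chaos):
    """Compare a fault-free run with a chaos run over the same requests.

    Consistency and confidence drop are computed only over requests that
    were answered in the chaos run; both are None if nothing was answered.
    """
    pairs = [(b, c) for b, c in zip(baseline, chaos) if c["label"] is not None]
    summary = {
        "p95_latency_ms": percentile([r["latency_ms"] for r in chaos], 95),
        "error_rate": 1 - len(pairs) / len(chaos),
        "output_consistency": None,
        "mean_confidence_drop": None,
    }
    if pairs:
        same = sum(1 for b, c in pairs if b["label"] == c["label"])
        drop = sum(b["confidence"] - c["confidence"] for b, c in pairs)
        summary["output_consistency"] = same / len(pairs)
        summary["mean_confidence_drop"] = drop / len(pairs)
    return summary


# Illustrative logs; in practice these come from your serving telemetry.
BASELINE = [
    {"latency_ms": 40, "label": "cat", "confidence": 0.95},
    {"latency_ms": 42, "label": "dog", "confidence": 0.90},
    {"latency_ms": 38, "label": "cat", "confidence": 0.85},
    {"latency_ms": 41, "label": "dog", "confidence": 0.80},
]
CHAOS = [
    {"latency_ms": 120, "label": "cat", "confidence": 0.90},
    {"latency_ms": 900, "label": None, "confidence": 0.0},
    {"latency_ms": 150, "label": "dog", "confidence": 0.55},
    {"latency_ms": 130, "label": "dog", "confidence": 0.75},
]

if __name__ == "__main__":
    print(summarize(BASELINE, CHAOS))
```

Reporting consistency separately from error rate matters because a request that fails loudly is easier to handle than one that succeeds with a different answer.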

Can chaos engineering reduce AI hallucinations and unexpected outputs?

Chaos engineering can expose conditions that trigger hallucinations or erratic behavior by simulating real-world failures like corrupted inputs or partial model outages. By identifying these triggers early, you can improve model design, add fallback mechanisms, or enhance input validation. While it won’t eliminate hallucinations entirely, it helps build resilience against unexpected outputs in production.