78% of AI Data Assets Exposed by 2024 Demand Immediate Pipeline Defense

Imagine losing control of nearly four out of five AI data assets overnight. That’s the reality enterprises faced by 2024, with 78% of AI implementations suffering critical data exposure. AI security incidents surged 340% in 2024, turning AI pipelines into prime targets for adversarial attacks and data breaches (AI Security Incidents Surge 340% in 2024: A $45 Billion Problem). If your AI pipeline isn’t hardened now, you’re not just at risk; you may already be compromised.

The root cause? Lack of visibility into AI data flows. A shocking 89% of enterprises admit they can’t fully track how data moves through their AI systems. Without this insight, you’re flying blind, unable to detect unauthorized access or data poisoning. Worse, 67% of organizations reported unauthorized access to sensitive training datasets in the past year alone. This isn’t a minor glitch; it’s a systemic failure in governance and monitoring. If you can’t see the attack surface, you can’t defend it. The stakes have never been higher.

How Chain of Attack Techniques Evade Detection: Lessons for Defense

Semantic Tweaks That Fool AI Models

The Chain of Attack (CoA) technique is a masterclass in subtlety. Instead of blunt-force adversarial noise, CoA applies step-by-step semantic modifications to input data, crafting images that look natural to humans but fool AI models with surgical precision. This approach achieves a 98.4% success rate on ViECap and 94.2% on Unidiffuser, two state-of-the-art vision models (Adversarial AI in Late 2025: Current Attacks, Defenses, and Production Threats). These tweaks exploit the models’ blind spots: tiny shifts in color, texture, or context that don’t trigger traditional anomaly detectors but cause misclassification or data poisoning.

This stealthy manipulation means your AI pipeline’s usual defenses (threshold-based alerts, signature detection, or simple input validation) are often blind to the attack. The adversarial inputs blend into legitimate data streams, so the margin for detection is thin. Understanding these semantic tweaks is your first line of defense. If you don’t know how attackers morph data, you can’t build detectors that spot the difference.

Detection Gaps and What They Mean for Your Pipeline

Detection gaps arise because most AI security tools focus on syntactic anomalies (outliers in pixel values or metadata) rather than semantic consistency. CoA’s gradual, context-aware perturbations slip through these cracks. The result? High false-negative rates and a false sense of security. Your pipeline might flag noisy inputs but miss these carefully crafted adversarial samples entirely.

Here’s what that means for your AI pipeline:

| Detection Aspect | Traditional Approach | CoA Exploitation | Impact on Pipeline |
|---|---|---|---|
| Input Validation | Thresholds on pixel-level anomalies | Semantic tweaks evade thresholds | Undetected adversarial inputs enter |
| Anomaly Detection |

3 Core Defense Pillars: Synthetic Data Governance, Pipeline Separation, and Provenance

Declare and Validate Synthetic Data Usage

Synthetic data is a double-edged sword. It boosts training diversity but also expands your attack surface. The first defense is declaration and validation. Every synthetic dataset must come with a clear, documented purpose. What problem does it solve? How was it generated? Without this, you have no basis for trusting it. Validation means running synthetic data through rigorous quality checks: statistical similarity, absence of leakage, and adversarial robustness tests. This step weeds out poisoned or manipulated data before it ever touches your model. Treat synthetic data like a hazardous material: track it, test it, and never mix it casually with production data. According to Serious Insights, declared and validated synthetic data reduces risk and improves auditability.
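The declaration-and-validation step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `SyntheticDeclaration` manifest, the `validate_synthetic` function, and the `max_mean_shift` threshold are all hypothetical names chosen for this example, the similarity test is deliberately crude (mean shift per column), and adversarial robustness testing is left out.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass(frozen=True)
class SyntheticDeclaration:
    """Hypothetical manifest that must accompany every synthetic dataset."""
    name: str
    purpose: str            # what problem the dataset solves
    generation_method: str  # how it was generated


def validate_synthetic(decl, synthetic_rows, real_rows, max_mean_shift=0.5):
    """Return a list of validation failures; an empty list means the dataset passes."""
    failures = []
    if not decl.purpose.strip() or not decl.generation_method.strip():
        failures.append("missing declaration fields")

    # Leakage check: no synthetic row may be an exact copy of a real row.
    leaked = set(map(tuple, synthetic_rows)) & set(map(tuple, real_rows))
    if leaked:
        failures.append(f"{len(leaked)} row(s) leaked from real data")

    # Crude statistical similarity: per-column mean shift, in units of the
    # real column's standard deviation (assumed threshold, tune per dataset).
    for col in range(len(real_rows[0])):
        real_col = [row[col] for row in real_rows]
        synth_col = [row[col] for row in synthetic_rows]
        spread = pstdev(real_col) or 1.0
        shift = abs(mean(synth_col) - mean(real_col)) / spread
        if shift > max_mean_shift:
            failures.append(f"column {col} mean shift {shift:.2f} exceeds {max_mean_shift}")
    return failures
```

A dataset would be admitted to training only when `validate_synthetic` returns an empty list; anything else goes back to its owner with the failure messages attached.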

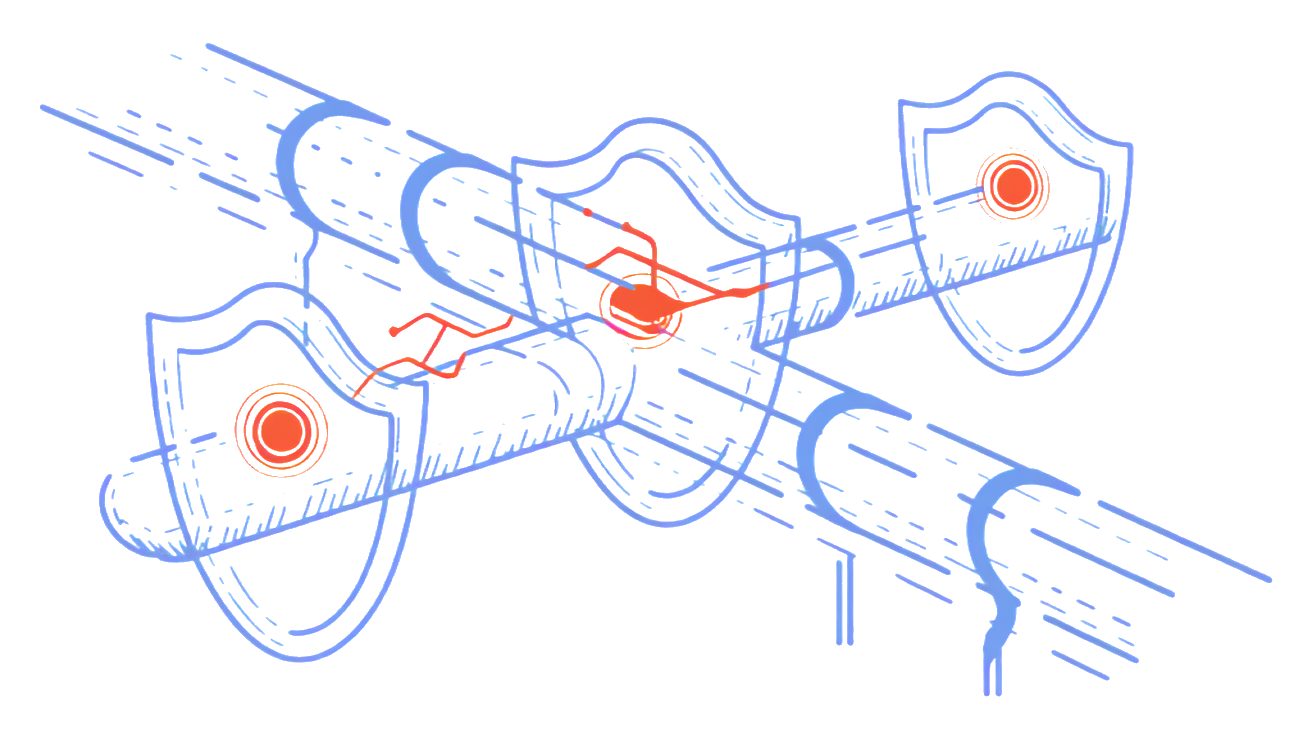

Separate Synthetic from Production Pipelines

Mixing synthetic and real data pipelines is a recipe for disaster. Keep them strictly separated. Synthetic pipelines should run in isolated environments with no direct write access to production models or data stores. This separation limits the blast radius if synthetic data is compromised. It also simplifies troubleshooting and forensic analysis. Use containerization or dedicated cloud projects to enforce this boundary. This way, any adversarial payload hidden in synthetic data stays quarantined. Provenance tags (metadata that tracks data origin and transformations) must be embedded at every stage. They act like digital fingerprints, letting you trace any anomaly back to its synthetic source. This approach is a proven best practice in 2026 AI pipeline hardening.
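One way to make provenance tags traceable is to chain them, so each stage's tag records a digest of the tag before it. The sketch below assumes that design; `ProvenanceTag`, `derive`, and `verify_chain` are hypothetical names for illustration, and a real system would also need to store the tags somewhere tamper-resistant.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class ProvenanceTag:
    origin: str                   # e.g. "synthetic" or "production"
    generation_method: str
    step: str                     # transformation that produced this stage
    parent_digest: Optional[str]  # digest of the previous tag, None at the source

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(payload).hexdigest()


def derive(parent: ProvenanceTag, step: str) -> ProvenanceTag:
    """Create the tag for the next pipeline stage, inheriting the origin."""
    return ProvenanceTag(parent.origin, parent.generation_method, step, parent.digest())


def verify_chain(chain) -> bool:
    """True if the chain starts at a source tag and every link matches its parent."""
    if not chain or chain[0].parent_digest is not None:
        return False
    return all(cur.parent_digest == prev.digest() for prev, cur in zip(chain, chain[1:]))
```

Because every derived tag inherits `origin`, a dataset several transformations downstream still identifies itself as synthetic, and relabeling an intermediate stage breaks the digest chain.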

Implement Provenance Tags and Access Controls

Provenance is your audit trail and your early warning system. Every dataset, synthetic or real, needs immutable provenance tags that record origin, generation method, and usage permissions. Combine this with strict access controls. Only authorized users and systems should handle synthetic data, and their actions must be logged. Role-based access and just-in-time permissions reduce insider threats and accidental contamination. Provenance tags also enable automated policy enforcement: if data lacks proper tags, it gets quarantined or rejected. This governance layer is

Continuous Monitoring and Least-Privilege Access: Enforce Security at Every Stage

Real-Time Behavior Analytics for Training and Inference

Security isn’t set-and-forget. Continuous vigilance is your frontline defense. Real-time behavior analytics track anomalies during both training and inference. This means spotting unusual data flows, unexpected model outputs, or suspicious API calls as they happen. Instead of waiting for a breach, you catch adversarial manipulations early. These analytics feed automated alerts and can trigger immediate containment actions, shrinking the window in which an attacker can operate. In 2026, this proactive monitoring is no longer optional but a baseline requirement for AI pipeline security (SentinelOne).
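As a rough illustration of how such analytics can drive containment, the sketch below scores each new observation (for example, API calls per minute) against a rolling baseline and invokes a callback when it deviates too far. The `BehaviorMonitor` class, its window size, and the z-score threshold of 3.0 are assumptions made for this example, not values from any specific product.

```python
from collections import deque
from statistics import mean, pstdev


class BehaviorMonitor:
    """Hypothetical rolling-baseline monitor for a single numeric metric."""

    def __init__(self, window=50, min_samples=10, threshold=3.0, on_alert=None):
        self.history = deque(maxlen=window)  # recent non-anomalous values
        self.min_samples = min_samples       # warm-up before scoring starts
        self.threshold = threshold           # z-score that counts as anomalous
        self.on_alert = on_alert or (lambda value, score: None)

    def observe(self, value):
        """Score a new observation; return True and fire the alert if anomalous."""
        anomalous = False
        if len(self.history) >= self.min_samples:
            baseline, spread = mean(self.history), pstdev(self.history)
            score = abs(value - baseline) / (spread or 1e-9)
            if score > self.threshold:
                anomalous = True
                self.on_alert(value, score)  # e.g. page on-call, pause the job
        if not anomalous:
            # Flagged values are kept out so a burst does not inflate the baseline.
            self.history.append(value)
        return anomalous
```

In practice `on_alert` would be wired to whatever containment action fits the stage: pausing a training job, revoking a token, or routing traffic away from an inference endpoint.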

Strong Authentication and Access Policies

Locking down access is just as critical as spotting threats. Implement strong authentication methods (multi-factor authentication, hardware tokens, or biometric verification) for every user and system interacting with your AI assets. Combine this with least-privilege access policies that grant only the minimum permissions necessary for each task. This limits damage from compromised credentials or insider threats. Just-in-time access provisioning further tightens control by granting temporary permissions only when needed. Together, these controls form a robust gatekeeper system that keeps adversaries out and insiders accountable.
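The combination of least privilege, just-in-time grants, and MFA can be sketched as a small access broker. Everything here is hypothetical (the `AccessBroker` and `Grant` names, the `mfa_verified` flag supplied by the caller); a production system would delegate these checks to its identity provider rather than hold grants in memory.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    principal: str
    resource: str
    action: str
    expires_at: float  # Unix timestamp after which the grant is void


class AccessBroker:
    """Hypothetical broker: deny by default, allow only unexpired explicit grants."""

    def __init__(self, clock=time.time):
        self._grants = []
        self._clock = clock
        self.audit_log = []  # every decision is recorded, allowed or not

    def grant(self, principal, resource, action, ttl_seconds):
        """Issue a just-in-time grant that expires after ttl_seconds."""
        g = Grant(principal, resource, action, self._clock() + ttl_seconds)
        self._grants.append(g)
        return g

    def is_allowed(self, principal, resource, action, mfa_verified):
        now = self._clock()
        allowed = mfa_verified and any(
            g.principal == principal and g.resource == resource
            and g.action == action and g.expires_at > now
            for g in self._grants
        )
        self.audit_log.append((now, principal, resource, action, allowed))
        return allowed
```

Granting one action on one resource at a time keeps each permission minimal, and the expiry means a stolen credential stops being useful once the task window closes.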

Tagging Models and Data for Sensitivity and Compliance

Tagging isn’t just metadata; it’s a security enabler. Assign sensitivity and compliance tags to every model, dataset, and integration point. These tags inform your monitoring tools and access controls about the risk level and regulatory requirements tied to each asset. For example, a model trained on personal health data gets stricter access and more frequent audits than a generic recommendation engine. Automated policies then enforce quarantines or additional scrutiny on untagged or improperly tagged assets. This granular governance closes gaps adversaries exploit and ensures compliance with evolving regulations (SentinelOne).
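A tag-driven policy check might look like the following sketch. The tag names (`phi`, `general`), role names, and audit intervals in `POLICY` are invented for illustration; the point is only that an asset with a missing or unknown tag is quarantined rather than allowed by default.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy table: sensitivity tag -> (roles allowed, audit interval in days)
POLICY = {
    "phi":     ({"clinical-ml"}, 7),
    "general": ({"clinical-ml", "data-science"}, 90),
}


@dataclass
class Asset:
    name: str
    sensitivity: Optional[str] = None  # None means the asset was never tagged


def evaluate(asset, role):
    """Return 'quarantine', 'deny', or 'allow' for a role requesting an asset."""
    if asset.sensitivity not in POLICY:
        return "quarantine"  # untagged or improperly tagged
    allowed_roles, _ = POLICY[asset.sensitivity]
    return "allow" if role in allowed_roles else "deny"


def audit_interval_days(asset):
    """How often a properly tagged asset should be audited."""
    return POLICY[asset.sensitivity][1]
```

With this table, the health-data model is readable only by the `clinical-ml` role and audited weekly, while the recommendation engine is open to both roles and audited quarterly.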

Integrating FAQs Into Your AI Pipeline Security Strategy

How can I detect adversarial attacks early in my AI pipeline?

Early detection hinges on continuous monitoring paired with anomaly detection systems tuned specifically for AI behavior. Look for subtle shifts in input patterns or output confidence scores that deviate from historical baselines. Integrate automated alerts that flag suspicious data or model responses before they propagate downstream. Combining behavioral analytics with periodic manual reviews helps catch what automated systems might miss. Don’t wait for a breach to react; build detection into every stage of your pipeline.
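One common way to quantify a shift in confidence scores against a historical baseline is the population stability index (PSI). The sketch below is a minimal version that assumes scores lie in [0, 1] and uses ten equal-width bins; the function names are hypothetical, and the frequently cited rule of thumb that a PSI above roughly 0.25 signals a significant shift should be treated as a starting point, not a calibrated threshold.

```python
import math


def histogram(scores, bins=10):
    """Share of scores falling in each equal-width bin over [0, 1]."""
    counts = [0] * bins
    for s in scores:
        counts[min(int(s * bins), bins - 1)] += 1  # clamp 1.0 into the last bin
    total = len(scores)
    return [c / total for c in counts]


def population_stability_index(baseline, current, bins=10, eps=1e-6):
    """PSI = sum over bins of (cur - base) * ln(cur / base); eps avoids log(0)."""
    base_hist = [max(b, eps) for b in histogram(baseline, bins)]
    cur_hist = [max(c, eps) for c in histogram(current, bins)]
    return sum((c - b) * math.log(c / b) for b, c in zip(base_hist, cur_hist))
```

A pipeline could compute this per batch of inference outputs and raise an alert when the value crosses the chosen threshold, for instance when a model that normally answers with high confidence suddenly produces many mid-range scores.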

What are best practices for managing synthetic data securely?

Treat synthetic data with the same rigor as real data. Implement strict tagging and provenance tracking so you always know the origin and purpose of each synthetic dataset. Enforce policies that limit how synthetic data is generated, shared, and used in training or testing. Regularly audit synthetic data for biases or artifacts that adversaries could exploit. Remember, synthetic data governance isn’t just compliance; it’s a frontline defense against poisoning and evasion attacks.

Which access controls are critical to prevent unauthorized AI data access?

Granular role-based access control (RBAC) is non-negotiable. Limit data access by job function and enforce the principle of least privilege. Combine RBAC with multi-factor authentication (MFA) and session monitoring to reduce insider threats and credential misuse. Automated policy enforcement should quarantine or flag any access attempts that deviate from normal patterns. The tighter your access controls, the fewer opportunities adversaries have to infiltrate your AI pipeline.

Addressing these common concerns head-on not only strengthens your defenses but also accelerates secure AI deployment. When your team understands the why and how, implementation becomes a shared priority, not just a checkbox.