The Challenge of Interpretability in AI Safety

Interpretability is essential for AI safety because it enables you to understand how large language models generate outputs, revealing internal mechanisms that could produce deception, bias, or harmful content. Without clear insight into a model’s decision-making process, detecting these risks remains guesswork. This lack of transparency impedes efforts to audit models effectively and to enforce regulatory compliance. As models grow larger and more complex, the need for precise interpretability tools becomes urgent to ensure that AI systems behave as intended and do not cause unintended harm. For a detailed overview of current capabilities, see What We Can Actually See Inside LLMs Now.

Previous interpretability methods have struggled with scale and clarity, often producing abstract or partial explanations that do not translate into actionable safety measures. Techniques like feature attribution or probing provide limited visibility into the millions of parameters and their interactions. This opacity has hindered practical auditing and reduced confidence in deploying models in sensitive contexts. Sparse autoencoders, by contrast, offer a path to extract millions of discrete, interpretable features, transforming interpretability from theoretical research into a practical audit tool, as discussed in LLM Interpretability as an Audit Tool. This breakthrough sets the stage for the next section on how sparse autoencoders reveal these features and their implications for AI safety.

The Breakthrough: Extracting 34 Million Features from Claude Using Sparse Autoencoders

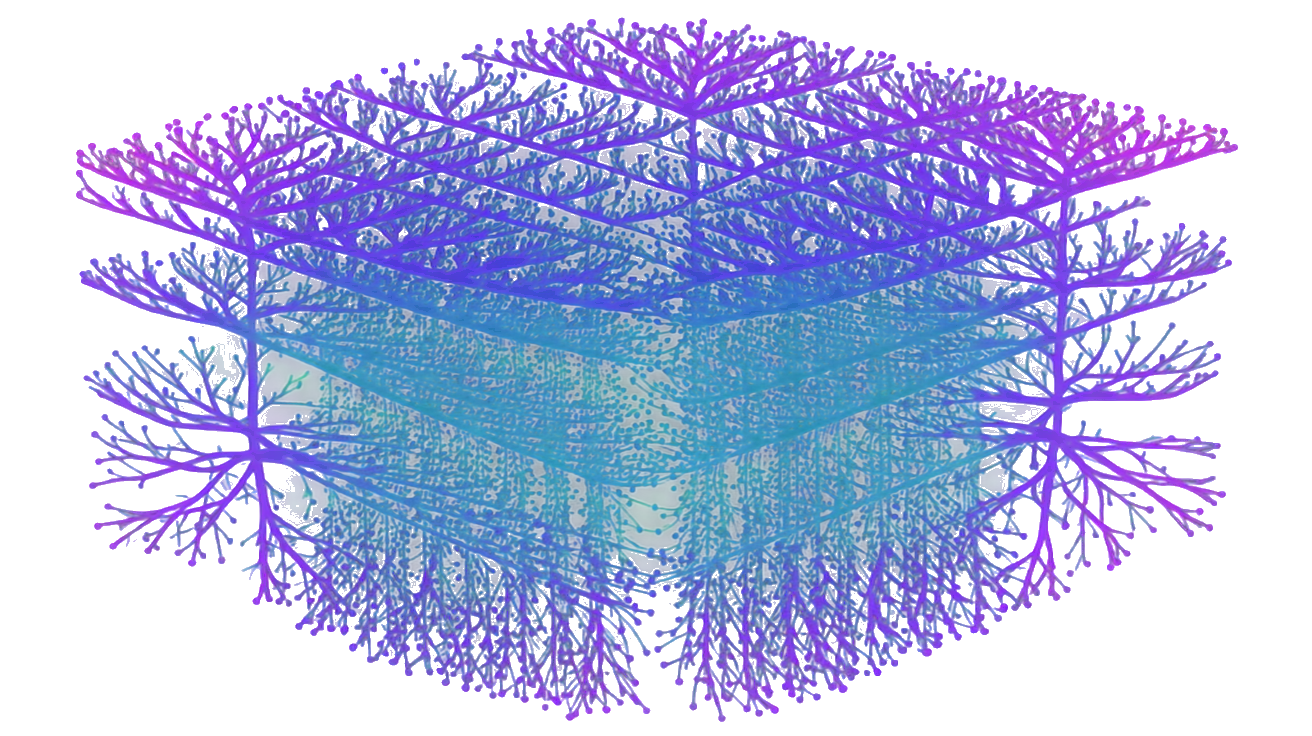

Sparse autoencoders are neural networks designed to learn efficient, interpretable representations by enforcing a sparsity constraint on their hidden layer. Only a small subset of hidden units activates for each input, which isolates distinct features that tend to correspond to meaningful concepts within the model’s internal states. Anthropic applied sparse autoencoders to Claude 3 Sonnet, training a dictionary of roughly 34 million features whose reconstruction explains at least 65% of the variance in the model’s activations while activating fewer than 300 features per token on average. This sparsity yields a compact mapping of the model’s internal processes in which many features are monosemantic and human-understandable, a significant improvement over dense, entangled representations that obscured interpretability (Anthropic: Scaling Monosemanticity).
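To make the mechanism concrete, here is a minimal sketch of a sparse autoencoder's forward pass, together with the two metrics quoted above (active features per token and fraction of variance explained). It assumes a single ReLU hidden layer and a plain L1 sparsity penalty, which may differ from the exact objective Anthropic used. The function names, toy dimensions, and random untrained weights are illustrative assumptions, and plain Python lists stand in for the array library a real implementation would use.

```python
import random

def sae_forward(x, W_enc, b_enc, W_dec, b_dec):
    """Encode one activation vector into sparse features, then reconstruct it."""
    # Encoder: one ReLU unit per dictionary feature, so feature activations are >= 0.
    f = [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
         for row, b in zip(W_enc, b_enc)]
    # Decoder: bias plus each active feature's activation times its direction.
    x_hat = list(b_dec)
    for fi, direction in zip(f, W_dec):
        if fi > 0.0:
            for j, dj in enumerate(direction):
                x_hat[j] += fi * dj
    return f, x_hat

def sae_loss(x, f, x_hat, l1_coeff):
    """Squared reconstruction error plus an L1 penalty that pushes features to zero."""
    mse = sum((a - b) ** 2 for a, b in zip(x, x_hat))
    return mse + l1_coeff * sum(f)

def active_feature_count(f):
    """L0 sparsity: how many features fire on this token."""
    return sum(1 for v in f if v > 0.0)

def fraction_variance_explained(xs, x_hats):
    """1 - (residual sum of squares) / (total sum of squares around the mean)."""
    d = len(xs[0])
    mean = [sum(x[j] for x in xs) / len(xs) for j in range(d)]
    residual = sum((x[j] - xh[j]) ** 2 for x, xh in zip(xs, x_hats) for j in range(d))
    total = sum((x[j] - mean[j]) ** 2 for x in xs for j in range(d))
    return 1.0 - residual / total

# Toy sizes and random, untrained weights: the printed numbers carry no meaning.
rng = random.Random(0)
d_model, n_features = 4, 16
W_enc = [[rng.gauss(0, 0.5) for _ in range(d_model)] for _ in range(n_features)]
W_dec = [[rng.gauss(0, 0.5) for _ in range(d_model)] for _ in range(n_features)]
b_enc = [0.0] * n_features
b_dec = [0.0] * d_model

xs = [[rng.gauss(0, 1) for _ in range(d_model)] for _ in range(8)]
results = [sae_forward(x, W_enc, b_enc, W_dec, b_dec) for x in xs]
x_hats = [x_hat for _, x_hat in results]

print("active features per token:", [active_feature_count(f) for f, _ in results])
print("fraction of variance explained:", fraction_variance_explained(xs, x_hats))
```

Training would minimize `sae_loss` over many activation vectors. The L1 coefficient trades reconstruction quality against how few features fire per token.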

These extracted features cover a wide range of categories, including entities, code structures, and critically, safety-relevant signals such as deception, sycophancy, bias, code backdoors, and dangerous content. This granularity lets you inspect how Claude represents and processes potentially harmful or manipulative behavior, making it a practical tool for auditing and enforcing safety measures. By turning mechanistic interpretability into a scalable, actionable framework, sparse autoencoders directly address the challenges outlined in What We Can Actually See Inside LLMs Now and enable the kind of rigorous oversight necessary for compliance, as discussed in LLM Interpretability as an Audit Tool. The next section covers the tooling and validation work that make this analysis practical.

Why Mechanistic Interpretability Is Now Practical for Large Language Models

From Theory to Scalable Tools

Mechanistic interpretability has transitioned from theoretical frameworks to practical, scalable tools through advances in visualization and analysis software. Key tools include:

- TransformerLens: Enables detailed inspection of attention patterns and neuron activations across transformer layers.

- SAE-Vis: Visualizes sparse autoencoder features, highlighting discrete, monosemantic concepts within model activations.

- Neuronpedia: Provides a searchable database of neuron functions and feature mappings, accelerating feature identification and cross-model comparisons.

These tools allow you to analyze millions of features efficiently, making mechanistic interpretability feasible for large models like Claude. This scalability supports rigorous safety audits and compliance checks, as outlined in LLM Interpretability as an Audit Tool. The practical availability of these tools marks a turning point from abstract research to actionable oversight, addressing challenges discussed in What We Can Actually See Inside LLMs Now.

Cross-Lingual and Multimodal Feature Validation

Sparse autoencoder features have been validated across multiple languages and modalities, confirming their robustness and generality. Anthropic’s research tested features in:

- English, Japanese, Chinese, Greek, Vietnamese, Russian

- Image inputs integrated with language features

This cross-lingual and multimodal validation suggests that interpretability tools apply beyond English text, which is crucial for global AI safety standards. It also supports detection of harmful or biased behavior regardless of input language or format, enhancing audit effectiveness. Reliability gains of the kind reported in Hallucination Rates Dropped From 20% to Under 4% are one area where such tools may contribute.
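A cross-lingual check of this kind can be sketched as follows: record one feature's activations on translations of the same prompt, then report the languages in which it never fires. The function name, the threshold, and the activation values are all made up for illustration and are not taken from Anthropic's data.

```python
def languages_where_feature_fires(acts_by_language, threshold):
    """Map each language to True if the feature's peak activation exceeds threshold."""
    return {lang: max(acts) > threshold for lang, acts in acts_by_language.items()}

# Made-up per-token activations of one feature on translations of the same prompt.
acts_by_language = {
    "English": [0.0, 0.1, 4.2, 3.9],
    "Japanese": [0.0, 3.1, 2.8],
    "Russian": [0.2, 0.0, 0.3],
}

fires = languages_where_feature_fires(acts_by_language, threshold=1.0)
missing = [lang for lang, hit in fires.items() if not hit]
print("fires:", fires)
print("languages to investigate:", missing)
```

A feature that fires in some languages but not others is a candidate for closer inspection before it is relied on in an audit.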

This practical, validated framework for mechanistic interpretability sets the stage for exploring its broader implications on AI safety and regulatory compliance.

Implications for AI Safety and Regulatory Compliance

Detecting Safety-Related Features

The roughly 34 million features extracted from Claude include critical safety-related categories such as deception, sycophancy, bias, code backdoors, and dangerous content, as documented in Anthropic’s research (Anthropic Research). This granularity enables you to detect and analyze harmful behaviors at a mechanistic level rather than relying on surface-level outputs or heuristic tests. By locating where these risks show up internally, you can design targeted interventions to mitigate them before deployment. This capability directly addresses the interpretability challenges outlined in What We Can Actually See Inside LLMs Now and turns mechanistic interpretability into a practical safety tool, as further discussed in LLM Interpretability as an Audit Tool.
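Feature-level detection can be sketched as a watchlist check over per-token feature activations. The feature ids, labels, threshold, and sparse dictionary layout below are hypothetical. In practice the labels would come from a feature-labeling process, and the activations from a trained sparse autoencoder.

```python
from dataclasses import dataclass

@dataclass
class Flag:
    token_index: int
    feature_id: int
    label: str
    activation: float

def flag_tokens(feature_acts_per_token, watchlist, threshold):
    """Return a Flag for every watched feature whose activation exceeds threshold."""
    flags = []
    for t, acts in enumerate(feature_acts_per_token):
        for fid, label in watchlist.items():
            a = acts.get(fid, 0.0)  # features absent from the sparse dict did not fire
            if a > threshold:
                flags.append(Flag(t, fid, label, a))
    return flags

# Hypothetical feature ids and labels; one sparse {feature_id: activation} dict per token.
watchlist = {101: "deception", 202: "sycophancy"}
feature_acts_per_token = [
    {7: 1.2},
    {101: 0.4, 7: 0.3},
    {202: 2.5, 101: 3.0},
]

flags = flag_tokens(feature_acts_per_token, watchlist, threshold=1.0)
for flag in flags:
    print(flag)
```

Each `Flag` records where a watched feature fired and how strongly, which is the raw material for a human review step rather than a verdict in itself.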

Moreover, the ability to track safety-relevant features coincides with measurable improvements in model reliability, such as the reduction in hallucination rates from 20% to under 4% (Hallucination Rates Dropped From 20% to Under 4%). This suggests that mechanistic insights gained through sparse autoencoders need not remain theoretical and can support concrete safety gains. You can therefore use these features to continuously monitor and refine model behavior, supporting safer AI deployment in high-stakes environments.

Meeting EU AI Act Transparency Requirements

The EU AI Act sets transparency obligations for high-risk AI systems: under Article 13, they must be designed so that deployers can interpret their output and use it appropriately, with accompanying information on capabilities, limitations, and risks (EU Digital Strategy). Sparse autoencoder-derived features can supply detailed, interpretable evidence in support of these obligations. By exposing discrete features linked to safety risks, you can produce audit trails and documentation of the kind regulators ask for. This level of mechanistic interpretability supports compliance beyond generic explanations, offering concrete evidence about internal model processes.

This transparency also facilitates external audits and third-party verification, critical components of regulatory frameworks. The practical interpretability tools discussed in LLM Interpretability as an Audit Tool enable you to meet these requirements efficiently, reducing regulatory friction and building trust with stakeholders. Together, these advances position mechanistic interpretability as a foundational element for responsible AI governance and safety assurance.

These implications highlight the potential of sparse autoencoders for AI safety and compliance. Next, we look at how these interpretability tools fit into safety research and production practice.

Advancing AI Safety Research and Production Practices

Using Interpretability as an Audit Tool

Mechanistic interpretability tools have become indispensable for auditing large language models in safety-critical contexts. By revealing discrete, monosemantic features within models like Claude, these tools enable researchers and engineers to move beyond surface-level testing and heuristic evaluations. Instead, you can inspect the internal representations that drive model behavior, identifying potential failure modes or unsafe patterns before deployment. This capability transforms interpretability from a theoretical exercise into a practical audit framework, as detailed in LLM Interpretability as an Audit Tool. It empowers teams to conduct systematic, repeatable safety assessments that align with regulatory expectations and internal risk management protocols.

This approach also facilitates collaboration between research and production teams by providing a shared, transparent language for discussing model behavior. It bridges gaps between abstract model internals and concrete safety concerns, accelerating the identification and mitigation of risks. For a broader context on how interpretability reveals model mechanisms, see What We Can Actually See Inside LLMs Now. Overall, interpretability as an audit tool strengthens the feedback loop between model development, safety validation, and operational deployment.

Reducing Hallucination and Operational Risks

Mechanistic insights derived from sparse autoencoders can contribute to reducing hallucination rates and other operational risks in deployed AI systems. By pinpointing features associated with unreliable or unsafe outputs, you can implement targeted interventions such as fine-tuning, feature suppression, or dynamic monitoring. Hallucination rates have reportedly fallen from 20% to under 4% in practical settings, which illustrates the real-world impact that interpretability-driven safety measures aim for (Hallucination Rates Dropped From 20% to Under 4%).
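Feature suppression, one of the interventions mentioned above, can be sketched as removing a single feature's contribution from an activation vector. Clamping feature i to zero and keeping the autoencoder's reconstruction error unchanged is equivalent to subtracting that feature's activation times its decoder direction. The function name and toy numbers are assumptions for illustration. This is not presented as any lab's production method.

```python
def suppress_feature(x, f, W_dec, feature_id):
    """Return x with one feature's decoder contribution removed.

    x: original activation vector
    f: feature activations produced by the sparse autoencoder for x
    W_dec: one decoder direction (list of floats) per feature
    """
    fi = f[feature_id]
    direction = W_dec[feature_id]
    return [xj - fi * dj for xj, dj in zip(x, direction)]

# Toy example: feature 0 fires with activation 0.5 along direction [2.0, 0.0].
x = [1.0, 2.0]
f = [0.5, 0.0]
W_dec = [[2.0, 0.0], [0.0, 1.0]]

patched = suppress_feature(x, f, W_dec, feature_id=0)
print(patched)  # [0.0, 2.0]
```

Because only the chosen feature's direction is edited, everything the autoencoder failed to reconstruct passes through untouched, which keeps the intervention narrow.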

Beyond hallucinations, interpretability tools help detect subtle failure modes like sycophantic behavior or latent biases that traditional evaluation methods might miss. This proactive identification reduces operational risks, improves user trust, and supports safer AI integration across industries. Together, these advances establish mechanistic interpretability as a cornerstone of robust AI safety research and production practices. The next section examines DeepMind’s Gemma Scope, a second large-scale application of sparse autoencoders.

DeepMind’s Gemma Scope: Scaling Sparse Autoencoders to 30 Million Features

Gemma 2 Model and Compute Requirements

DeepMind’s Gemma Scope project applied sparse autoencoders at large scale to the Gemma 2 language models, releasing more than 400 trained sparse autoencoders that together contain over 30 million learned features (DeepMind Blog). Training them required approximately 15% of the total compute used to train the 9-billion-parameter Gemma 2 model, a significant but feasible resource investment for large-scale mechanistic interpretability. The project also involved storing around 20 petabytes of model activations, underscoring the data-intensive nature of this approach. These technical demands show that sparse autoencoder analysis can scale to openly released, production-grade models, moving interpretability beyond small-scale experiments.
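The storage figure is easier to reason about with a back-of-the-envelope estimate: bytes stored grow as tokens times activation sites times model width times bytes per value. The sketch below uses hypothetical inputs chosen only to show the arithmetic. They are not Gemma Scope's actual token counts, site counts, or precision.

```python
PETABYTE = 10 ** 15  # decimal petabyte, in bytes

def activation_storage_bytes(n_tokens, n_sites, d_model, bytes_per_value):
    """Bytes needed to store one d_model-wide vector per token per activation site."""
    return n_tokens * n_sites * d_model * bytes_per_value

# Hypothetical inputs, for illustration only.
example_bytes = activation_storage_bytes(
    n_tokens=1_000_000_000,  # tokens passed through the model
    n_sites=10,              # layers or sublayers whose activations are saved
    d_model=4096,            # width of each activation vector
    bytes_per_value=4,       # 32-bit floats
)
print(example_bytes / PETABYTE, "PB")
```

Even these modest assumed inputs land at a sizable fraction of a petabyte, which shows how saving activations at many sites over billions of tokens reaches the petabyte range.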

This scale of analysis aligns with the practical interpretability frameworks discussed in What We Can Actually See Inside LLMs Now and LLM Interpretability as an Audit Tool. By leveraging substantial compute and storage, Gemma Scope provides a detailed, monosemantic feature map that supports rigorous safety evaluations and continuous monitoring. The project’s success confirms that mechanistic interpretability can be integrated into real-world model development pipelines, enabling safety teams to detect and mitigate risks with unprecedented granularity.

Implications for the Safety Community

Gemma Scope’s demonstration of large-scale sparse autoencoder feasibility marks a critical milestone for the AI safety community. With more than 30 million features spread across its suite of sparse autoencoders, it enables fine-grained detection of safety-relevant behaviors, including subtle failure modes that traditional testing might overlook. This capability enhances the precision of audits and supports proactive interventions to reduce operational risks, such as hallucinations or bias, complementing the improvements documented in Hallucination Rates Dropped From 20% to Under 4%.

Moreover, Gemma Scope sets a precedent for transparency and accountability in AI development by providing interpretable evidence that can help satisfy regulatory demands and stakeholder scrutiny. Its resource requirements, while substantial, are within reach for leading AI labs, suggesting that wider adoption of sparse autoencoder interpretability is plausible. This progress strengthens the foundation for mechanistic interpretability as a core component of AI governance, safety assurance, and compliance frameworks. The concluding section turns to next steps for researchers, engineers, and policymakers.

Conclusion: Next Steps for Researchers, Engineers, and Policymakers

Integrating Interpretability into AI Development

Researchers and engineers must prioritize embedding sparse autoencoder interpretability into AI development workflows to achieve safer, more reliable models. Incorporating mechanistic interpretability tools early in the training and fine-tuning phases enables continuous monitoring of millions of discrete features, allowing you to detect and address risks such as hallucinations, bias, and deceptive behaviors before deployment. This proactive approach moves beyond heuristic testing and surface-level evaluation, as detailed in What We Can Actually See Inside LLMs Now. Engineering teams should adopt scalable visualization and analysis platforms to maintain transparency throughout the model lifecycle, reinforcing the feedback loop between development and safety validation. The measurable reductions in hallucination rates documented in Hallucination Rates Dropped From 20% to Under 4% demonstrate the tangible benefits of this integration.

Policy and Compliance Considerations

Policymakers and compliance officers should recognize sparse autoencoder interpretability as a critical enabler of AI transparency and accountability. Regulatory frameworks like the EU AI Act demand detailed evidence of model behavior and risk management, which mechanistic interpretability can provide through discrete, monosemantic feature mappings. Incorporating these tools into audit protocols supports rigorous oversight and third-party verification, reducing regulatory uncertainty and fostering trust among stakeholders. As outlined in LLM Interpretability as an Audit Tool, this interpretability paradigm shifts compliance from abstract reporting to concrete, actionable insights. Policymakers should encourage standards that mandate or incentivize the use of scalable interpretability techniques to ensure responsible AI deployment globally.

Together, these next steps position sparse autoencoder interpretability as a foundational technology for the future of AI safety and governance.