How Vector Database Choice Directly Impacts AI App Latency and Accuracy

Imagine your AI app’s response time tripling just because of the vector database you picked. Or worse, the accuracy of your app’s results slipping noticeably, frustrating users and skewing outcomes. These aren’t hypothetical risks. Depending on the workload, the choice of vector database can mean latency differences of up to 3x and accuracy drops of more than 10% in real-world deployments. For any AI-driven product where speed and precision matter, that gap is hard to ignore.

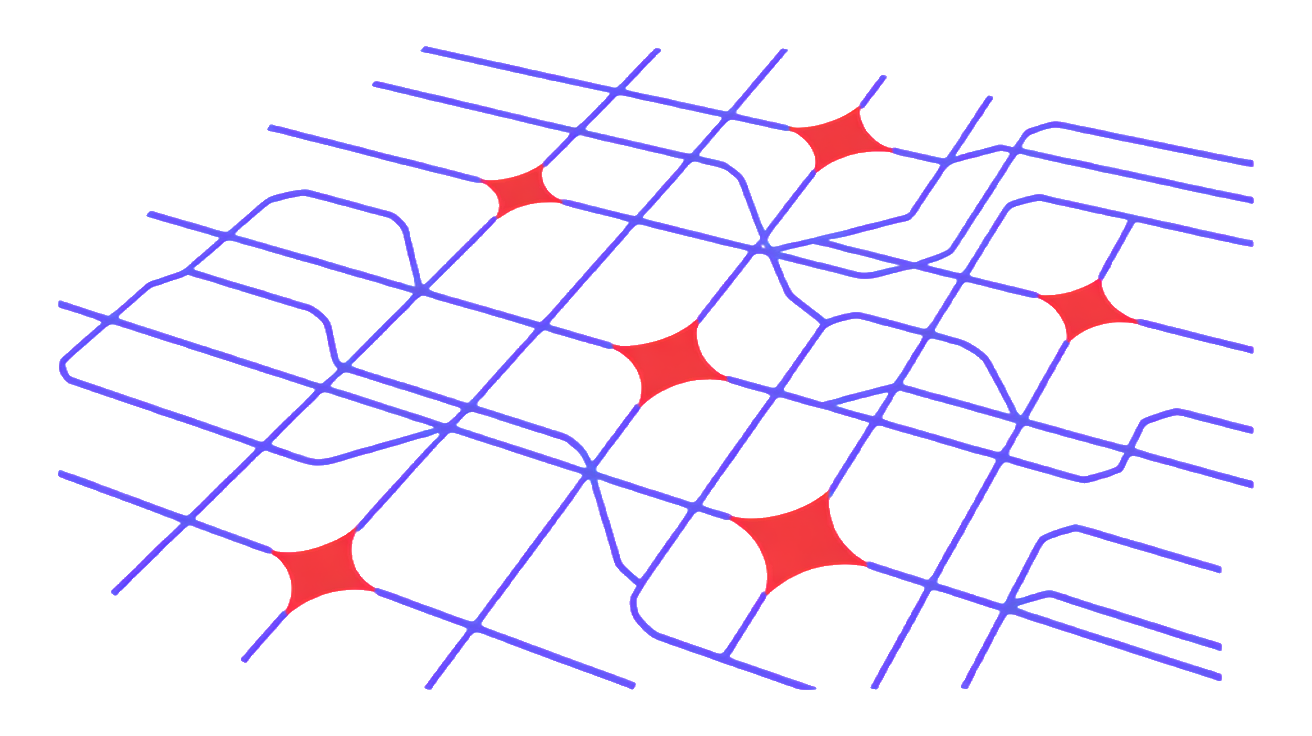

Why does this happen? Each vector database makes different choices about indexing algorithms, distance metrics, and query optimizations, and exposes different tuning knobs. Some prioritize ultra-fast retrieval at the expense of recall, while others deliver higher recall at the cost of slower responses. Your app’s architecture, data distribution, and query patterns interact with these trade-offs in complex ways. In short, the vector database is not just a backend detail: it directly shapes your AI’s user experience and model effectiveness. Choosing the right one means balancing your specific needs, whether that’s lightning-fast searches, top-tier accuracy, or a middle ground. Ignore this, and you risk bottlenecks and degraded results that no amount of model tuning can fix.
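To see the recall side of this trade-off on your own data, compare what a fast search returns against an exact brute-force search. The sketch below computes recall@k. Its "approximate" search is a deliberately crude stand-in that scores only a random fraction of the vectors. It is not HNSW or any real index. The function names and the synthetic data are illustrative assumptions.

```python
import math
import random


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def exact_top_k(query, vectors, k):
    """Brute-force ground truth: score every vector, return the k best IDs."""
    ranked = sorted(vectors, key=lambda vid: cosine(query, vectors[vid]), reverse=True)
    return ranked[:k]


def subset_top_k(query, vectors, k, fraction, rng):
    """Toy stand-in for approximate search: scores only a random fraction of vectors."""
    sample_size = max(k, int(len(vectors) * fraction))
    sampled = rng.sample(list(vectors), sample_size)
    ranked = sorted(sampled, key=lambda vid: cosine(query, vectors[vid]), reverse=True)
    return ranked[:k]


def recall_at_k(approx_ids, exact_ids):
    """Fraction of the true top-k neighbors that the approximate search returned."""
    return len(set(approx_ids) & set(exact_ids)) / len(exact_ids)


if __name__ == "__main__":
    rng = random.Random(42)
    dim, n, k = 16, 2000, 10
    vectors = {i: [rng.gauss(0, 1) for _ in range(dim)] for i in range(n)}
    query = [rng.gauss(0, 1) for _ in range(dim)]

    truth = exact_top_k(query, vectors, k)
    for fraction in (0.1, 0.5, 1.0):
        approx = subset_top_k(query, vectors, k, fraction, rng)
        print(f"scanned {fraction:.0%} -> recall@{k} = {recall_at_k(approx, truth):.2f}")
```

Scanning less data is faster but misses true neighbors. Real ANN indexes make the same trade far more cleverly, usually controlled by search-time parameters.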

Feature Matrix: Indexing, Scalability, Hybrid Search, and Cloud Support Compared

| Feature | Pinecone | Weaviate | Qdrant |
|---|---|---|---|
| Indexing Algorithms | Uses proprietary approximate nearest neighbor (ANN) indexes tuned for fast, scalable search; implementation details are not fully public. Supports live upserts without full reindexing. | Uses HNSW by default, with flat (brute-force) and dynamic index types as alternatives. Vectorizer modules and the schema define how objects are vectorized. | Uses HNSW for dense vectors and an inverted index for sparse vectors. Payload filtering is integrated into the HNSW search itself. |
| Horizontal Scalability | Designed for managed cloud scaling, automatically handling sharding and replication. Scales smoothly with minimal user intervention. | Supports distributed clusters with sharding and replication, but self-hosted clusters require you to manage nodes. Good for on-prem and hybrid deployments, at the cost of operational overhead. | Built for containerized environments, with a distributed mode that supports sharding and replication. Offers fine-grained control over cluster topology. |
| Hybrid Vector-Metadata Search | Combines vector similarity with metadata filters stored alongside each vector. The filter language supports boolean and range operators. | Strong hybrid search with rich filtering, including geo queries, plus built-in fusion of keyword (BM25) and vector results. Schema-driven metadata enables complex queries. | Supports payload-based filtering tightly coupled to vector search, including range, geo, and nested conditions. Flexible, though without Weaviate’s schema-driven data modeling. |
| Cloud and Platform Support | Fully managed multi-cloud SaaS with integrations for major cloud providers. Offers SDKs in multiple languages and REST APIs. | Open-source with cloud-native deployment options and a managed service. Supports Kubernetes and popular cloud platforms. | Open-source with strong Kubernetes support and cloud-agnostic deployment. Focuses on self-hosting but also offers a managed service. |
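The "hybrid vector-metadata search" row boils down to one idea: restrict candidates by metadata, then rank the survivors by vector similarity. The brute-force sketch below shows that idea. The `Record` layout, field names, and filter arguments (`eq`, `min_values`) are hypothetical. They do not mirror Pinecone’s, Weaviate’s, or Qdrant’s actual query languages.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Record:
    id: str
    vector: list
    metadata: dict = field(default_factory=dict)


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def matches(metadata, eq=None, min_values=None):
    """True if metadata meets every equality and every lower-bound (range) condition."""
    for key, expected in (eq or {}).items():
        if metadata.get(key) != expected:
            return False
    for key, lower in (min_values or {}).items():
        value = metadata.get(key)
        if value is None or value < lower:
            return False
    return True


def filtered_search(records, query, k, eq=None, min_values=None):
    """Pre-filter on metadata, then rank the remaining records by cosine similarity."""
    candidates = [r for r in records if matches(r.metadata, eq, min_values)]
    candidates.sort(key=lambda r: cosine(query, r.vector), reverse=True)
    return [r.id for r in candidates[:k]]


records = [
    Record("a", [0.9, 0.1], {"lang": "en", "year": 2024}),
    Record("b", [0.8, 0.2], {"lang": "de", "year": 2024}),
    Record("c", [0.1, 0.9], {"lang": "en", "year": 2021}),
    Record("d", [0.7, 0.3], {"lang": "en", "year": 2023}),
]

print(filtered_search(records, [1.0, 0.0], k=2, eq={"lang": "en"}, min_values={"year": 2022}))
# ['a', 'd']
```

A full scan makes pre-filtering trivial. Inside an ANN index it is harder: filtering after retrieval can leave fewer than k results. That is why integrating filters into the index traversal, as Qdrant does, matters for filter-heavy workloads.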

Each vector database shines in different areas. Pinecone excels at hassle-free cloud scaling and simple hybrid queries. Weaviate’s schema and filtering capabilities suit complex metadata-rich applications. Qdrant offers flexibility for containerized, self-managed environments with strong filtering tied to indexing. Your choice should align with your project’s operational model and query complexity. For more on production challenges, see Why Most AI Agent Projects Stall Before Production.

Performance Benchmarks: Query Latency and Throughput Across Use Cases

When it comes to real-time recommendations, Pinecone is a strong fit thanks to infrastructure optimized for low-latency vector search. Its managed service design minimizes overhead and keeps query responses smooth as load scales. Weaviate performs well here too, especially when its modular architecture is paired with GPU-backed vectorizer modules, but it may show slightly higher latency under heavy concurrent queries. Self-hosted Qdrant offers solid throughput, though its latency depends heavily on your hardware and deployment configuration.

For semantic search, Weaviate’s rich filtering and hybrid search capabilities give it an edge on complex queries involving metadata. Pinecone maintains fast response times but focuses more on pure vector similarity, while Qdrant balances speed and filtering well, especially when tuned for your specific dataset.

In AI agent memory workloads, where frequent updates and rapid retrieval are critical, Qdrant’s flexible indexing and container-friendly setup make it easy to adapt. Pinecone’s managed environment simplifies scaling but may limit customization. Weaviate’s extensibility supports diverse use cases but requires more tuning to sustain throughput under heavy read/write cycles.

| Use Case | Pinecone | Weaviate | Qdrant |
|---|---|---|---|
| Real-Time Recs | Very low latency, high throughput | Low latency; may rise under heavy concurrency | Variable latency, self-managed |
| Semantic Search | Fast vector similarity | Rich filtering, hybrid search | Balanced speed and filters |
| AI Agent Memory | Managed scaling, less customizable | Extensible, needs tuning | Flexible indexing, container-friendly |

Choosing the right vector database means balancing latency, throughput, and operational control based on your workload patterns.
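These qualitative comparisons are only a starting point. Latency on your own queries is what counts, and it is cheap to measure. The harness below reports p50/p95/p99 latency and sequential queries per second for any search function. `brute_force_search` and its synthetic corpus are hypothetical stand-ins. In practice you would wrap your Pinecone, Weaviate, or Qdrant client call in a function with the same one-argument shape.

```python
import heapq
import random
import statistics
import time


def benchmark(search_fn, queries, warmup=5):
    """Time each query sequentially; return latency percentiles (ms) and queries/sec."""
    for q in queries[:warmup]:
        search_fn(q)  # warm-up calls (caches, connections) are not timed
    latencies = []
    start = time.perf_counter()
    for q in queries:
        t0 = time.perf_counter()
        search_fn(q)
        latencies.append((time.perf_counter() - t0) * 1000.0)
    elapsed = time.perf_counter() - start
    cuts = statistics.quantiles(latencies, n=100)  # 99 percentile cut points
    return {
        "p50_ms": statistics.median(latencies),
        "p95_ms": cuts[94],
        "p99_ms": cuts[98],
        "qps": len(queries) / elapsed,
    }


# Hypothetical stand-in for a real client call: brute-force dot-product top-10.
rng = random.Random(7)
corpus = [[rng.random() for _ in range(32)] for _ in range(1000)]


def brute_force_search(query, k=10):
    scored = ((sum(a * b for a, b in zip(query, v)), i) for i, v in enumerate(corpus))
    return [i for _, i in heapq.nlargest(k, scored)]


queries = [[rng.random() for _ in range(32)] for _ in range(50)]
print(benchmark(brute_force_search, queries))
```

This measures one client issuing queries back to back. Throughput under concurrent load, where the text notes Weaviate may slow down, needs parallel clients, for example via `concurrent.futures.ThreadPoolExecutor`.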

Integration Complexity and Scaling Strategies with Code Examples

SDK maturity and API ergonomics vary significantly across Pinecone, Weaviate, and Qdrant. Pinecone offers a polished, minimalistic SDK that gets you up and running with just a few lines of code. Its client libraries emphasize simplicity, abstracting away much of the complexity around connection management and retries. Weaviate’s SDK is more feature-rich but has a steeper learning curve, especially for advanced filtering and hybrid search. Qdrant strikes a middle ground, providing a flexible REST API and gRPC support that appeals to teams comfortable with container orchestration and custom scaling.

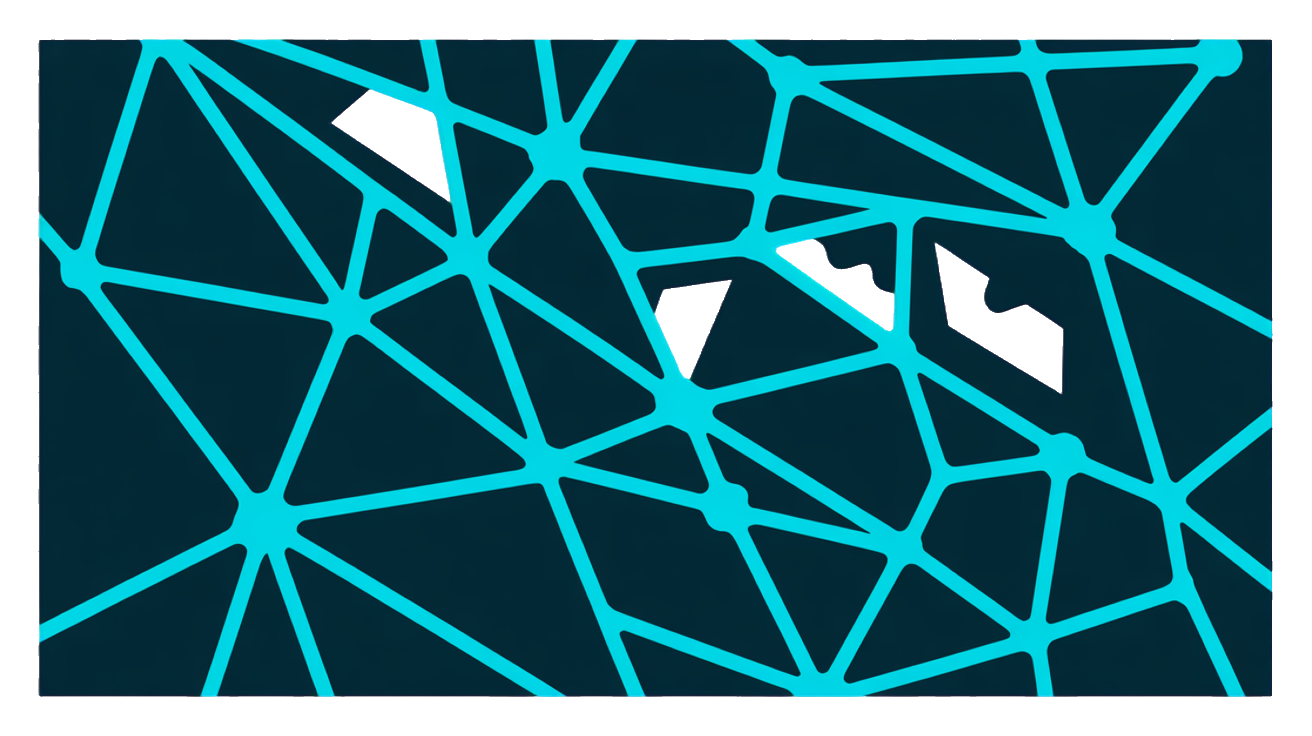

Horizontal scaling strategies differ too. Pinecone handles scaling transparently in its managed environment, so you focus on your queries. Weaviate supports distributed clusters but demands more operational oversight. Qdrant’s container-friendly design lets you scale by adding nodes and managing shards yourself, which suits teams wanting fine-grained control.
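To make "managing shards yourself" concrete, here is a conceptual model of how a distributed vector store splits data and answers queries. Each point is routed to a shard by a stable hash of its ID. A query then fans out to every shard, and the per-shard top-k lists are merged. `ShardedIndex` is a hypothetical class. It is not how Pinecone, Weaviate, or Qdrant literally implement sharding.

```python
import hashlib
import heapq


class ShardedIndex:
    """Hypothetical in-memory model of hash sharding with scatter-gather search."""

    def __init__(self, num_shards):
        self.shards = [dict() for _ in range(num_shards)]  # each shard maps id -> vector

    def _shard_for(self, point_id):
        # A stable hash means the same ID always routes to the same shard.
        digest = hashlib.sha256(str(point_id).encode()).digest()
        return int.from_bytes(digest[:8], "big") % len(self.shards)

    def upsert(self, point_id, vector):
        self.shards[self._shard_for(point_id)][point_id] = vector

    @staticmethod
    def _search_shard(shard, query, k):
        scored = ((sum(a * b for a, b in zip(query, v)), pid) for pid, v in shard.items())
        return heapq.nlargest(k, scored)

    def search(self, query, k):
        # Scatter: every shard returns its local top-k. Gather: merge into a global top-k.
        partial = []
        for shard in self.shards:
            partial.extend(self._search_shard(shard, query, k))
        return [pid for _, pid in heapq.nlargest(k, partial)]


index = ShardedIndex(num_shards=3)
for i, vec in enumerate([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.5, 0.5]]):
    index.upsert(i, vec)
print(index.search([1.0, 0.0], k=2))  # [0, 1]
```

Each shard contributes its own top-k, so the merged result matches a single-node search. The cost is that every query touches every shard. This is one reason adding nodes raises capacity more than it lowers per-query latency.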

Here’s a quick example of batch indexing with Pinecone’s Python SDK:

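```python
# Current Pinecone Python SDK (v3+); replaces the older pinecone.init() pattern.
from pinecone import Pinecone

# Connect with your API key; the environment argument is no longer needed.
pc = Pinecone(api_key="your-api-key")

# Target an existing index whose dimension matches the vectors (3 here).
index = pc.Index("my-index")

# Records as (id, values) tuples; a third element can carry a metadata dict.
vectors = [
    ("id1", [0.1, 0.2, 0.3]),
    ("id2", [0.4, 0.5, 0.6]),
]

index.upsert(vectors=vectors)
```

For Qdrant, batch indexing via the REST API looks like this: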

```bash
# PUT is Qdrant's upsert endpoint for points; wait=true returns once the write is applied.
# Assumes my_collection already exists and is configured for 3-dimensional vectors.
curl -X PUT "http://localhost:6333/collections/my_collection/points?wait=true" \
  -H "Content-Type: application/json" \
  -d '{
    "points": [
      {"id": 1, "vector": [0.1, 0.2, 0.3]},
      {"id": 2, "vector": [0.4, 0.5, 0.6]}
    ]
  }'
```

Failover handling in Weaviate usually combines client-side retries with cluster health checks, reflecting its open, self-managed architecture. Your choice here depends on whether you want managed simplicity or operational flexibility.
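A minimal version of that retry-plus-health-check pattern can be sketched with the standard library alone. `is_ready` probes Weaviate’s readiness endpoint, `/v1/.well-known/ready`. `call_with_failover` is a hypothetical helper, not part of the Weaviate client. It skips nodes that report not ready and backs off exponentially, with jitter, between rounds. The node URLs you would pass in are placeholders for your own cluster.

```python
import random
import time
import urllib.request


def is_ready(base_url, timeout=2.0):
    """Return True if the node's readiness endpoint answers with HTTP 200."""
    try:
        with urllib.request.urlopen(f"{base_url}/v1/.well-known/ready", timeout=timeout) as resp:
            return resp.status == 200
    except OSError:  # covers URLError, HTTPError, connection refused, and timeouts
        return False


def call_with_failover(operation, nodes, health_check, max_attempts=4, base_delay=0.2):
    """Hypothetical helper: try operation(node) on healthy nodes, backing off between rounds."""
    last_error = None
    for attempt in range(max_attempts):
        for node in nodes:
            if not health_check(node):
                continue  # skip nodes that report not ready
            try:
                return operation(node)
            except Exception as exc:  # real code should catch the client's specific errors
                last_error = exc
        if attempt < max_attempts - 1:
            # Full jitter: sleep a random time up to base_delay * 2**attempt seconds.
            time.sleep(random.uniform(0, base_delay * (2 ** attempt)))
    raise RuntimeError(f"all {max_attempts} attempts failed") from last_error
```

Usage might look like `call_with_failover(run_query, ["http://weaviate-0:8080", "http://weaviate-1:8080"], is_ready)`, where `run_query` and the hostnames are yours. Jitter keeps many clients from retrying in lockstep after a node restarts.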

Pricing Models and Cost Efficiency: What You Pay for at Scale

Vector databases don’t just charge for storage and queries. The pricing tiers often reflect a mix of factors: volume of vectors indexed, query throughput, and additional features like hybrid search or multi-region replication. Some platforms offer a straightforward flat rate per vector or per query, while others use more complex models based on resource consumption, such as CPU or memory usage during indexing and querying.

Watch out for hidden costs. Data egress fees can surprise you if your app frequently moves vectors or query results across regions or out of the cloud provider’s network. Also, costs for backups, data retention beyond a certain period, or advanced security features may not be included in the base price. These can add up, especially at scale.

Cost efficiency is about more than just sticker price. Consider the cost-performance tradeoff. A cheaper service with slower query times or less accurate results might force you to overprovision or build additional caching layers, increasing your total cost of ownership. Conversely, a premium option with optimized indexing and query engines could save money by reducing infrastructure needs and improving user experience.

Ultimately, your vector DB budget forecast should factor in expected query volume, vector dimensionality, and operational overhead. Running a small proof of concept with your real workload is the best way to reveal the true cost dynamics before committing.
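Before running that proof of concept, a back-of-envelope estimate helps you size it. The sketch below computes raw float32 vector storage and monthly query volume. The inputs are hypothetical. `index_overhead=1.5` is an assumption for the extra memory that graph indexes such as HNSW add on top of raw vectors. Metadata storage is not included.

```python
BYTES_PER_FLOAT32 = 4


def raw_vector_bytes(num_vectors, dims, bytes_per_value=BYTES_PER_FLOAT32):
    """Raw storage for the vectors alone, before index structures or metadata."""
    return num_vectors * dims * bytes_per_value


def estimate(num_vectors, dims, queries_per_second, index_overhead=1.5):
    """Rough sizing; index_overhead is an assumed multiplier, not a vendor figure."""
    raw = raw_vector_bytes(num_vectors, dims)
    return {
        "raw_gb": raw / 1e9,
        "with_index_gb": raw * index_overhead / 1e9,
        "queries_per_month": queries_per_second * 60 * 60 * 24 * 30,
    }


print(estimate(num_vectors=10_000_000, dims=1536, queries_per_second=50))
```

For example, 10 million 1,536-dimensional float32 vectors take 61.44 GB before any index overhead. At a steady 50 queries per second, a 30-day month comes to 129.6 million queries. Plug figures like these into each vendor’s pricing page to compare them on equal terms.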

Frequently Asked Questions

How do Pinecone, Weaviate, and Qdrant differ in handling large-scale vector data?

Pinecone leans on a fully managed, cloud-native approach that scales seamlessly with minimal ops overhead. It’s great if you want a hands-off experience and predictable performance at scale. Weaviate offers a flexible, modular architecture that supports distributed deployments, making it a solid choice when you need tight control over scaling and customization. Qdrant shines with its open-source roots, allowing deep tuning and on-premises setups, ideal if you want to optimize for cost or compliance. Your choice depends on whether you prioritize ease of scaling, customization, or infrastructure control.

Which vector database offers the best support for hybrid search combining vectors and metadata?

Weaviate stands out here with built-in hybrid search that fuses keyword and vector results and works alongside rich metadata filtering. Its schema-driven design simplifies complex queries mixing structured data and vectors. Pinecone stores metadata alongside each vector and supports filtering on it, but its sparse-dense hybrid search typically requires you to generate and manage sparse vectors yourself, which adds complexity. Qdrant also supports hybrid search with flexible filtering but may need more manual setup to optimize combined queries. If hybrid search is critical, weigh how much integration work you want against out-of-the-box support.

What are common pitfalls when migrating between these vector databases?

Data format incompatibilities and differing index structures often cause headaches during migration. Expect to re-index vectors and adapt your query logic to each platform’s API nuances. Metadata handling varies widely, so plan for schema adjustments and potential data loss if not carefully managed. Performance characteristics can shift, requiring new benchmarks and tuning. Finally, integration points like authentication and scaling mechanisms may need rework. A staged migration with thorough testing is your safest bet to avoid surprises.
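A staged migration is easier to trust when every batch is accounted for. The sketch below copies records in batches, applies an optional metadata transform for schema adjustments, and then reports any IDs missing from the target. `InMemoryTarget`, the record layout, and the `split_tags` transform are hypothetical stand-ins for your real source export and target client. Vectors are copied as-is, so the target index must use the same dimensionality and distance metric.

```python
from itertools import islice


def batched(iterable, size):
    """Yield lists of up to `size` items."""
    it = iter(iterable)
    while batch := list(islice(it, size)):
        yield batch


def migrate(source_records, target, batch_size=100, transform=None):
    """Copy records in batches, optionally reshaping each one; return the IDs written."""
    written = set()
    for batch in batched(source_records, batch_size):
        if transform:
            batch = [transform(r) for r in batch]
        target.upsert(batch)
        written.update(r["id"] for r in batch)
    return written


def verify(source_ids, target):
    """Report how many IDs were expected and which are missing from the target."""
    missing = set(source_ids) - set(target.ids())
    return {"expected": len(set(source_ids)), "missing": sorted(missing)}


class InMemoryTarget:
    """Hypothetical target store used only for this example."""

    def __init__(self):
        self.data = {}

    def upsert(self, records):
        for r in records:
            self.data[r["id"]] = r

    def ids(self):
        return self.data.keys()


def split_tags(record):
    # Example schema adjustment: comma-separated string -> list of strings.
    meta = dict(record["metadata"], tags=record["metadata"]["tags"].split(","))
    return {**record, "metadata": meta}


source = [{"id": f"doc-{i}", "values": [i * 0.1, 1.0], "metadata": {"tags": "a,b"}} for i in range(250)]
target = InMemoryTarget()
migrate(source, target, batch_size=100, transform=split_tags)
print(verify([r["id"] for r in source], target))  # {'expected': 250, 'missing': []}
```

Running the same verification after each stage, and re-running your benchmarks against the new store, catches silent data loss before you cut traffic over.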